Friday, December 29, 2017

Thursday, December 28, 2017

My kind of photo – WILD! Lions, and thunder, and dragons – Well, lions anyhow (one of a matched pair)

I love shooting into the sun, and I was certainly doing that here. It was late afternoon – in the topiary garden at Longwood Gardens outside of Philadelphia – and the sun was low in the sky. I was shooting that lion, one of a pair. I have no idea what I was thinking when I took this shot. Probably something like, “WOW! the SUN, the LION!” And CLICK, it was done.

I doubt that I could see much through the viewfinder. The direct sun was simply too strong. I just pointed the camera in the right direction and hoped that the shot was reasonably framed. And it was. Luck and instinct.

The image as it came from the camera was rather dark and ‘flat’. That’s what happens when the camera is flooded with light. The sensor all but shuts down in ‘self protection’.

Consequently it took a bit of monkeying around to pull a reasonable image out of the photo. Of course, there’s no one way to do this, if you’re going to do it at all. What kind of image do you want? I like that the lion is reasonably well defined; I like that a lot. And the lens flare, it must touch about half the image, upper left and lower right. With all that light in there, there’s no pretending this is anything but a photograph.

Of course, no photograph ever presents the world AS IT REALLY IS. Whatever photorealism is, it isn’t the world as it is. Realism, in photography, as in painting, or writing, is just another style, another way of interacting with the world. Photos such as this add a bit of delirium to the mix.

Women posing for a photographer and having (delicious) fun [#naked]

Over four years ago I went to an exhibition of photographs by the Japanese photographer, Nobuyoshi Araki. Many, but by no means all of them, were of women, often naked, sometimes outrageously so, and sometimes intricately bound in what, I gather, was a traditional Japanese style, though not the sort of tradition widely on display. I wrote three blog posts about Araki, one based on a documentary about his work, which included footage of photo sessions with female models, many or most of whom were not professional models. It was fascinating stuff, especially his (often jovial) relationship with his models during a photo shoot.

I was reminded of that while reading about a Chinese (art) photographer who died this year, Ren Hang (Jenna Wortham in The New York Times). Here's the first paragraph:

Jun Sui didn’t plan to get naked for the Chinese art photographer Ren Hang. But as the day wore on, she loosened up, and the clothes came off. Ren’s shoots could become electrified with the illicitness of being nude, especially when they were in public, where such activity in China guarantees arrest or worse. The atmosphere of adventure created trust, as did the spirit of rebellion. “There was a sense of being free,” Sui told me. In person, Sui is demure, inconspicuous; in Ren’s photographs, her eyes blaze from her crouch between two upright women, her arms snaking between their thighs. “We hide the body in our culture,” Ren once said; in China, it is “a demoralization to show what they think should be private.” Ren, Sui said, encouraged everyone around him to shed that conditioning.

Yes.

“He repeated over and over that nothing was ever planned for photographs,” said Dian Hanson, an editor at Taschen who worked with Ren on a monograph of his photographs that was published earlier this year. “Nothing was planned in his life. He was determined to live in the moment always. There was no thought for the future. In retrospect, one sees that as a plan not to be around for very long.”

While you're at it, you might also look at this brief portrait of Jane Juska, by Maggie Jones.

Also, this post about a Japanese photographer, Nobuyoshi Araki.

Monday, December 25, 2017

The world of children's competitive dance

Several years ago I was hired to take photos at one of these competitions, held in suburban New Jersey. Here's my report, Dance to the Music: the Kids Owned the Day.

The children who enter these competitions train up to 30 hours per week, primarily on weekends and after school. Because children must compete in many styles — hip-hop, ballet, jazz and others — versatility is essential, and training can be rigorous to the point of extremity. Each competition bestows its own regional titles, and bigger events also offer national ones. Studios choose which competitions to attend based on careful consideration of cost, quality and competitiveness. Some students compete nearly every weekend during the season, which runs approximately September to July, and train at intensives and classes during the rest of the year.

There are no official figures about how many children are involved in competition dance nationwide, but the number of national competitions has ballooned into the hundreds since the 1980s. In the late 1970s, one of the first of the organizing companies, Showstopper, held competitions out of the trunk of a station wagon. Last year, 52,000 dancers participated in Showstopper, and its touring fleet included a semi truck that transported trophies alone.

A turning point came in 2011, when Lifetime aired a reality show called “Dance Moms.” A number of dance-themed reality shows premiered in the previous decade — “So You Think You Can Dance,” “Dancing With the Stars” — but “Dance Moms” focused on relatable kids who aspired to be famous for their dancing, not adults. The show followed a Pittsburgh competition team at the Abby Lee Dance Company, reporting breathlessly on the wins and losses suffered by its team of preteens. “Dance Moms” emphasized drink-sloshing and hair-pulling by the team’s parents rather than the particulars of the students’ lives, but it made several young dancers, particularly Maddie Ziegler, now 15, into minor celebrities.

The competition community almost unanimously considers the show in poor taste, but it normalized the idea of child stardom among competition-dance students, teachers and parents. When she was 11, Ziegler was cast by the musician Sia in a music video for her song “Chandelier.” The video featured Ziegler as the sole performer, doing pirouettes, splits and kicks with a series of fierce facial expressions.

When I started dancing professionally four years ago, dancers I worked with would sometimes make one another laugh in rehearsal by whipping out old competition moves: preposterously wide smiles, coquettish shoulder tilts. As adults looking for dance jobs in New York, they had hurried to leave these overblown faces behind, like a newscaster trying to scrub herself of a regional accent. They wanted to be modern dancers, and maximal facial expressions aren’t stylish in the world of concert dance, which is still the purview of college dance programs and conservatories. When competition dancers enter college or seek jobs in the modern dance world, they tend to tone down their “fire,” as one former competition dancer put it, to fit in. She was a national-competition titleholder while in high school, but now she treated her competition past like a secret. She wanted to join a modern dance company, and competition dance is often considered better suited to music videos, concert tours or cruise ships. She felt that some of the companies she wanted to join, which performed exclusively in theaters, looked askance at her background. As mainstream as it has become, competition dance is still a distinct dance subculture, revolving around pop music, hard-hitting choreography and young female adherents. “It’s a different world,” Melinda Wandel, a mother of an 11-year-old competition dancer, told me.

Saturday, December 23, 2017

Saturday Ramble: Ethical criticism and too much to do [Ramble 10]

“Deck the Halls with Double Joy” –

It’s early Saturday morning, December 23, 2017. In a couple of hours I head off to Philadelphia to spend the Christmas weekend with my sister. Monday, Christmas day, we’ll visit Longwood Gardens, a DuPont estate south of Philadelphia with magnificent grounds and a wonderful conservatory.

Lots to do. The Bergen Arches project is going to heat up in the new year. Who knows where that will lead.

And I’ve got a lot of writing to do, some in connection with the Arches project, but most in connection with my intellectual life. High on the list:

- A working paper about the direction of cultural evolution and cultural evolution as a force in history.

- I want to say some more about the problematics of the text, about how standard lit crit bridges the gap between signifier and signified with intention, whereas naturalist criticism has actual mechanisms on offer. And how the vague intentional text (what I’ve been calling the interpretable or hermeneutic text [1]) of standard lit crit leads to the transcendental critic who treats the world as a text. Not good.

- What about ethical criticism? Just what does it do?

I’ve got some quick thoughts about ethical criticism.

First, a 21st century ethical criticism must discard that vague intentional text, the one with hidden meanings that must be closely read. It’s a useless artifact serving no purpose but to prop up a now moribund conception of literary commentary.

Second, back in the mid-1950s, when literary criticism explicitly disavowed, well, criticism, the cultural world was a different place. The literary academy rested on the implicit assumptions of a nationalist criticism in which the cultural valence of the nation, or of each nation, was taken for granted. That fell apart in the 1970s and 80s. Now we’ve got a proliferation of identity criticisms. That’s one thing an ethical criticism needs to deal with.

Third, consider this, the final paragraph of my first “Kubla Khan” essay [2]:

The hermeneutic critic is, ultimately, asking: What is the meaning of life? What is man’s place in the scheme of things? What does this text tell us of that scheme? These are not properly scientific questions and we should not expect a science of man to answer them. But that science must answer closely related questions: What is the nature of the human mind such that it continually inquires into its own nature, into its place in the world? What is the nature of a poem such that it stills, for the moment, such questioning? A science that fails to address such questions may indeed be a science, but it will not be profoundly of man.

For those initial questions I offer a substitute: What is it that makes human life problematic? Yes, life is often difficult and painful, but that in itself is not problematic. What, then, makes life problematic? I’d suggest things like knowledge of death and the contradictory demands and challenges of a complex nervous system, a category that would, I suspect, include knowledge of death, and perhaps everything else as well. That is, what makes life problematic must be understood as arising within the mechanisms of the brain. That’s a challenge for the behavioral sciences, and I think it’s approachable. But that’s a discussion for a different time and place.

The ethical critic is concerned with how one lives through those challenges. That is to say, how does a given text get from here to there? The ethical critic isn’t trying to observe the text “from above” (transcendentally) but is registering how it feels to live in the text.

Wayne Booth speaks to that in The Company We Keep: An Ethics of Fiction (1988). After quoting from a Chekhov story (“Home”) he observes (p. 484):

We all have “this foolish habit,” [liking stories] and we all are by nature caught in the ambiguities that trouble the prosecutor. Yet we are all equipped, by a nature (a “second nature”) that has created us out of story, with a rich experience in choosing which life stories, fictional or “real,” we will embrace wholeheartedly. Who we are, who we will be tomorrow depends thus on some act of criticism, whether by ourselves or by those who determine what stories will come our way – criticism wise or foolish, deliberate or spontaneous, conscious or unconscious: “You may enter; you must go away – and I will do my best to forget you.”

Each culture provides every member with an unlimited number of “natural” choices that seem to require no thought.

“But how,” Booth asks (p. 484), “should we make those choices?” We take advantage of the fact that fiction is a “relatively cost-free offer of trial runs”; the psychologist Keith Oatley speaks of simulation: literary experience simulates life [2]. Booth observes:

If you try out a given mode of life in itself, you may, like Eve in the garden, discover too late that the one who offered it to you was Old Nick himself…. In a month of reading, I can try out more “lives” than I can test in a lifetime.

Ethical criticism aims to help us explore the implications of the simulations we read, or see in films, or even enact in video games.

More later.

[1] From Canon/Archive to a REAL REVOLUTION in literary studies, Working Paper, December 21, 2017, 26 pp., https://www.academia.edu/35486902/From_Canon_Archive_to_a_REAL_REVOLUTION_in_literary_studies

[2] Articulate Vision: A Structuralist Reading of "Kubla Khan", Language and Style, Vol. 8: 3-29, 1985, https://www.academia.edu/8155602/Articulate_Vision_A_Structuralist_Reading_of_Kubla_Khan_

Friday, December 22, 2017

"Love" from Burning Man

‘Love’ by A.Milov. Sculpture for the Burning Man Festival #streetart pic.twitter.com/AiZ1ETyKkh

— Street Art (@GoogleStreetArt) December 22, 2017

Textual closure and literary form

The process whereby word forms, whether spoken, written, or gestured (signed), are linked to meaning/semantics is irreducibly computational.

A complete text is well-formed if and only if its meaning is resolved once the last word form has been taken up.

It is in this context that Roman Jakobson’s poetic function may be considered a principle of literary form.

* * * * *

See Jakobson’s Poetic Function and Literary Form, New Savanna, blog post, September 7, 2017: https://new-savanna.blogspot.com/2017/09/jakobsons-poetic-function-and-literary.html

Jakobson’s poetic function as a computational principle, on the trail of the human mind, New Savanna, blog post, September 19, 2017: https://new-savanna.blogspot.com/2017/09/jakobsons-poetic-function-as.html

Thursday, December 21, 2017

Enjoyment in music

PERSPECTIVE ARTICLE

Front. Psychol., 13 December 2017 | https://doi.org/10.3389/fpsyg.2017.02187

A Neurodynamic Perspective on Musical Enjoyment: The Role of Emotional Granularity

Nathaniel F. Barrett and Jay Schulkin

Introduction

Musical enjoyment is a nearly universal experience, and yet from a neurocognitive and evolutionary standpoint it presents a conundrum. Why do we respond so powerfully to something apparently without any survival value? A variety of explanations for the evolution of music cognition have been offered (e.g., Wallin et al., 2000; Morley, 2013), nevertheless most current neurocognitive theories of its specifically affective aspects do not posit any specially adapted emotional circuitry (but see Peretz, 2006). Rather, it is assumed that whatever processes are responsible for pleasure and emotion in general—be they subcortical, cortical, or both—are also responsible for the thrills of music. Accordingly, the problem of musical enjoyment is to explain how and why these processes are engaged so effectively by musical stimuli.

While this is a perfectly sensible approach, a major obstacle lies in its path: the paradox of enjoyable sadness in music (Davies, 1994; Levinson, 1997; Garrido and Schubert, 2011; Huron, 2011; Vuoskoski et al., 2011; Kawakami et al., 2013; Taruffi and Koelsch, 2014; Sachs et al., 2015). Music frequently elicits experiences of negative emotions, especially sadness, which we nevertheless find deeply gratifying. For neurocognitive perspectives, the implication of this phenomenon is that normal processes for generating emotional responses are not sufficient to explain musical enjoyment, as something special to music must allow us to enjoy negative emotion. How is musically induced sadness different from “normal” sadness such that the former can be enjoyed while the latter cannot?

To make progress on such questions, in this article we propose a theory of musical enjoyment based on implications of a neurodynamic approach to emotion (Pessoa, 2008; Flaig and Large, 2014), which highlights the role of transient patterns of coordinated neural activity spanning multiple regions of the brain. The key advantage of this perspective is its ability to register the possibility that emotional experiences differ not only in kind (happy vs. sad) but also in “granularity,” complexity, or differentiation (Lindquist and Barrett, 2008). Studies indicate that increased emotional granularity functions as a kind of positivity that can meliorate experiences of negative emotions (Smidt and Sudak, 2015). Accordingly, perhaps we can account for enjoyment of negative emotions in music if we can show that these emotions are more finely differentiated than “normal” negative feelings.

Based on this approach, we also propose a general distinction between pleasure, defined here as bursts of positively categorized feeling, and enjoyment, defined as sustained flows of finely differentiated feeling regardless of emotional categorization (cf. Frederickson, 2002). For this perspective, the categorical meaning of an emotional experience (e.g., happy or sad) is closely related to but separable from its positive or negative affective tone, at least insofar as this tone is influenced by granularity.

Neurodynamics, Emotion, and Music

Here “neurodynamic approach” refers to a family of neurocognitive theories that regard transient, large-scale patterns of rhythmically coordinated neural activity as the main vehicles of cognition/emotion (e.g., Freeman, 1997; Bressler and Kelso, 2001, 2016; Varela et al., 2001; Cosmelli et al., 2007; Breakspear and McIntosh, 2011; Sporns, 2011). An especially pertinent feature of this approach is its divergence from traditional notions of functional specialization and localization (Pessoa, 2008, 2014). Broadly speaking, insofar as neurodynamic theories understand cognitive functions as supported by transient, task-specific coalitions rather than stable processing pathways, they tend to affirm the multifunctionality of neural structures at multiple scales (Anderson et al., 2013; see also Hagoort, 2014; Friederici and Singer, 2015). According to this view, which contrasts with the modular approach commonly adopted by computational theories of neural function, the precise functional role of any given neural structure changes according to the context of coordinated neural activity (McIntosh, 2000, 2004; Bressler and McIntosh, 2007). On the other hand, this approach does not herald a return of “equipotentiality,” as it allows for characterizations of the functional “dispositions” of neural structures (Anderson, 2014).

Similarly, with regard to affect and emotion, the neurodynamic perspective can register the importance of distinct structures—e.g., subcortical structures and hedonic “hotspots” (Berridge and Kringelbach, 2015). But it holds that the full range of emotional experience must be understood in terms of continually evolving patterns of globally coordinated neural activity. Thus, while localized structures may have consistent roles in the production of emotional responses, they do not by themselves constitute emotion nor can they be said to govern emotion in any simple way (Flaig and Large, 2014). The point is not just that emotion is the product of the continual interplay of cortical and subcortical dynamics (Panksepp, 2012). Rather the key implication of neurodynamics is that this interplay is constituted by transient patterns of coordinated activity whose dynamic features—especially complexity and continuity—are relevant to the categorization of emotion and affect (cf. Spivey, 2007 on dynamical categorization). Here, we are mainly interested in the possibility that neurodynamic categorizations of emotional content can range in complexity, corresponding to differences of emotional granularity in experience (analogous to the difference between simple and rich color palettes).

It should be noted that this approach encompasses both categorical theories of “basic emotions” (e.g., Panksepp, 1998, 2007) and dimensional theories of “core affect” (Russell, 2003; Barrett et al., 2007). What is essential for present purposes is the way in which the dynamic interplay between subcortical responses and the complex cortical elaboration of emotion (Reybrouck and Eerola, 2017) gives rise to both categorical distinctions (happy vs. sad) and differences of granularity (fine vs. coarse).

Neurodynamic approaches are well-established in the field of music cognition (for reviews see Large, 2010; Flaig and Large, 2014). Among the advantages of a neurodynamic approach is its capacity to register the relationship between bodily movement and music (e.g., Large et al., 2015). This relationship has been indicated by numerous studies of sensorimotor involvement in music perception (e.g., Chen et al., 2008) and must be taken into account by any theory of musical experience, as we briefly indicate below.

However, few attempts have been made to understand musical enjoyment from a neurodynamic perspective (see Chapin et al., 2010; Flaig and Large, 2014). A notable exception is William Benzon's groundbreaking treatise (Benzon, 2001), which anticipates the perspective offered here. One reason for this neglect is the challenge of empirical verification: like neurodynamic theories of consciousness (Seth et al., 2006), neurodynamic theories of musical experience are in need of high temporal-resolution data (e.g., from EEG or MEG) that show how relevant characteristics of neural dynamics change during musical experience (see Garrett et al., 2013). For the current proposal, the key challenge is to find and measure just those variations that correspond to differences of emotional granularity or complexity. Until such methods are developed, studies of emotional differentiation in musical experience must turn to the refinement of self-reporting methods (Juslin and Sloboda, 2010).

more .....

Happy Winter Solstice! from Nina Paley

Happy Winter Solstice! pic.twitter.com/ETXkHTMveJ

— Nina Paley (@ninapaley) December 21, 2017

From Canon/Archive to a REAL REVOLUTION in literary studies

Another working paper, title above, abstract and contents below.

Download at:

Abstract: Canon/Archive straddles the border between the standard interpretive literary criticism that has been in place since World War II and a new naturalist literary study in which literary texts and phenomena are treated as phenomena of the natural world, like language, without prejudice. This naturalist investigation takes the careful analytic description of texts, considered as strings of word forms, as its starting point. Canon/Archive exemplifies a so-called computational criticism in which computers are tools used for analyzing texts, often taken as a corpus of 10s, 100s, or 1000s of texts. Naturalist investigation also includes a computational approach in which computation is seen as the process linking word forms to semantic structures, expression to meaning. I examine two chapters from Canon/Archive, showing how that work can be supplemented by this other approach in which computation is a model for a mental process.

“Revolution” 2

The real revolution is in attending rigorously to the signifiers in the text 3

Computational Criticism in Context 3

Word and Text 6

Moretti and the Stanford Literary Lab: Computational criticism in two senses and the prospect of a new approach to literary studies 9

The Collaboratory 9

Topics and paragraphs 13

The direction of literary history 17

What are the institutional possibilities of a new criticism, a deeply computational one? 19

Appendix 1: Jakobson’s poetic function and the text taken as a string of word forms 22

Literary Form 22

The poetic function as a computational principle 23

Appendix 2: From distant reading to computational criticism: Canon/Archive 25

Wednesday, December 20, 2017

Four phrases coined by H. G. Wells that endure

Adam Roberts, in a post from September:

I'd say that there are four phrases in particular, out of all the many phrases and ideas Wells coined, that have enjoyed the most widespread and enduring afterlife: time machine, League of Nations, atom bomb and the war to end war.

Street Art: Made 514

New Street Art for the Draw the Line Festival in Campobasso Italy by the artist Made_514 pic.twitter.com/49hmJbUKsC

— Street Art (@GoogleStreetArt) December 20, 2017

H. G. Wells and Harvey Weinstein as mama's boys, after a fashion

H. G. Wells and Harvey Weinstein? Two very different guys, to be sure. Weinstein is much in the news these days as a sexual predator. He's a very successful movie producer, twice married. I've seen a bunch of his films, including Pulp Fiction and Kill Bill. I'm tempted to say that Wells is all but forgotten, but I don't know if that's true, though he's certainly not in the news. He was a prolific British writer of the late 19th and 20th centuries, perhaps best known for his science fiction. I've read The Island of Doctor Moreau and The War of the Worlds, but not The Time Machine, though I may have thumbed through a copy. I recall seeing The Outline of History among my father's books, but I never read it. That is perhaps one of the books he'd inherited from his father.

Anyhow, my friend Adam Roberts is gearing up to write a literary biography of Wells and so has been reading through his works in chronological order and blogging about them, starting in February of this year. He's just reached The Book of Catherine Wells (1928).

Amy Catherine Robbins, who had become the second Mrs Wells in 1895 (and who had adopted the name ‘Jane’ at Wells's prompting, for reasons that remain opaque) died in October 1927. She had been diagnosed with an inoperable cancer in the spring of that year, and her health declined very rapidly. Wells returned from Europe to be with her and was present at her death.

Wells decided to curate a memorial volume. Back in the mid 1890s Catherine had been his sometime collaborator, nosing out ideas for short articles and newspaper pieces and helping him realise them, and whilst the bulk of the resulting saleable pieces were written by Wells, she herself sometimes sketched out and wrote up these light-hearted pieces herself. Writing wasn't a career she developed any further than these few sketches, instead devoting herself to being Wells's homemaker and companion, turning a blind eye to his many infidelities and often (as with 1920's The Outline of History) acting as secretary, amanuensis and researcher.

The Book of Catherine Wells is a collection of some of her earlier, mostly rather disposable journalistic essays from the 1890s, with a seven-page introduction by Wells himself (the intro was later collected in H G Wells in Love).

Though Wells does not appear to have been a sexual predator, he certainly was a womanizer, having bedded over 200 other women while married to Catherine. I have no idea about Weinstein's sexual activity other than the predation for which he has all of a sudden become something of a poster boy. Catherine knew about her husband's infidelities but nonetheless remained devoted to him, though it seems we don't really know what she thought about them.

Here's one of Adam's many interesting observations about all this:

But there's something diminishing, it seems to me, in the way it actually panned out in his life: not a sexual liberation so much as a kind of semi-licensed serial bigamy, in which Wells found the thrill of sexual novelty with a string of younger women whilst also positioning his wife as a kind of mother confessor, to whom he could always return and unburden himself. What's wrong with this picture? I don't know. I suppose it's the way it suggests the ways in which he wasn't walking the walk as well as talking the talk—the way, in other words, that his own psyche remained striated by a fundamentally Victorian sexual guilt that needed the absolution of a maternal, home-making, dependable woman to clear Wells's conscience, such that could once again range out into the world of sexual dalliance.

In response, I posted the following on his blog:

I can't help but read this material against the backdrop of the appalling sexual predation among the rich and powerful men that's been paraded across the front pages for the last month or so. Wells is certainly no Harvey Weinstein or Leon Wieseltier, but still, we certainly are bedeviled by sexuality, aren't we – by "we" I mean the race of naked apes.

I can sort of understand penetrating rape, not condone it by any means, not THAT kind of understanding, but understand as something a horny bastard would do (and, yes, I know "it's about power"). What I don't get is these guys, for Weinstein isn't alone in this by any means, forcing women to watch them masturbate. What's up with that?

It's like a three-year-old finds himself with a "stiffy" and is both enormously pleased ("my, aren't we standing proud!") and a bit terrified ("what if it won't go away!?"). So he does what any good three-year-old would do: he shows it to his mommy. Maybe she'll kiss it and make it go away, maybe not. But surely she'll do something!

Catherine Wells as mother confessor seems in the same ballpark. To be sure, a different location in the park, but the same park nonetheless.

I'm tempted to quote Rodney King at this point: "Can we all get along?" Well, there, I gave in to temptation, didn't I? It seems that Wells did get along. Weinstein, not so much.

Monday, December 18, 2017

Monk says... Thelonious that is

Thelonious Monk’s 25 Tips for Musicians (1960) https://t.co/xWGToCzx3O pic.twitter.com/hDtNsTu7QS

— Open Culture (@openculture) December 18, 2017

To Mars, because we can, really

Because right now we think we can't build for a future. That is, we're sticking our collective heads in the sand and saying "Oh, noes!"

The Red Planet

I don’t support going to Mars for practical reasons at all. I think we should plan to go to Mars because it would be a healthy sign that we, as a civilization, are still planning for a future — that we intend to live. Because right now, frankly, we’re not acting as though we do. We’re acting more the way a friend of mine did in the last year of his life: letting the mail pile up unopened, heaping garbage in the house, littering the floor with detritus, no longer bothering to turn over the calendar pages. He’d clearly decided, on some level, to die.

We’re mostly hiding from the horrifying facts mounting ever more unavoidably around us, keeping ourselves zonked out on anything from Xanax to Oxy, immersed in the worlds of Warcraft or Westeros while the actual world is burning. Billionaires are building sumptuous bunkers instead of doing anything that would forestall the deluge or revolution they’re barricading against. Mounting a mission to Mars would be bold and hopeful, a gesture of faith, like planning a vacation to Bali next year when you’re battling cancer.

Ray Bradbury, author of another famous Mars book, called space travel our modern version of cathedral building: a vast, ambitious, multigenerational undertaking, a shared vision to work toward together as a culture. Right now, we don’t have such a vision, or even a culture, for that matter — just a degrading, every-man-for-himself scramble for the last scraps of cash the 1 percent have overlooked, like one of those parking-lot contests where you have to keep your hand on a truck until everyone faints from exhaustion. We could use a worthier project.

Sunday, December 17, 2017

Mind or Machine?

I have, in various posts, expressed skepticism about the long-standing conversation about whether or not the mind is computational. I pay attention to the debate because I have a professional obligation to know something about it. But those discussions don’t tell me anything I can use in my intellectual work, whether it’s analyzing literary texts and movies, or thinking more generally about mind and culture. Those discussions rarely engage with the ideas and observations one uses in more “practical” intellectual work (as if analyzing “Kubla Khan” or King Kong were practical!).

It seems to me that those discussions are fundamentally metaphysical – well, duh! it’s philosophy of mind, no? – but likely ideological and even theological as well. We’ve got thinkers butting heads over fundamental assumptions, but not actually trying to figure anything out. In a way, machine is a proxy for man and all his works while mind is a proxy for God and mystery, or perhaps crass commerce vs. aristocratic noblesse oblige. Maybe both.

It’s not a problem that’s going to be solved. Perhaps, though, it will simply disappear. Spontaneous combustion.

I hope so.

Saturday, December 16, 2017

Emotional intelligence for artificial beings? With a potential application to Shakespeare

2AI Labs, Tim Barber and Mark Changizi, has announced a model of emotion: VICTOR Socio-Emotional AI.

We at 2ai Labs have spent the last decade deciphering the hidden language of emotions, and have developed a unifying theory of emotions, of their meaning and the machinery underlying emotional reasoning. The theory emanates from a first-principles approach to the fundamental issues pre-linguistic animals face in communicating so as to settle disagreements. It has two key pillars.

I. Comprehension of emotions: The technology understands the specific meanings of emotional signals, including (a) what I want, (b) how compromising I feel I am being, (c) my hand strength in a situation, (d) my opinion about my opponent’s hand strength, and (e) my confirmation receipt of my opponent’s signals.

II. Emotional reasoning machinery: The technology consists of an “emotion engine,” and allows the construction of AI personalities to suit one’s needs. The machinery allows personalities to vary along socio-emotionally sensible dimensions, and so one can, for example, vary their level of aggression, willingness to compromise, and degree of hospitality. Social intelligence also requires understanding social currency, or cool, and that a person’s emotions, and an AI’s emotional response, are bets; this is part of VICTOR’s machinery.

This lab note is accompanied by some interesting emoji-type diagrams, which you can see in this tweet from Changizi:

Making varieties of noises under my breath here at the breakfast bar. in an attempt to derive / intuit the space of vocalizations corresponding to the 4D space of emotions (below) emanating from our 2ai first-principles theory on the origins of socio-emotional intelligence. pic.twitter.com/damHDYZcfX— Mark Changizi (@MarkChangizi) December 1, 2017

Color me intrigued. I know little about Tim Barber beyond the snippets available in his CV and the fact that he's smart enough to team up with Changizi. I regard Changizi as one of the best psychological theorists we have, and was happy to blurb his book, Harnessed: How Language and Music Mimicked Nature and Transformed Ape to Man (Benbella, 2011).

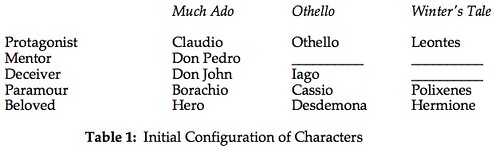

I'm wondering what their theory would tell me about Shakespeare, in particular, what it would tell me about Much Ado About Nothing, a comedy, Othello, a tragedy, and The Winter's Tale, a romance. With these plays Shakespeare has, in effect, presented us with something of an experiment. We have something we can call a Drama Engine. This Drama Engine has a variety of knobs and sliders we can use to set values for the parameters which will determine the matrix, the donnée, for our little play. One of these knobs operates at a very high level, simultaneously determining various aspects of the nascent drama. It is the position of this knob that seems to determine whether the play will be a comedy, a tragedy, or a romance. For the sake of argument let us call it G, for genre.

In each of these plays a man wrongly suspects his beloved of betraying him with another man. The following table shows some of the effects of G as they "trickle down" to the initial situation for each of our plays:

Along the left I've listed five dramatic functions which some character must play. In the columns for each play I've indicated the character that takes on each function. The point of the diagram is that as we move from one genre to the next (in this order), one function seems to disappear. Othello has no mentor, but he has a deceiver; and Leontes has neither a mentor nor a deceiver. What I think is going on is that functions are, in effect, being absorbed into the protagonist. At the play's opening, Othello is senior enough in the world that he has no need of a mentor. Leontes is king in his world, so there is no one higher. As for being deceived about his wife, he does that to himself, no external agent required.

There's more. In the comedy the deception happens between betrothal and the marriage ceremony. In the tragedy the deception happens between the marriage ceremony (which apparently has been a secret one) and the consummation of the marriage. In the romance the marriage has already produced a six-year-old boy and, at the beginning of the play, the wife is pregnant with another child, who turns out to be a girl. There are other things in this complex as well, but this is enough to give you a feel for what's going on.

But how does our Drama Engine work? What parameters does it have, and how is G related to the others? I set out to answer that question some years ago and got no more than part way there: At the Edge of the Modern, or Why is Prospero Shakespeare's Greatest Creation? Part way is better than no way, and at least the issue is on the table. But it's unsatisfying.

Can VICTOR take us further along the path, perhaps even to its end? I note that "other awareness" is at the center of the model, and our protagonists are deficient in its obverse, self-awareness.

Something else. The first chapter, "Quantitative Formalism", of Moretti et al., Canon/Archive: Studies in Quantitative Formalism, suggests that Shakespeare's genre space varies on two dimensions, not the one I've indicated in that table. I'd wanted to say a few words about that in my already too long review, but I ran out of time.

However, I do have an idea of where to look for that other dimension. Both the comedy and the romance, but not the tragedy, have two plots. The two plots run simultaneously in the comedy and successively in the romance. The Claudio/Hero plot in Much Ado About Nothing runs in parallel to the Beatrice/Benedick plot. The Beatrice/Benedick plot is very different in character from the Claudio/Hero plot, and that difference derives from the characters themselves, their temperaments. But I believe that the same psycho-socio dynamic drives both plots. In The Winter's Tale the tragic Leontes/Hermione plot is followed by the comic Perdita/Florizel plot, and that comedy redeems the apparent tragedy, transforming it into a romance.

VICTOR, it's your move.

Friday, December 15, 2017

Thursday, December 14, 2017

Group identity

From an essay, The age of white guilt: and the disappearance of the black individual (Harper's, November 1999), by Shelby Steele:

The greatest problem in coming from an oppressed group is the power the oppressor has over your group. The second greatest problem is the power your group has over you. Group identity in oppressed groups is always very strategic, always a calculation of advantage. The humble black identity of the Booker T. Washington era–“a little education spoiled many a good plow hand”–allowed blacks to function as tradesmen, laborers, and farmers during the rise of Jim Crow, when hundreds of blacks were being lynched yearly. Likewise, the black militancy of the late sixties strategically aimed for advantage in an America suddenly contrite over its long indulgence in racism.

H/t Nina Paley.

One’s group identity is always a mask–a mask replete with a politics. When a teenager in East Los Angeles says he is Hispanic, he is thinking of himself within a group strategy pitched at larger America. His identity is related far more to America than to Mexico or Guatemala, where he would not often think of himself as Hispanic. In fact, “Hispanic” is much more a political concept than a cultural one, and its first purpose is to win power within the fray of American identity politics. So this teenager must wear the mask that serves his group’s ambitions in these politics.

Identity in this sense is differential, not intrinsic.

Structuralists behaving badly at Johns Hopkins

The Quarterly Conversation has just published an essay excerpted from Cynthia L. Haven, Evolution of Desire: A Life of René Girard (Michigan St. U. Press 2018). Here's a passage from the excerpt about the response to Lacan's mysterious send-up of Freud, “Of structure as an inmixing of an otherness prerequisite to any subject whatsoever.”:

[Angus] Fletcher called him out in his first comment: “Freud was really a very simple man,” he explained. “He didn’t try to float on the surface of words. What you’re doing is like a spider: you’re making a very delicate web without any human reality in it . . . All this metaphysics is not necessary. The diagram was very interesting, but it doesn’t seem to have any connection with the reality of our actions, with eating, sexual intercourse, and so on.” At least, those are the heavily edited words from The Structuralist Controversy, which don’t capture the hysteria and pandemonium (Donato’s careful hand would rework this section).

At the event itself, rather than Donato’s diplomatic recreation of it, Fletcher’s voice had taken on an accusatory tone—“Vous, vous monsieur . . .” He attacked in a British-inflected French, while Lacan insisted on replying in his inadequate English. “Lacan was enjoying every bit of this. He was like a Cheshire cat,” said Macksey. “Angus just went ballistic.”

“I should have been aware, and wasn’t, the state that Angus was in. Angus is a very bright guy.” Elsewhere in the room, Girard was “trying to climb under the chair, it was so embarrassing,” he said. “René felt we owed something to the Ford Foundation, from whom all blessings flow . . . I would watch him. He was the senior member of the troika—at moments, he seemed to be thinking that the wheels had come off and we were rolling downhill.” Girard’s concern regarding the Ford Foundation was understandable, since “Lacan particularly set Peter [Caw]’s teeth on edge”—perhaps from the moment of the big bear hug.

Macksey felt that the microphone had to be kept from Wilden at all costs. Someone passed the mic to Wilden nevertheless. “At that point Tony lit into Lacan, saying this was your great opportunity, this was your first exposure in the United States. All you had to do was just talk your language and you don’t know diddly squat about the English language.” Goldmann jumped into the melée, attacking Lacan on procedural grounds as well.

The Structuralist Controversy gives little indication of this discord—remarks were toned down later to reduce the decibel level, and much of the action was between the lines, anyway. According to Macksey, “It got to be about midnight and things were just going on wildly and Rosolato says, ‘Oh, he always does this to me. He schedules me to talk right after him and then there’s no time.’ So Nicolas Ruwet, who is a Belgian linguist, read a paper that I thought was more exact and applied overt structuralism more than most of the people who were participating had done. But nobody paid attention to that paper. Alas.”

Back at the Belvedere, Lacan started calling everyone in Paris—Lévi-Strauss and Malraux among them—giving his version of the events. Eventually, he would run up a $900 phone bill from the hotel. “People in Paris thought a small revolution had occurred,” said Macksey. But the revolution would take place the next day.

That revolution came on the last day of the symposium, when Derrida delivered the paper that "deconstructed" structuralism.

H/t 3QD.

Should I refuse to watch a movie because Harvey Weinstein was involved?

Alas, I'm SOL because I've seen many films he's produced. But going forward?

The New York Times has a short story about a publicly searchable database, Rotten Apples, that "informs users which films or television shows are connected to those accused of sexual harassment or worse." You enter the name of a movie or show and it returns a list of people associated with the project who have allegations against them. "Each result is linked to a news article about the accusations." If there are no allegations, you'll be told that. So, I searched on "Kill Bill" and "Pulp Fiction" (both of which I've seen) and sure enough, Weinstein's name popped up.

The tool was created by one Hal Wagman and three others.

The tool is purely informational and is not intended to condemn entire projects, said Mr. Wagman, who likened it to Rotten Tomatoes and IMDB.

“We’re definitely not advocating for boycotting anyone’s films,” he said. The team instead wants the tool to help people make “ethical media consumption decisions.”

Bekah Nutt, a user-experience designer at Zambezi and a team member, hopes that the tool can shed light on how pervasive the problem of sexual misconduct is.

“It became interesting to think about the wide-reaching careers of those facing allegations,” she said. “Every article would spotlight the big projects everyone knows about.” This tool, she said, allows users to see “the full-range of their careers.”

What's an ethical media consumption decision?

Salma Hayek just published an article in the NYTimes about the hell Weinstein put her through to make Frida, about the life of Frida Kahlo. I've never seen the film, but it is the kind of film that interests me. Should I refuse to watch it because Weinstein brokered the deal that made the film possible? What of the work Hayek put into the film despite horrific harassment from Weinstein, and the work of the director, Julie Taymor, and all the others involved with the project? Should I refuse to see their work because of its unfortunate association with Weinstein?

Peer review is known to be ineffective in the sciences

So why is the practice continued? Writing in Times Higher Education, Les Hatton and Gregory Warr identify various problems and then observe:

We are not the first to identify these problems, so we might ask why peer review retains its essentially unassailable status. We suggest a two-fold answer rooted more in socio-economic factors than the dispassionate review of scientific research.

First, peer review is self-evidently useful in protecting established paradigms and disadvantaging challenges to entrenched scientific authority. Second, peer review, by controlling access to publication in the most prestigious journals helps to maintain the clearly recognised hierarchies of journals, of researchers, and of universities and research institutes. Peer reviewers should be experts in their field and will therefore have allegiances to leaders in their field and to their shared scientific consensus; conversely, there will be a natural hostility to challenges to the consensus, and peer reviewers have substantial power of influence (extending virtually to censorship) over publication in elite (and even not-so-elite) journals.

Yep, sounds like the humanities (see my post Rejected @NLH! Part 4: Déjà vu all over again at New Literary History + Welcome to the club, Franco! [#DH #Canon/Archive]).

Wednesday, December 13, 2017

Teaching World Literature

More to the point, world literature is a phenomenon of the southern United States. The 11 southern states contained only 14 percent of the nation’s population, but they accounted for half of our adopters. True, there were world literature courses that didn’t use any anthology, while others might assign one of our rivals and therefore wouldn’t show up in our statistics. But despite these caveats (Norton has over 80 percent market share), it was clear that world literature was thriving in the South, unsettling any easy generalization about red states and blue.

The popularity of world literature in the South was so surprising to me -- and to pretty much everyone I have talked to -- that I decided to visit some of our adopters. When I asked them why their institutions were so invested in world literature, they explained that while many coastal elite universities had given up on Great Books courses during the canon wars, the more conservative southern colleges had held onto them. But gradually those institutions transformed what originally would have been Western literature courses into world literature courses. (This account dovetailed with another result from the surveys: a separate anthology of Western literature was losing adopters, and we have since decided to phase it out).

More than fiction:

The interests of students in the South also dovetail with another feature of world literature: the importance of religious texts. Our current understanding of literature as fiction is recent. Anthologies of world literature, which cover 4,000 years, use a much wider definition -- namely, significant writing, including religious, philosophical and political texts. The Buddha and Socrates are as important as Virgil or Shakespeare.

H/t Jonathan Goodwin:

Professors in Alabama are often lady huntresses, but they teach world literature. An unexpected surprise. A scholar changes his views: https://t.co/dAhrvAD0Zr— Jonathan Goodwin (@joncgoodwin) December 13, 2017

The problem of state capacity in India

Lant Pritchett famously labelled India a flailing state—one where “the head, that is the elite institutions at the national (and in some states) level remain sound and functional but that this head is no longer reliably connected via nerves and sinews to its own limbs.”

Pritchett’s diagnosis of the Indian malady has been interpreted by many scholars as a problem of institutional manpower and institutional design. There is a new revival of discussions on state capacity to execute plans, and a new focus on redesigning and staffing public institutions. [...] This problem of state capacity has an element of truth and urgency. Almost all of India’s governance problems can find links to the lack of manpower in state services. [...]

This crisis in state capacity cannot be solved anytime soon. Though India’s population, especially the youth, should be in line for these jobs, there are two major problems. First is the old problem of state budgets. India has a very small tax base, with a minuscule fraction of its citizens paying income tax. There needs to be a reduction in government spending in other areas and an increase in revenue to support the much needed manpower. Second, the Indian workforce is not skilled enough to be recruited for these jobs.

However, perhaps the state can be trimmed back:

An alternative interpretation of Pritchett’s famous diagnosis is that with flailing limbs, perhaps the head can issue fewer commands, and engage in fewer actions. Essentially, both streamlining and shrinking the ambit of the regulatory state to a size that can actually be effectively enforced. The size of the Indian state in terms of its manpower may be small, but its size in terms of regulation is gigantic, and most of this regulation is either unenforced, or selectively and perniciously enforced.

Sometimes seeing is hearing

Heather Murphy in the NYTimes:

This week, in an improbable turn of events, the sound of silence went viral.

An animated GIF showing an electrical tower jumping rope over delightfully bendy power lines began to spread. The frenzy started when Lisa Debruine, a researcher at the Institute of Neuroscience and Psychology at the University of Glasgow, posed this question:

Does anyone in visual perception know why you can hear this gif? pic.twitter.com/mcT22Lzfkp— 𝙻𝚒𝚜𝚊 𝙳𝚎𝙱𝚛𝚞𝚒𝚗𝚎 🏳️🌈 (@lisadebruine) December 2, 2017

When she asked Twitter users in an unscientific survey whether they could hear the image — which actually lacks sound, like most animated GIFs — nearly 70 percent who responded said they could.

Once you “heard” it, it was hard not to start noticing that other GIFs also seemed to be making noise — as if the bouncing pylon had somehow jacked up the volume on a cacophonous orchestra few had noticed before.

It turns out that this phenomenon has a name, visual-evoked auditory response, or vEAR, and has been under investigation:

The ability to “vEAR” is not limited to scenes where one would expect to hear a noise, they say. One lab study found that more than 20 percent of people could hear flashing lights in silent videos. A range of motions, abstract patterns and even colors evoke sound for some.

The act of hearing a visual highlights the trippy fact that our senses do not operate the way we often assume, with crisp boundaries between them. Smelling, hearing and tasting all “speak to each other and influence each other, so little things like the color of the plate you’re eating on can influence how food tastes,” said Mr. Fassnidge.

We know, in general, that our senses are constantly involved in predicting, or "guesstimating," what's next. In the case of vEARing the guesstimation crosses sensory borders, so that we hear a sound where there is, in fact, none, but where there should or could be one.

“Using electrical brain stimulation, we have also found tentative signs that visual and auditory brain areas cooperate more in people with vEAR, while they tend to compete with each other, in non-vEAR people,” Dr. Freeman said in an email. “So people who claim to hear visual motion have brains that seem to work slightly differently.”

Individuals with frequent or advanced vEARing may have a form of “synesthesia,” a neurological phenomenon in which one sense feeds into another, he said. In other types of synesthesia, sounds might be linked to colors or words with tastes.

Tuesday, December 12, 2017

From distant reading to computational criticism: Canon/Archive @ 3QD [#DH]

As far as I can tell literary studies will remain committed to pre-computational intellectual formations for the foreseeable future and will do so from a position of quasi-aristocratic superiority over crass calculation, of which it will remain fitfully ignorant.

One might say that my participation in the academic blogosphere is framed, for the moment, by engagement with the work of Franco Moretti. It began with a so-called book event at The Valve, a now dormant group blog I joined late in 2005. Jonathan Goodwin had organized an online symposium about Moretti’s Graphs, Maps, Trees. It went live on the web on January 2, 2006 and had contributions by 15 people. Eight of us were Valve contributors: John Holbo, Ray Davis, Matt Greenfield, Amardeep Singh, Adam Roberts, Bill Benzon, Jonathan Goodwin, and Sean McCann. There were guest appearances by seven: Franco Moretti, Matthew Kirschenbaum, Timothy Burke, Eric Hayot, Steven Berlin Johnson, Jenny Davidson, and Cosma Shalizi.

I have no idea how many people contributed comments to those discussions, which unfolded over a period of three weeks. Most, but not all, of these authors held academic posts (I did not). Two, I believe, did not have doctorates. The commenters came from all over the place and I wouldn’t hazard a guess about their backgrounds. Some were academics, I’m sure, and some were not. The symposium eventually became a (self-published) book: Jonathan Goodwin and John Holbo, eds., Reading Graphs, Maps, and Trees: Responses to Franco Moretti (Glassbead Books 2009).

That was over a decade ago. It was a collective event that took place outside the ordinary confines of the academic world – my use of confines is, of course, quite deliberate.

At the time Moretti was talking about distant reading. He had not yet become involved with computing and, necessarily, with people who knew how to program. He formed the Stanford Literary Lab in 2010 with Matthew Jockers, who had the computer skills Moretti lacked. The Lab issued its first pamphlet in, I believe, January of 2011: Quantitative Formalism: an Experiment. It was signed by Moretti and four others: Sarah Allison, Ryan Heuser, Matthew Jockers, and Michael Witmore. The most recent pamphlet, no. 16, was issued in November of this year: Totentanz. Operationalizing Aby Warburg’s Pathosformeln, by Leonardo Impett and Franco Moretti.

That first pamphlet had been submitted to a prestigious journal but was turned down in terms that suggested that the intellectual method itself was being rejected, not just that particular argument. And so the group decided to sidestep the world of formal academic publication and publish its work in the form of stand-alone pamphlets. This is common enough in technical disciplines, where work will be published in the form of technical reports – though that work will sometimes/often then be published in journal form as well; but it was unheard of in the humanities.

My point, then, is that the literary lab has worked at the edge of the academic world. It is located at a prestigious university, and its pamphlets bear the imprimatur of that university. But they do not exist in the world of formal academic publication.

Moretti, who is now retired from Stanford, and the lab have now gathered eleven of those pamphlets into a book:

Canon/Archive: Studies in Quantitative Formalism by Franco Moretti (Author, Editor), Mark Algee-Hewitt, Sarah Allison, Marissa Gemma, Ryan Heuser, Matthew Jockers, Holst Katsma, Long Le-Khac, Dominique Pestre, Erik Steiner, Amir Tevel, Hannah Walser, Michael Witmore, and Irena Yamboliev, published by n+1.

Note the term, quantitative formalism. Moretti now refers to this approach as computational criticism rather than distant reading, though some in the field use the latter term.

I have written an essay/review about the book and published it in 3 Quarks Daily, December 11, 2017, which is not an academic journal: Moretti and the Stanford Literary Lab: Computational criticism in two senses and the prospect of a new approach to literary studies.

The epigraph to this post is the final sentence of that essay/review.

Will the future of literary studies unfold outside departments of literature and their associated societies and journals?

More later.

"To the moon", Trump said, "and then Mars"

I don't quite know what I think of this (NYTimes):

WASHINGTON — At a time when China is working on an ambitious lunar program, President Donald Trump vowed on Monday that the United States will remain the leader in space exploration as he began a process to return Americans to the moon.

"We are the leader and we're going to stay the leader, and we're going to increase it many fold," Trump said in signing "Space Policy Directive 1" that establishes a foundation for a mission to the moon with an eye on going to Mars.

"This time, we will not only plant our flag and leave our footprint, we will establish a foundation for an eventual mission to Mars," Trump said. "And perhaps, someday, to many worlds beyond."

Back in June, China's space official said the country was making “preliminary” preparations to send a man to the moon, the latest goal in China’s ambitious lunar exploration program.

Trump's signing ceremony for the directive included former lunar astronauts Buzz Aldrin and Harrison Schmitt and current astronaut Peggy Whitson, whose 665 days in orbit is more time in space than any other American and any other woman worldwide. [...]

"And space has so much to do with so many other applications, including a military application," he said without elaboration.

The militarization of space? No. Though obviously that's already started with spy satellites in earth orbit.

Space exploration is expensive, and Trump wants to give a big fat tax break to his wealthy buddies. No.

And space ought to belong to all humankind and not be the object of nationalist competition.

Still, tentatively, Yes.

See my post, A Child of the Space Age.
