Tuesday, August 30, 2022

A note on AGI as concept and as shibboleth [kissing cousin to singularity]

Artificial intelligence was ambitious from its beginnings in the mid-1950s; this or that practitioner would confidently predict that before long computers could perform any mental activity that humans could. As a practical matter, however, AI systems tended to be narrowly focused. The field’s grander ambitions seemed ever in retreat. Then, at long last, one of those grand ambitions was realized. In 1997 IBM’s Deep Blue beat world champion Garry Kasparov in chess.

But that was only chess. Humans remained ahead of computers in all other spheres and AI kept cranking out narrowly focused systems. Why? Because cognitive and perceptual competence turned out to require detailed procedures. The only way to accumulate the necessary density of detail was to focus on a narrow domain.

Meanwhile, in 1993 Vernor Vinge delivered a paper, The Coming Technological Singularity, at a NASA symposium and then published it in The Whole Earth Review:

Progress in hardware has followed an amazingly steady curve in the last few decades. Based on this trend, I believe that the creation of greater-than-human intelligence will occur during the next thirty years. (Charles Platt has pointed out that AI enthusiasts have been making claims like this for thirty years. Just so I'm not guilty of a relative-time ambiguity, let me be more specific: I'll be surprised if this event occurs before 2005 or after 2030.)

What are the consequences of this event? When greater-than-human intelligence drives progress, that progress will be much more rapid. In fact, there seems no reason why progress itself would not involve the creation of still more intelligent entities – on a still-shorter time scale. The best analogy I see is to the evolutionary past: Animals can adapt to problems and make inventions, but often no faster than natural selection can do its work – the world acts as its own simulator in the case of natural selection. We humans have the ability to internalize the world and conduct what-if’s in our heads; we can solve many problems thousands of times faster than natural selection could. Now, by creating the means to execute those simulations at much higher speeds, we are entering a regime as radically different from our human past as we humans are from the lower animals.

This change will be a throwing-away of all the human rules, perhaps in the blink of an eye – an exponential runaway beyond any hope of control. Developments that were thought might only happen in “a million years” (if ever) will likely happen in the next century.

That got people’s attention, at least in some tech-focused circles, and provided a new focal point for thinking about artificial intelligence and the future. It’s one thing to predict and hope for intelligent machines which will do this, that, and the other. But rewriting the nature of history, top to bottom, that’s something else again.

In the mid-2000s Ben Goertzel and others felt the need to rebrand AI in a way more suited to the grand possibilities that lay ahead. Goertzel noted:

In 2002 or so, Cassio Pennachin and I were editing a book on approaches to powerful AI, with broad capabilities at the human level and beyond, and we were struggling for a title. The provisional title was “Real AI” but I knew that was too controversial. So I emailed a bunch of friends asking for better suggestions. Shane Legg, an AI researcher who had worked for me previously, came up with Artificial General Intelligence. I didn’t love it tremendously but I fairly soon came to the conclusion it was better than any of the alternative suggestions. So Cassio and I used the term for the book title (the book “Artificial General Intelligence” was eventually published by Springer in 2005), and I began using it more broadly.

Goertzel realized the term had its limitations. “Intelligence” is a vague idea and, whatever it means, “no real-world intelligence will ever be totally general.” Still:

It seems to be catching on reasonably well, both in the scientific community and in the futurist media. Long live AGI!

My point, then, is that the term did not refer to a specific technology or set of mechanisms. It was an aspirational term, not a technical one.

And so it remains. As Jack Clark tweeted a few weeks ago:

Discussions about AGI tend to be pointless as no one has a precise definition of AGI, and most people have radically different definitions. In many ways, AGI feels more like a shibboleth used to understand if someone is in- or out-group wrt some issues.

— Jack Clark (@jackclarkSF) August 6, 2022

For that matter, “the Singularity” is a shibboleth as well. The two of them tend to travel together.

The impulse behind AGI is to rescue AI from its narrow concerns and focus our attention on grand possibilities for the future.

Cab Calloway and his band on the road [railroad]

Photographic print of Cab Calloway and his band in a sleeper car, 1933 #openaccess #museumarchive https://t.co/yzXR22JyPQ pic.twitter.com/B2dy5KkdW9

— SI: Museum of African American History (Bot) (@si_nmaahc) August 28, 2022

Audiences and critics are now disagreeing about blockbusters

Fans and critics agree more often than not. The average difference in their ratings is about 5 points.

This year? It is 19. Here are the 10 highest-grossing movies of 2022. https://t.co/y5OwrrQm9C pic.twitter.com/2d41uk1JY4

— Lucas Shaw (@Lucas_Shaw) August 28, 2022

FWIW there have been years where critics liked movies as much if not more than fans.

In 2005, the 10 biggest movies got higher scores from critics than fans. pic.twitter.com/JVtLPUuf9x

— Lucas Shaw (@Lucas_Shaw) August 28, 2022

Lots more in this week's newsletter, including methodology and the one year fans/critics were in near total agreement about blockbusters. Read it if you like movies, or just like arguing.

And if you dig it, you can always subscribe --> https://t.co/aqvwunFRqC

— Lucas Shaw (@Lucas_Shaw) August 28, 2022

Monday, August 29, 2022

My current thoughts on AI Doom as a cult(ish) phenomenon

I’ve been working on an article in which I argue that belief in the likelihood that future AI poses an existential risk to humanity – AI Doom – has become the focal point of cult behavior. I’m trying to figure out how to frame the argument. Which is to say, I’m trying to figure out what kind of an argument to make.

This belief has two aspects: 1) human level artificial intelligence, aka artificial general intelligence (AGI), is inevitable, and may well, very likely will, inevitably will lead to superintelligence, and 2) this intelligence will very likely turn on us, either deliberately or inadvertently. If both of those are true, then belief in AI Doom is rational. If neither is true, then belief in AI Doom is mistaken. Cult behavior, then, is undertaken to justify and support these mistaken beliefs.

That last sentence is the argument I want to make. I don’t want to actually argue that the underlying beliefs are mistaken. I wish to assume that. That is, I assume that on this issue our ability to predict the future is dominated by WE DON’T KNOW.

Is that a reasonable assumption? That is to say, I don’t believe that those ideas are a reasonable way to confront the challenges posed by AI. And I’m wondering what motivates such maladaptive beliefs.

On the face of it such cult behavior is an attempt to assert magical control over phenomena which are beyond our capacity to control. It is an admission of helplessness.

* * * * *

I drafted the previous paragraphs on Friday and Saturday (August 26th and 27th) and then dropped it because I didn’t quite know what I was up to. Now I think I’ve figured it out.

Whether or not AGI and AI Doom are reasonable expectations for the future, that’s one issue, and it has its own set of arguments. That a certain (somewhat diffuse) group of people have taken those ideas and created a cult around them is a different issue. And that’s the argument I want to make. In particular, I’m not arguing that those people are engaging in cult behavior as a way of arguing against AGI/AI Doom. That is, I’m not trying to discredit believers in AGI/AI Doom as a way of discrediting AGI/AI Doom. I’m quite capable of arguing against AGI without saying anything at all about the people.

As far as I know, the term “artificial general intelligence” didn’t come into use until the mid-2000s, and focused concern about rogue AI began consolidating in the subsequent decade, well after the first two Terminator films (1984, 1991). It got a substantial boost with the publication of Nick Bostrom’s Superintelligence: Paths, Dangers, Strategies in 2014, which popularized the (in)famous paperclip scenario, in which an AI tasked with creating as many paperclips as possible proceeds to convert the earth's surface into paperclips.

One can believe in AGI without being a member of a cult and one can fear future AI without being a member of a cult. Just when these beliefs became cultish, that’s not clear to me. What does that mean, became cultish? It means, or implies, that people adopt those beliefs in order to join the group. But how do we tell that that has happened? That’s tricky and I’m not sure.

* * * * *

I note, however, that the people I’m thinking about – you’ll find many of them hanging out at places like LessWrong, Astral Codex Ten, and Open Philanthropy – tend to treat the arrival of AGI as a natural phenomenon, like the weather, over which they have little control. Yes, they know that the technology is created by humans, many of whom are their friends; they may themselves be actively involved in AI research; and, yes, they want to slow AI research and influence it in specific ways. But they nonetheless regard the emergence of AGI as inevitable. It’s ‘out there’ and is happening. And once it emerges, well, it’s likely to go rogue and threaten humanity.

The fact is the idea of AGI is vague, and, whatever it is, no one knows how to construct it. There’s substantial fear that it will emerge through scaling up of current machine learning technology. But no one really knows that or can explain how it would happen.

And that’s what strikes me as so strange about the phenomenon, the sense of helplessness. All they can do is make predictions about when AGI will emerge and sound the alarm to awaken the rest of us, but if that doesn’t work, we’re doomed.

How did this come about? Why do these people believe that? Given that so many of these people are actively involved in creating the technology – see this NYTimes article from 2018 in which Elon Musk sounds the alarm while Mark Zuckerberg dismisses his fears – one can read it as a narcissistic over-estimation of their intellectual prowess, but I’m not sure that covers it. Or perhaps I know what the words mean, but what the assertion means, I don’t understand it very well. I mean, if it’s narcissism, it’s not at all obvious to me that Zuckerberg is less narcissistic than Musk. To be sure, that’s just two people, but many in Silicon Valley share their views.

Of course, I don’t have to explain why in order to make the argument that we’re looking at cult behavior. That’s the argument I want to make. How to make it?

More later.

Sunday, August 28, 2022

Elemental Cognition is ready to deploy hybrid AI technology in practical systems

David Ferrucci is best known as the researcher who led the team that developed IBM's Watson, which beat the best human players of Jeopardy in 2011. He left IBM a year later and formed his own company, Elemental Cognition, in 2015. Elemental Cognition is taking a hybrid approach, combining aspects of machine learning and symbolic computation.

Elemental Cognition has recently developed a system that helps people plan and book round-the-world airline tickets:

The round-the-world ticket is a project for oneworld, an alliance of 13 airlines including American Airlines, British Airways, Qantas, Cathay Pacific and Japan Airlines. Its round-the-world tickets can have up to 16 different flights with stops of varying lengths over the course of a year.

Elemental Cognition supplies the technology behind a trip-planning intelligent agent on oneworld’s website. It was developed over the past year and introduced in April.

The user sees a global route map on the left and a chatbot dialogue begins on the right. A traveler starting from New York types in the desired locations — say, London, Rome and Tokyo. “OK,” replies the chatbot, “I have added London, Rome and Tokyo to the itinerary.”

Then, the customer wants to make changes — “add Paris before London,” and “replace Rome with Berlin.” That goes smoothly, too, before the system moves on to travel times and lengths of stays in each city.

Rob Gurney, chief executive of oneworld, is a former Qantas and British Airways executive familiar with the challenges of online travel planning and booking. Most chatbots are rigid systems that often repeat canned answers or make irrelevant suggestions, a frustrating “spiral of misery.”

Instead, Mr. Gurney said, the Elemental Cognition technology delivers a problem-solving dialogue on the fly. The rates of completing an itinerary online are three to four times higher than without the company’s software.

Elemental Cognition has developed an approach that all but eliminates the hand-coding typical of symbolic A.I.:

For example, the rules and options for a global airline ticket are spelled out in many pages of documents, which are scanned.

Dr. Ferrucci and his team use machine learning algorithms to convert them into suggested statements in a form a computer can interpret. Those statements can be facts, concepts, rules or relationships: Qantas is an airline, for example. When a person says “go to” a city, that means add a flight to that city. If a traveler adds four more destinations, that adds a certain amount to the cost of the ticket.

In training the round-the-world ticket assistant, an airline expert reviews the computer-generated statements, as a final check. The process eliminates most of the need for hand coding knowledge into a computer, a crippling handicap of the old expert systems.
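To make the quoted description a little more concrete, here is a minimal sketch in Python of what machine-readable statements of that kind might look like. It is purely illustrative: the names FACTS and apply_command, the triple layout, and the three command patterns are hypothetical and do not come from Elemental Cognition or from the article. It also assumes one-word city names.

```python
# Hypothetical illustration only; not Elemental Cognition's actual representation.

# A fact, stored as a (subject, relation, object) triple.
FACTS = {("Qantas", "is_a", "airline")}


def apply_command(itinerary, command):
    """Return a new itinerary after applying one user command.

    Command forms assumed for this sketch (one-word city names only):
      "go to CITY", "add CITY before CITY", "replace CITY with CITY"
    """
    words = command.split()
    result = list(itinerary)
    if len(words) == 3 and words[:2] == ["go", "to"]:
        # Rule from the article: "go to" a city means add a flight to that city.
        result.append(words[2])
    elif len(words) == 4 and words[0] == "add" and words[2] == "before":
        result.insert(result.index(words[3]), words[1])
    elif len(words) == 4 and words[0] == "replace" and words[2] == "with":
        result[result.index(words[1])] = words[3]
    else:
        raise ValueError(f"unrecognized command: {command!r}")
    return result


# The dialogue described in the article, replayed against the sketch.
trip = ["London", "Rome", "Tokyo"]
trip = apply_command(trip, "add Paris before London")
trip = apply_command(trip, "replace Rome with Berlin")
print(trip)  # ['Paris', 'London', 'Berlin', 'Tokyo']
```

In the process the article describes, statements like these are suggested by machine learning from the rule documents and then reviewed by an airline expert. Here they are simply written by hand.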

There's more at the link.

* * * * *

Lex Fridman interviews David Ferrucci (2019).

0:00 - Introduction

1:06 - Biological vs computer systems

8:03 - What is intelligence?

31:49 - Knowledge frameworks

52:02 - IBM Watson winning Jeopardy

1:24:21 - Watson vs human difference in approach

1:27:52 - Q&A vs dialogue

1:35:22 - Humor

1:41:33 - Good test of intelligence

1:46:36 - AlphaZero, AlphaStar accomplishments

1:51:29 - Explainability, induction, deduction in medical diagnosis

1:59:34 - Grand challenges

2:04:03 - Consciousness

2:08:26 - Timeline for AGI

2:13:55 - Embodied AI

2:17:07 - Love and companionship

2:18:06 - Concerns about AI

2:21:56 - Discussion with AGI

Saturday, August 27, 2022

Jazz has been blamed for many things [Twitter]

Warts on the feet pic.twitter.com/OJj8SSHd49

— Paul Fairie (@paulisci) August 25, 2022

Bad teeth pic.twitter.com/I6jOuiWmTO

— Paul Fairie (@paulisci) August 25, 2022

There are more tweets in the stream. Here's the last one in the original thread, quoting Paul Whiteman, known as "The King of Jazz" in his time (the 1920s and 1930s).

Everything pic.twitter.com/Y7HOlU0LLr

— Paul Fairie (@paulisci) August 25, 2022

And then there are these in the comments, among many others:

The Jazz Problem, August 1924 pic.twitter.com/4GD7ITtkD3

— Mark W. (@DurhamWASP) August 26, 2022

Don't forget the anti-Semitic white supremacist Henry Ford tried to ban jazz claiming it caused unsociability, sensuality, infantilism, & was moral poison (& claimed Jews were behind it). Citation: Emery Warnock, "The Anti-Semitic Origins of Henry Ford's Arts Education Patronage" pic.twitter.com/gfgNItHAo1

— Brock Bahler (he/him) 🌻 (@brockbahler) August 26, 2022

H/t Tyler Cowen.

As a point of reference, see my post, Official Nazi Policy Regarding Jazz.

Friday, August 26, 2022

Sex among the Aka, hunter-gatherers of the Central African Republic [sex is work of the night]

2. The Aka report having sex about 3 times a night, with some days of rest between (all data are self-reported). Here, these frequencies are converted to weekly for comparison with neighboring Ngandu farmers and the US: pic.twitter.com/EVIprY0hkv

— Ed Hagen (@ed_hagen) August 26, 2022

3. The Aka and Ngandu both report that having sex is mainly to have children, which warms my sociobiological heart: pic.twitter.com/hwTAnO0jdZ

— Ed Hagen (@ed_hagen) August 26, 2022

There are seven more tweets in the stream.

What’s Stand-Up Like? [from the inside]

Thursday, August 25, 2022

PowerPoint Assistant: Augmenting End-User Software through Natural Language Interaction

This links to a set of notes that I first wrote up in 2003 or 2004, somewhere in there, but didn't post to the web until 2015. It calls for a natural language interface with end-user software that could be easily extended and modified through natural language. The abstract talks of hand-coding a basic language capability. I wrote these notes before machine learning had become so successful. These days hand-coding might be dispensed with – notice that I said "might." In any event, a young company called Adept has recently started up with something like that in mind:

True general intelligence requires models that can not only read and write, but act in a way that is helpful to users. That’s why we’re starting Adept: we’re training a neural network to use every software tool and API in the world, building on the vast amount of existing capabilities that people have already created.

In practice, we’re building a general system that helps people get things done in front of their computer: a universal collaborator for every knowledge worker. Think of it as an overlay within your computer that works hand-in-hand with you, using the same tools that you do.

* * * * *

Another working paper at Academia.edu: https://www.academia.edu/14329022/PowerPoint_Assistant_Augmenting_End_User_Software_through_Natural_Language_Interaction

Abstract, contents, and introduction below, as usual.

Abstract: This document sketches a natural language interface for end user software, such as PowerPoint. Such programs are basically worlds that exist entirely within a computer. Thus the interface is dealing with a world constructed with a finite number of primitive elements. You hand-code a basic language capability into the system, then give it the ability to ‘learn’ from its interactions with the user, and you have your basic PPA.

C O N T E N T S

Introduction: Powerpoint Assistant, 12 Years Later 1

Metagramming Revisited 3

PowerPoint Assistant 5

Plausibility 5

PPA In Action 6

The User Community 12

Generalization: A new Paradigm for Computing 13

Appendix: Time Line Calibrated against Space Exploration 15

Introduction: Powerpoint Assistant, 12 Years Later

I drafted this document over a decade ago, after I’d been through an intense period in which I re-visited work I’d done in the mid-to-late 1970s and early 1980s and reconstructed it in new terms derived jointly from Sydney Lamb’s stratificational linguistics and Walter Freeman’s neurodynamics. The point of that work was to provide a framework – I called it “attractor nets” – that would accommodate both the propositional operations of ‘good old-fashioned artificial intelligence’ (aka GOFAI) and the more recent statistical style. Whether or not or just how attractor nets would do that, well, I don’t really know.

But I was excited about it and wondered what the practical benefit might be. So I ran up a sketch of a natural language front-end for Microsoft PowerPoint, a program I use quite a bit. That sketch took the form of a set of hypothetical interactions between a user, named Jasmine, and the PowerPoint Assistant (PPA) along with some discussion.

The important point is that software programs like PowerPoint are basically closed worlds that exist entirely within a computer. Your ‘intelligent’ system is dealing with a world constructed with a finite number of primitive elements. So you hand-code a basic language capability into the system, then give it the ability to ‘learn’ from its interactions with the user, and you have your basic PPA.

That’s what’s in this sketch, along with some remarks about networked PPAs sharing capabilities with one another. And that’s as far as I took matters. That is to say, that is all I had the capability to do.

For what it’s worth, I showed the document to Syd Lamb in 2003 or 2004 and he agreed with me that something like that should be possible. We were stumped as to just why no one had done it. Perhaps it simply hadn’t occurred to anyone with the means to do the work. Attention was focused elsewhere.

Since then a lot has changed. IBM’s Watson won at Jeopardy and more importantly is being rolled out in commercial use. Siri ‘chats’ with you on your iPhone.

And some things haven’t changed. Still no PPA, nor a Photoshop Assistant either. Is it really that hard to do? Are the Big Boys and Girls just distracted by other things? It’s not as though programs like PowerPoint and Photoshop serve niche markets too small to support the recoupment of development costs.

Am I missing something, or are they?

So I’m putting this document out there on the web. Maybe someone will see it and do something about it. Gimme’ a call when you’re ready.

Wednesday, August 24, 2022

Beyond linear regression: mapping models in cognitive neuroscience should align with research goals

In short, if you plan to train an encoding/decoding model of the brain, you should determine which properties of your mapping are essential to your research question. Does it need to be simple? Biologically plausible? Explainable in terms of neuro/psych terms?

— Anna Ivanova (@neuranna) August 24, 2022

If you're going to #ccneuro22, stop by @leylaisi's poster presenting our work!

— Anna Ivanova (@neuranna) August 24, 2022

Many thanks to @CogCompNeuro GAC organizers for support with this project (@meganakpeters @GunnarBlohm)

Abstract of linked article:

Many cognitive neuroscience studies use large feature sets to predict and interpret brain activity patterns. Feature sets take many forms, from human stimulus annotations to representations in deep neural networks. Of crucial importance in all these studies is the mapping model, which defines the space of possible relationships between features and neural data. Until recently, most encoding and decoding studies have used linear mapping models. Increasing availability of large datasets and computing resources has recently allowed some researchers to employ more flexible nonlinear mapping models; however, the question of whether nonlinear mapping models can yield meaningful scientific insights remains debated. Here, we discuss the choice of a mapping model in the context of three overarching desiderata: predictive accuracy, interpretability, and biological plausibility. We show that these desiderata do not map cleanly onto the linear/nonlinear divide; instead, each desideratum can refer to multiple research goals, each of which imposes its own constraints on the mapping model. Moreover, we argue that, instead of categorically treating the mapping models as linear or nonlinear, researchers should report the complexity of these models. We show that, in many cases, complexity provides a more accurate reflection of restrictions imposed by various research goals and outline several complexity metrics that can be used to effectively evaluate mapping models.

Patterns as Epistemological Objects [2495]

Pattern-matching is much discussed these days in connection with deep learning in AI. Here's a post from July, 2014, where I discuss patterns more generally. [I was also counting down to my 2500th post. I'm now over 8600.]

* * * * *

An observer defines a pattern over objects.

A species defines a niche over the environment.

These two patterns attain reality in a different way. The forces that make the animal’s trail a real pattern are local ones having to do with the interaction between the animal and its immediate surrounding. The forces “behind” the constellations are those of the large-scale dynamics of the universe as “projected” onto the point from which the pattern is viewed.

I’m on a countdown to my 2500th post. This is number 2495.

The decline of the humanities in college majors

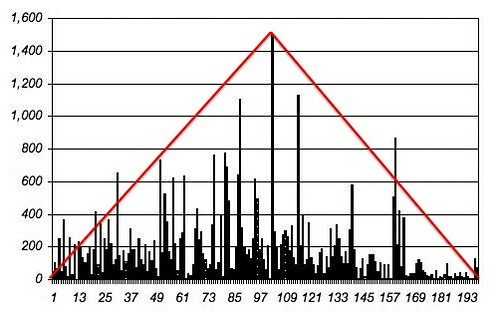

Here's the raw size of all the fields (just BAs). The downtick in cultural, ethnic, and gender studies is notable--those had been the only fields *not* to get pulled down by the collapse of humanities majors. Also sharper-than normal drops in English, Comp Lit, languages... pic.twitter.com/cbecOnl8Ls

— Benjamin Schmidt (@benmschmidt) August 23, 2022

Here's the slightly longer term shifts from 2011-2021. The total outlier of computer science's explosion is really clear here: so is the concentration of growth in fields that have clear career prospects. pic.twitter.com/Pq5nuZveqH

— Benjamin Schmidt (@benmschmidt) August 23, 2022

The downtick in humanities this year pushes up the ETA for when CS is larger than all humanities degrees together to one of the classes currently in college. pic.twitter.com/muaYNhDIe4

— Benjamin Schmidt (@benmschmidt) August 24, 2022

Tuesday, August 23, 2022

Which comes first, AGI or new systems of thought? [Further thoughts on the Pinker/Aaronson debates]

Long-time readers of New Savanna know that David Hays and I have a model of cultural evolution built on the idea of cognitive rank, systems of thought embedded in large-scale cognitive architecture. Within that context I have argued that we are currently undergoing a large-scale transformation comparable to those that gave us the Industrial Revolution (Rank 3 in our model) and, more recently, the conceptual revolutions of the first half of the 20th century (Rank 4). Thus I have suggested that the REAL singularity is not the fabled tech singularity, but the consolidation of new conceptual architectures:

Redefining the Coming Singularity – It’s not what you think, Version 2, Working Paper, November 2015, https://www.academia.edu/8847096/Redefining_the_Coming_Singularity_It_s_not_what_you_think

I had occasion to introduce this idea into the recent AI debate between Steven Pinker and Scott Aaronson. Pinker was asking for specific mechanisms underpinning superintelligence while Aaronson was offering what Steve called “superpowers.” Thus Pinker remarked:

If you’ll forgive me one more analogy, I think “superintelligence” is like “superpower.” Anyone can define “superpower” as “flight, superhuman strength, X-ray vision, heat vision, cold breath, super-speed, enhance hearing, and nigh-invulnerability.” Anyone could imagine it, and recognize it when he or she sees it. But that does not mean that there exists a highly advanced physiology called “superpower” that is possessed by refugees from Krypton! It does not mean that anabolic steroids, because they increase speed and strength, can be “scaled” to yield superpowers. And a skeptic who makes these points is not quibbling over the meaning of the word superpower, nor would he or she balk at applying the word upon meeting a real-life Superman. Their point is that we almost certainly will never, in fact, meet a real-life Superman. That’s because he’s defined by human imagination, not by an understanding of how things work. We will, of course, encounter machines that are faster than humans, and that see X-rays, that fly, and so on, each exploiting the relevant technology, but “superpower” would be an utterly useless way of understanding them.

I’ve added my comment below the asterisks.

* * * * *

I’m sympathetic with Pinker and I think I know where he’s coming from. Thus he’s done a lot of work on verb forms, regular and irregular, that involves the details of (computational) mechanisms. I like mechanisms as well, though I’ve worried about different ones than he has. For example, I’m interested in (mostly) literary texts and movies that have the form: A, B, C...X...C’, B’, A’. Some examples: Gojira (1954), the original 1933 King Kong, Pulp Fiction, Obama’s eulogy for Clementa Pinkney, Joseph Conrad’s Heart of Darkness, Shakespeare’s Hamlet, and Osamu Tezuka’s Metropolis.

What kind of computational process produces such texts and what kind of computational process is involved in comprehending them? Whatever that process is, it’s running in the human brain, whose mechanisms are obscure. There was a time when I tried writing something like pseudo-code to generate one or two such texts, but that never got very far. So these days I’m satisfied identifying and describing such texts. It’s not rocket science, but it’s not trivial either. It involves a bit of luck and a lot of detail work.
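The form itself is easy to state computationally, even if the process that produces it is not. As a minimal sketch, assuming a text has already been segmented by hand and each segment given a label, the following Python checks whether a sequence of labels has the shape A, B, C ... X ... C', B', A'. The function name is_ring_form and the convention of marking each returning segment with a trailing apostrophe are assumptions of this sketch. It says nothing about how a mind generates or comprehends such texts.

```python
def is_ring_form(labels):
    """Return True if labels read A, B, C ... X ... C', B', A'.

    Assumes an odd number of segments (at least three) with a single
    central segment X, and a trailing apostrophe on each returning segment.
    """
    labels = list(labels)
    n = len(labels)
    if n < 3 or n % 2 == 0:
        return False
    half = n // 2
    outbound = labels[:half]
    returning = labels[half + 1:]
    # The returning half must mirror the outbound half, with each label primed.
    return returning == [label + "'" for label in reversed(outbound)]


print(is_ring_form(["A", "B", "C", "X", "C'", "B'", "A'"]))  # True
print(is_ring_form(["A", "B", "X", "A'", "B'"]))             # False
```

The hard part, of course, is the step the sketch takes for granted: deciding where the segments are and which later segment answers which earlier one. That is the detail work.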

So, like Steve, I have trouble with mechanism-free definitions of AGI and superintelligence. When he contrasts defining intelligence as mechanism vs. magic, as he did earlier, I like that, as I like his current contrast between “intelligence as an undefined superpower rather than a[s] mechanisms with a makeup that determines what it can and can’t do.”

In contrast Gary Marcus has been arguing for the importance of symbolic systems in AI in addition to neural networks, often with Yann LeCun as his target. I’ve followed this debate fairly carefully, and even weighed in here and there. This debate is about mechanisms, mechanisms for computers, in the mind, for the near-term and far-term.

Whatever your current debate with Steve is about, it’s not about this kind of mechanism vs. that kind. It has a different flavor. It’s more about definitions, even, if you will, metaphysics. But, for the sake of argument I’ll grant that, sure, the concept of intellectual superpowers is coherent (even if we have little idea about how they’d work beyond MORE COMPUTE!).

With that in mind, you say:

Not only does the concept of “superpowers” seem coherent to me, but from the perspective of someone a few centuries ago, we arguably have superpowers—the ability to summon any of several billion people onto a handheld video screen at a moment’s notice, etc. etc. You’d probably reply that AI should be thought of the same way: just more tools that will enhance our capabilities, like airplanes or smartphones, not some terrifying science-fiction fantasy.

I like the way you’ve introduced cultural evolution into the conversation, as that’s something I’ve thought about a great deal. Mark Twain wrote a very amusing book, A Connecticut Yankee in King Arthur’s Court. From the Wikipedia description:

In the book, a Yankee engineer from Connecticut named Hank Morgan receives a severe blow to the head and is somehow transported in time and space to England during the reign of King Arthur. After some initial confusion and his capture by one of Arthur's knights, Hank realizes that he is actually in the past, and he uses his knowledge to make people believe that he is a powerful magician.

Is it possible that in the future there will be human beings as far beyond us as that Yankee engineer was beyond King Arthur and Merlin? It seems to me that, providing we avoid disasters like nuking ourselves back to the Stone Age, catastrophic climate change exacerbated by pandemics, and getting paperclipped by an absentminded Superintelligence, it seems to me almost inevitable that that will happen. Of course science fiction is filled with such people but, alas, has not a hint of the theories that give them such powers. But I’m not talking about science fiction futures. I’m talking about the real future. Over the long haul we have produced ever more powerful accounts of how the world works and ever more sophisticated technologies through which we have transformed the world. I see no reason why that should come to a stop.

So, at the moment various researchers are investigating the parameters of scale in LLMs. What are the effects of differing numbers of tokens in the training corpus and of parameters in the model? Others are poking around inside the models to see what’s going on in various layers. Still others are comparing the response characteristics of individual units in artificial neural nets with the response characteristics of neurons in biological visual systems. And so on and so forth. We’re developing a lot of empirical knowledge about how these systems work, and models here and there.

I have no trouble at all imagining a future in which we will know a lot more about how these artificial models work internally and how natural brains work as well. Perhaps we’ll even be able to create new AI systems in the way we create new automobiles. We specify the desired performance characteristics and then use our accumulated engineering knowledge and scientific theory to craft a system that meets those specifications.

It seems to me that’s at least as likely as an AI system spontaneously tipping into the FOOM regime and then paperclipping us. Can I predict when this will happen? No. But then I regard various attempts to predict the arrival of AGI (whether through simple Moore’s Law type extrapolation or the more heroic efforts of Open Philanthropy’s biological anchors) as mostly epistemic theater.

Monday, August 22, 2022

What’s it mean, minds are built from the inside?

I'm bumping this post from September 2014 to the top because it's my oldest post on this topic.

Sunday, August 21, 2022

Willie Nelson listened to a lot of music when he was young, even picked cotton [concordia discors]

Jody Rosen has an interesting article about Willie Nelson, who is approaching 90: Willie Nelson's Long Encore (NYTimes, Aug 17, 2022). From the article:

Abbott, a small town about 25 miles north of Waco, is where Nelson was born, in 1933. When he was 6 months old, his young parents split up, leaving Willie and his 2-year-old sister, Bobbie, in the care of their paternal grandparents. Nelson sees this as a stroke of good fortune. His grandparents, Nancy and Alfred — “Mama and Daddy Nelson” — were devoted and conscientious caretakers. They were also musicians. Mama gave singing lessons from home; Daddy, a blacksmith, played guitar. By the time Willie was 6, he had his first six-string and was learning to play chords and write songs. Bobbie was a piano prodigy who seemed to instantly assimilate new styles; she would become her brother’s enduring musical collaborator and “closest friend for a whole lifetime.”

To grow up in rural Texas during the Depression was to know an existence defined by struggle and want. But musically, Abbott held riches. Willie basked in the hymns at the United Methodist Church. The radio transmitted enthralling sounds, too: the Western swing of Bob Wills and his Texas Playboys, the jazz of Louis Armstrong and Duke Ellington, Tin Pan Alley hits like “Stardust” and “All the Things You Are.” Willie was also captivated by the music he heard at movie matinees, especially the drifter anthems sung by Hollywood cowboys like Gene Autry and Roy Rogers. And he worked alongside his sister and grandmother in the cotton fields, where other songs rang out. “There were a few of us white people out there,” he says. “But over here, there’d be Mexicans singing mariachis. And over there, you’d hear a Black guy singing the blues.” The trumpeter and composer Wynton Marsalis recalls a revealing backstage moment. “It was me, Willie, B.B. King, Ray Charles and Eric Clapton,” he says, all shooting the breeze — “and Willie said: ‘Well, gentlemen, I think I’m the only one here who actually picked cotton.’” Everyone burst into laughter. “Willie has had some profound experiences,” Marsalis says. “His music, his knowledge, comes from a long, long way.”

At 10, Nelson joined a Czech polka band that played beer halls; when he and Bobbie were teenagers, they formed a dance band with Bobbie’s young husband. He graduated from high school in 1950, served in the Air Force for nine months (he received a medical discharge for a bad back), then tried college at Baylor University in Waco before dropping out to pursue music. He married his first wife, Martha, at 19, and had three children in short order. For the next several years, he bounced around the country while working a series of jobs (saddle maker, dishwasher, door-to-door salesman) and honing his craft.

His guitar:

In 1969, Nelson bought a new guitar, a nylon-string Martin N-20, which he fitted with a pickup to produce a tone reminiscent of one of his musical gods, the jazz guitarist Django Reinhardt. He named the guitar Trigger, after Roy Rogers’s horse, and before long his fingers had worn a hole in the soft spruce above its bridge.

Success:

The biggest success was Nelson. “Red Headed Stranger” was his first true hit album. Then, in 1978, came a blockbuster, “Stardust,” a collection of standards that stayed on the country album charts for a full decade, establishing the cowboy warbler as an interpreter of the American Songbook on par with the greatest jazz vocalists. In the years that followed, Nelson reached superstardom, attaining a presence in popular culture that arguably no other country singer has, unless Taylor Swift counts as a country singer. He starred in motion pictures. He visited the White House on numerous occasions. (On one visit, he got high on the roof with President Carter’s son Chip.) He did a public service announcement for NASA alongside Frank Sinatra and had a huge international hit with Julio Iglesias, the oily and absurd “To All the Girls I’ve Loved Before.” He was one of few country artists to join the pop, soul and rock demigods on the charity single “We Are the World.”

Diversity, classically – concordia discors:

Nelson is a scrambler of categories. He’s down-home and urbane, countercultural and traditional, a political progressive who occupies the loftiest perch in America’s most conservative musical genre. (Presumably, many fans in his home state take issue with his endorsement of Beto O’Rourke and his call to support Texas Democrats in their fight against voter suppression.) It’s impossible to name a white performer more steeped in qualities we associate with Black music — syncopation, improvisation, blue notes, the push and pull between sacred and earthly yearnings — yet not a trace of minstrelsy can be detected in his sound. He is always — indubitably, irreducibly — Willie Nelson.

The most striking feature of his career is not length but breadth. There appear to be no songs he can’t sing and few he hasn’t. Though nominally a country artist, he is really more like an American musical unconscious, tapped into the deepest wellsprings of popular song. He has a way of making everything he sings — from “Amazing Grace” and “Danny Boy” to “Time After Time” (the Cyndi Lauper song) and “The Rainbow Connection” (the Kermit the Frog song) — sound Platonic and primordial. The only comparable figures, according to Marsalis, are Ray Charles and Louis Armstrong. “To be great in all the forms that Willie is great in — it’s extremely rare,” he says. “He has whatever that spiritual thing is, that thing you can’t describe. It’s like a shamanistic type of insight into the nature of all things. From that place of understanding, he can play anything he wants to play that comes out of the American tradition.”