Sunday, November 30, 2014

The Codger Web: Virtual Villages Connecting Cyberspace and Meatspace

Conjunctions between virtual space and physical space: retirees, teens, and graffiti writers.

The New York Times is running an article about virtual villages of retirees. The members of these virtual villages live in more or less the same geographic area, but the village is not legally incorporated as a village, nor is it one single piece of real estate. The real estate is distributed, but people share tasks and lives:

Now, Mr. Cloud has all the support he needs. He can tap into Capital City Village’s network of more than 100 service companies referred by members. Dozens of volunteers will walk his dog or do yard work. When he wants to meet people, Mr. Cloud can attend house concerts in a member’s home, go to happy hour at the local Mexican restaurant or hear a champion storyteller give a talk. He has also made over 40 village friends... These villages are low-cost ways to age in place and delay going to costly assisted-living facilities, say experts. Yearly membership dues average about $450 nationally, and most villages offer subsidies for people who cannot afford membership costs. Armies of volunteers, who help run many villages, also help lower member costs by doing yard work, picking up prescriptions or taking members shopping or to the airport. At the core of these villages is conciergelike service referrals for members, said Judy Willett, national director of the Village to Village Network. Members can find household repair services, and sometimes even personal trainers, chefs or practitioners of Reiki, the Japanese healing technique. Most important, the villages foster social connections through activities like potlucks, happy hours and group trips.

The internet provides some of the asynchronous communicative interaction that helps keep these villages together:

Virtual village members stay in touch through village websites and email, or by calling local village offices. Many villages also turn to social media sites like Facebook, Twitter and YouTube to stay in touch, Ms. Willett added.

Saturday, November 29, 2014

Rereading Goethe’s Faust 1: From man to a cosmic epic

I had forgotten that the Faust legend, and hence Goethe’s epic drama, was ultimately based on the life of a real person, Georg Faust. Priest says so in his introduction, and while there were no markings or annotations in the introduction indicating that I’d read it – but I’ve marked it in the course of rereading – I surely must have done so. And Prof. Jantz surely would have mentioned the “real” Faust in an early lecture. But that fact simply did not register.

I’ve put “real” in scare quotes because, at this point, Goethe’s creature is far more real than Georg Faust is.

What does it matter that this text from the late eighteenth and early nineteenth centuries should have a place in a genealogy of memes and mentions going back to a life lived in the fifteenth and sixteenth centuries? That real life lived in one era has little bearing on the meaning of a text written in another era, and nothing whatever to do with the devices through which that text is constructed. Georg Faust’s life is no more than background to reading and studying Goethe’s text. No doubt that was why I simply forgot about it.

If I am now remarking on that fact, it is because I have an interest in the mechanisms of culture that I didn’t have back then, my freshman year, when I was, for all practical purposes, a country bumpkin in the big city. It is surely of note that this text, this Faust, started in a life lived, lived during a major transition in Western culture. For the purposes of this post, we’ll say that transition began with the Italian revolution in art that was grounded in the invention of coherent perspective and was completed with the fruition of classical mechanics in the work of Isaac Newton, a period spanning roughly three centuries. The following table lists some representative figures and dates:

| Figure | Known for | Dates |
| --- | --- | --- |
| Filippo Brunelleschi | Invented one-point linear perspective | 1377-1446 |
| Nicholas Copernicus | Championed the heliocentric view of the solar system | 1473-1543 |
| Georg Faust | Philosopher, astrologer, prophet, physician | 1480-1539? |
| William Shakespeare | Playwright often credited with inventing the modern sensibility | 1564-1616 |
| Galileo Galilei | Astronomer and physicist forced by the Church to recant his advocacy of heliocentrism | 1564-1642 |
| Isaac Newton | Synthesized the conceptual foundations of classical physics | 1642-1727 |

Georg Faust was a contemporary of Nicholas Copernicus and died more than two decades before Galileo and Shakespeare were born. He lived in a time when the construction of knowledge was being subjected to new methods.

Friday, November 28, 2014

Crimes Against Family: What do Bill Cosby and the United States Military have in Common?

I’m sure I watched I Spy a time or three back in the day, but I didn’t watch it regularly. I DID watch The Cosby Show regularly. My all time favorite bit was when the dad and the kids lip synched a Ray Charles song – “The night time, is the right time” – for mother, with little Rudy being particularly delicious. I also appreciated the fact that Cosby would have jazz musicians on the show. His father-in-law was played by a jazz musician, singer Joe Williams. He had Dizzy Gillespie on one show and, much to my delight, he had Frank Foster on another. I’d studied improvisation with Foster when he taught at UB (that is, the State University of New York at Buffalo).

As much as I am a fan of anyone – which isn’t all that much, fandom isn’t how I roll – I was a fan of Cosby’s. I was a bit startled when he started coming down hard on the lifeways of some poor black folks. Understand him, yes. But it seemed a bit harsh, especially in the overall ecology of racial attitudes and discussion. Is that how Cliff Huxtable would speak?

And then he was accused of rape. I don’t recall precisely when I first heard about that, but it was a while ago, well before the current round of accusations. Was it before or after he’d become Mr. Public Morality? I don’t recall. The accusation certainly didn’t seem consistent with the behavior of Dr. Heathcliff Huxtable. Cliff, of course, was just a fiction, a part Cosby played. But we do tend to identify performers with the parts they play, as though that’s what they are like in real life.

Of course, I know that Cliff was just a role. As a performer myself I’m keenly aware of the difference between one’s performance persona and one’s “real” self. Give me a trumpet and put me on stage with a kick-ass rhythm section and I’m Mister Confident Superhero Sex God John the Conqueror, but in real life I’m shy, reserved, and ridiculously intellectual, though leavened with a bit of wit. So I never actually believed that Cosby played himself when playing Heathcliff, but nonetheless, Heathcliff became my default for Bill Cosby himself.

The upshot: those accusations were dissonant. At this point I don’t recall what I thought of those accusations back then. I’m pretty sure my initial reaction would have been denial. I’m also sure that I thought about it beyond that initial denial. Most likely I just put the accusations on a mental shelf without either denying or affirming them in my mind.

That’s not possible now. There are too many accusations. I think he did it.

Thursday, November 27, 2014

Rereading Goethe’s Faust 0: Assessing my life at the dawn of a new era

I read Goethe’s Faust during my freshman year at Johns Hopkins. Prof. Harold Jantz – “one of the foremost Germanists of his generation” – offered a one-credit course in which that was the only book we read. In English translation, of course. Which was the point of the course, a chance to read one of Western literature’s great books in English translation.

Beyond that, I forget just why I decided to take the course. I’ve got a vague memory that this course was highly regarded – like Prof. Phoebe Stanton’s two-semester introduction to art history – and that’s why I took it. If so, I must have taken it in the Spring semester as I would have had no access to such local knowledge for the Fall semester.

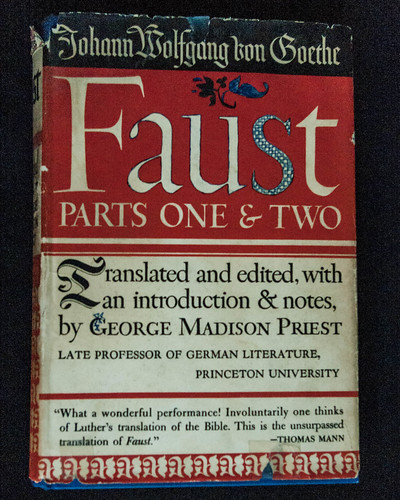

Translated by George Madison Priest

I have little recollection of the course. I believe my friend Peter also took the course, and I remember that Jantz was a relatively short soft-spoken man. I even have a vague memory of a relatively short man in a medium brown tweed suit, with a part in his hair, though he was balding in front. But I wouldn’t put any money on that recollection.

I’m pretty sure I enjoyed the course, but I have no specific memories of it. All I’ve got are these vague memories – but who knows what I would recall under hypnosis? – and the book itself.

We used a 1941 translation by George Madison Priest. Judging from the front matter, it is a revision of a translation that had originally been published in 1932. Priest was born in Kentucky in 1873. He got his bachelor’s and master’s degrees at Princeton in 1894 and 1896 and then got his doctorate at Jena in 1907.

This translation is thus a very Old School production, Ivy League and the Continent. And one that was praised by no less than Thomas Mann. That’s his endorsement down there at the bottom:

It’s not even in print these days – but you can download it for free from Archive.org – and it wasn’t mentioned at all when I did a web search on the question, “What’s the best English translation of Goethe’s Faust?” Was Thomas Mann’s evaluation wrong? Have fashions changed in translation? Though I don’t actually know this, the latter is likely the case. But the translation still might be a good one by some credible criterion, just a bit old. As I can’t read German I’m in no position to second-guess Thomas Mann.

Wednesday, November 26, 2014

Culture will have Arrived as an Area of Serious Study when

... things like truth, love, beauty, and justice are widely recognized as core human needs and motivators, as important as the need for food, water, shelter, and sex. Oh, I know, there are those who already recognize the importance of those motivations. But they’re “fuzzy humanists” who aren’t taken seriously by more “realistic” thinkers like biologists, evolutionary psychologists, economists, and bankers.

To the extent that those “rigorous” thinkers – think rigor mortis – recognize truth, love, beauty and justice, they try to reduce them to some variety of rational self-interest in service of genes and power.

Those people don’t know what they’re talking about. Alas, they run the world, or are attempting to do so. In the end the world will defeat them, but they may take the rest of us down with them.

And Scotty said, "Computer, tell us a story"

And so the computer did. Sorta.

November is NaNoGenMo (National Novel Generation Month), started last year by Darius Kazemi, says The Verge.

Nick Montfort’s World Clock was the breakout hit of last year. A poet and professor of digital media at MIT, Montfort used 165 lines of Python code to arrange a new sequence of characters, locations, and actions for each minute in a day. He gave readings, and the book was later printed by the Harvard Book Store’s press. Still, Kazemi says reading an entire generated novel is more a feat of endurance than a testament to the quality of the story, which tends to be choppy, flat, or incoherent by the standards of human writing. "Even Nick expects you to maybe read a chapter of it or flip to a random page," Kazemi says. Narrative is one of the great challenges of artificial intelligence. Companies and researchers are working to create programs that can generate intelligible narratives, but most of them are restricted to short snippets of text.

And a good thing, too.
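For the curious, here is a toy sketch of the kind of thing the quoted passage describes: a short Python program that picks a character, a location, and an action for each minute in a day. This is not Montfort's code. The word lists, the sentence template, and the function name `minute_sentences` are all made up for illustration.

```python
import random

# Hypothetical word lists; the lists World Clock actually uses are not
# given in the passage quoted above.
CHARACTERS = ["a man", "a woman", "a child"]
LOCATIONS = ["Lagos", "Oslo", "Lima"]
ACTIONS = ["reads a label", "counts coins", "folds a map"]


def minute_sentences(seed=0):
    """Yield one generated sentence for each of the 1440 minutes in a day."""
    rng = random.Random(seed)  # seeded so the same "novel" can be regenerated
    for minute in range(24 * 60):
        hour, mins = divmod(minute, 60)
        place = rng.choice(LOCATIONS)
        who = rng.choice(CHARACTERS).capitalize()
        what = rng.choice(ACTIONS)
        yield f"It is {hour:02d}:{mins:02d} in {place}. {who} {what}."


if __name__ == "__main__":
    # Print only the first three minutes; the full run is 1440 sentences.
    for i, sentence in enumerate(minute_sentences(seed=1)):
        if i == 3:
            break
        print(sentence)
```

Even this toy makes Kazemi's point: the structure is trivially easy to generate, and nobody would want to read all 1440 sentences.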

Tuesday, November 25, 2014

Policy, Strategy, Tactics: Intellectual Integration in the Human Sciences, An Approach for a New Era

Roughly three decades ago, in my final year on the faculty at the Rensselaer Polytechnic Institute, I participated in an exercise to rethink the School of Humanities and Social Sciences. I took that as an opportunity to, you know, actually rethink how education was done. So I designed a modular approach to structuring an introductory interdisciplinary course in the humanities and social sciences.

At that time online learning didn't exist. But the modular approach I developed back then could certainly be applied to connected learning, and co-learning could certainly be incorporated into course design as well. It is in that spirit that I direct you to that document, Policy, Strategy, Tactics: Intellectual Integration in the Human Sciences, An Approach for a New Era. I've appended the 21st century introduction I wrote for that 20th century document.

* * * * *

Remarks from the 21st Century

My first and only faculty appointment was in the Department of Language, Literature, and Communication at the Rensselaer Polytechnic Institute in Troy, New York, a very good engineering school, the oldest in the country. During the summer of 1985 the School of Humanities and Social Sciences embarked on one of those periodic soul-searching exercises academic units undertake in order to revitalize their mission and–hope! hope!–increase the budget. Accordingly, the school offered a number of faculty small stipends to develop innovative brand-spanking new courses that they would present at a faculty retreat.

I took one of those stipends despite the fact that I would not be returning in the Fall and developed, not a course, but an approach to curriculum design which is camouflaged as a strategy for designing a large lecture-based introductory course. I understand that such courses have been getting a bad rap, and I even understand why, I think. Nonetheless if I had to do it again in this new millennium I would. But that’s neither here nor there.

What’s important is the method I used. It’s a method that could be used in designing any course in the human sciences whatsoever, though its interdisciplinary nature is particularly suited to the large introductory course as that’s the kind of course that could most readily command the participation of faculty from a half-dozen or more disciplines. But the method could also be used in planning a suite of modules to be offered online and which individual students could organize into individualized programs that nonetheless met a coherent set of overall curricular goals.

The scheme is designed to organize materials according to three high-level criteria:

- interpretive (hermeneutic), social and behavioral scientific, and structural (in the style of linguistics) approaches are all represented,

- historical (diachronic) and structural/functional (synchronic) approaches are represented,

- material from other, preferably non-Western, cultures is presented.

Thus each module would employ either an interpretive, a social scientific, or a structural methodology and would be either historical or structural/functional in character. A student’s suite of modules would have to represent each of the three methodological styles, include both diachronic and functional topics and include materials from a range of different cultures.
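For readers who think in code, the distribution requirement can be sketched as a small check over a student's suite of modules. This is only an illustration under stated assumptions: the `Module` fields, the label strings, the function name `unmet_requirements`, and the threshold of two cultures (my reading of "a range of different cultures") are hypothetical and do not come from the report.

```python
from dataclasses import dataclass

# The three methodological styles and two orientations named in the scheme.
# The label strings themselves are illustrative choices.
METHODS = {"interpretive", "social_scientific", "structural"}
ORIENTATIONS = {"diachronic", "synchronic"}


@dataclass(frozen=True)
class Module:
    title: str        # hypothetical module title
    method: str       # one of METHODS
    orientation: str  # one of ORIENTATIONS
    culture: str      # culture the module's material is drawn from


def unmet_requirements(suite, min_cultures=2):
    """Return a list of unmet requirements; an empty list means the suite passes.

    min_cultures=2 is an assumed threshold, not one stated in the report.
    """
    problems = []
    missing_methods = METHODS - {m.method for m in suite}
    if missing_methods:
        problems.append("missing methods: " + ", ".join(sorted(missing_methods)))
    missing_orientations = ORIENTATIONS - {m.orientation for m in suite}
    if missing_orientations:
        problems.append(
            "missing orientations: " + ", ".join(sorted(missing_orientations))
        )
    cultures = {m.culture for m in suite}
    if len(cultures) < min_cultures:
        problems.append(
            f"only {len(cultures)} culture(s) represented; need at least {min_cultures}"
        )
    return problems
```

The point of writing it this way is that the check looks only at how a suite is distributed across methods, orientations, and cultures, never at the specific topics, which is the emphasis of the scheme.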

This, I know, is all rather abstract. But I flesh it out in the full report by designing two versions of a course, Signs and Symbols, having 12 modules. I call one version of the course “top-down” because it is organized in a fairly conventional way as a selection of different topics under the general rubric of sign systems and communications. That is, it proceeds from some conception of how knowledge is structured and generates topics from that, top-down. Obviously there are a zillion ways of designing such a course and no doubt half of them have already been offered at one time or another. What’s important is the overall distribution of topics, not the specific topics themselves.

The other version of the course is rather different. I selected a specific text, Shakespeare’s Romeo and Juliet, and generated the 12 modules from that text. The modules are somewhat different from those in the top-down version of the course but they satisfy the same distribution requirements, covering the three methodologies (interpretive, social scientific, structural), both diachronic and functional topics, and a variety of cultures. Were I to redesign that course today I’d be inclined to swap Disney’s Fantasia for Shakespeare’s Romeo and Juliet. The collection of different topics would change, of course, but the same design criteria would be met.

Formal Citation for Blog Entries

How do you cite a blog post in formal academic writing? Martin Fenner at PLOS tells how (from 2011, ancient times in web-years). APA, MLA, Chicago, BibTeX. H/t Richard at Replicated Typo.

Addendum: APA style, for websites in general. H/t Laura Ritchie.

Monday, November 24, 2014

Pedagogical Styles 3: Courses I have taught (or taken)

Now that I’ve put some ideas on the table – Vygotsky, coaching, lecturing – I want to describe four different courses, three of which I’ve taught, one that I took. All of that took place in the ancient days before personal computing and the web. My objective is simply to get four different kinds of courses together in one document.

What affordances do these courses have for co-learning?

Freshman Comp

I was trained in English literature, which means that, like just about everyone with such training, I also had to teach composition – first, to earn my tuition while getting the degree and then when I got my first (and only) teaching job. The fact is that Ph. D. training in literature doesn’t even train you to teach literature – at least it didn’t back in those days – much less train you to teach writing, which is an entirely different kind of activity. The two have only one thing in common: the written language.

One consequence of this disparity is that many a freshman comp course has been taught as a “content” course that just happens to have a lot of writing assignments, generally weekly. Between graduate school and my faculty job at RPI (Rensselaer Polytechnic Institute) I taught freshman comp, say, a half dozen or more times. I doubt that I taught it the same way twice and I forget what I did most of the times I taught the course.

But, one time at RPI I taught the course using a reader – a common thing to do. I forget which of the many such readers I used, but, like all of them, it had a large selection of pieces, both fictional and not, which you could use as the basis of a writing assignment. The weekly assignment, then, is simple: Read such and such a selection and write such and such a piece based on what you read. I’m sure I had some strategy with which I selected the weekly assignments, and I’m sure I allowed various alternatives as well, but I don’t remember that.

As I recall, the major difficulty was in making useful comments about student writing, where the problems ranged from grammar and spelling to theme, organization, and logic. I often thought that it would have been easier for me simply to re-write a sentence or paragraph than to explain what was wrong, why it was wrong, and how to do better. I note in passing that this was before the days of personal computers, let alone the time when every student had one.

I came away from this experience with two general impressions: First, what you need to do to learn to write is, above all, to write, a lot. More than you do in a one-semester composition course. Writing a lot may even be more important and useful than having an instructor make sometimes helpful, sometimes obscure remarks on your paper. Second, this really would go better with weekly one-hour one-on-one tutorial sessions, like music lessons.

From Multiple Personalities to Dissociative Identity Disorder

Below the asterisks I've copied some remarks about this disorder from my article, First Person: Neuro-Cognitive Notes on the Self in Life and in Fiction, PSYART: A Hyperlink Journal for Psychological Study of the Arts, August 21, 2000.

* * * * *

There is another aspect of the neural self, one that has to do with the continuity and coherence of the representation. We can approach this issue by considering dissociative identity disorder (DID), an extreme pathology in which the neural self is fractured. In DID, also known as multiple personality disorder, one biological individual exhibits several different identities, each having different memories and personal style. In Thigpen and Cleckley's (1957) classic study Eve had three personalities; Schreiber's (1973) Sybil had sixteen (see also Rappaport 1971, Stoller 1973). Although there has been some controversy over whether or not DID is real or simply the effect of zealous therapeutic invention and intervention, there is no doubt that at least some cases are genuine (Schachter 1996, 236-242, Spiegel 1995, 135-138).

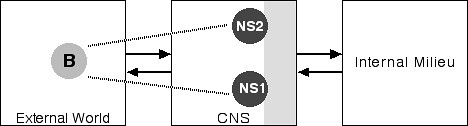

These different identities have different personal histories. The events in one personal history typically are unknown to the other histories. Each identity will have blank periods in its history, intervals, obviously, where another identity was being enacted. And the different "persons" are often unaware of one another. Further, the different identities seem to have different personal styles, different modes of speech, of movement, of dress, and so forth. Thus both the core and autobiographical selves seem to be riven. Using the conventions we employed above, Figure 7 is a simple depiction of DID:

Figure 7: Dissociative Identity Disorder

Notice that we now have two neural selves, NS1 and NS2, corresponding to two different identities. Of course, these two selves exist in the same body, so we have only one corresponding body in the external world.

We do not, so far as I know, understand why or how DID happens. It is not, however, the result of the sort of gross destruction of brain tissue that underlies anosognosia. One might imagine, for example, that the different selves reside in distinctly different patches of neural tissue, a speculation that Damasio (1999b, 355) himself has suggested for the autobiographical self (though he presents no evidence). This suggestion, however, has at least one problem: How does the nervous system switch from one identity to another? There is another way of explaining DID, equally speculative and equally without specific evidence, that eliminates this particular problem.

Robert Boyd on Cooperation

Why do humans cooperate in ways that other mammals don't? In the non-human world almost all cooperation can be explained in terms of genetic relatedness between the cooperators (via kin selection or reciprocal altruism). Humans are different. There is a great deal of cooperation between people who aren't genetically related.

H/t Tim Tyler.

Sunday, November 23, 2014

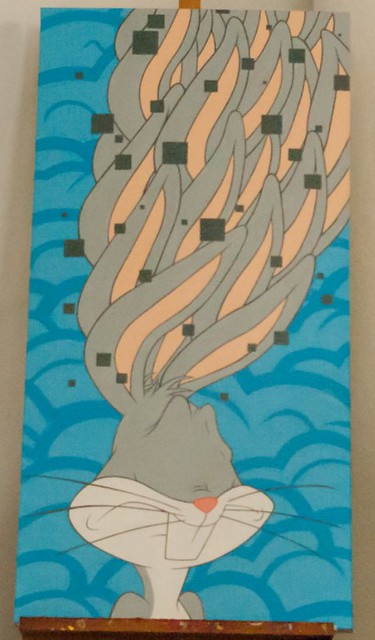

Street Aesthetics: Why Jerkface is Killin’ It

Three things are distinctly odd about these images, both by Jerkface – as you see, Bugs Bunny is on canvas; Sylvester is on a wall. Neither face has eyes; both pictures appear to be “pierced” by a pattern of squares; and Bugs has multiple ears while Sylvester has multiple paws. Note that the pattern of squares doesn’t respect the distinction between foreground and background – well, in the Bugs Bunny it almost does, but it definitely doesn’t in the Sylvester.

Let’s start with the squares. As a comparison, let’s look at this graffiti piece:

In typical fashion the name dominates the image. What interests me are those rectilinear forms walking over the surface. The name-form is bordered in orange, and we see orange rectilinears merging into that outline, with a few free of it. The blue and green rectilinears within the name-form work a bit differently. Some of them respect the letter-form boundaries, but some extend beneath those boundaries while others go over them.

The overall effect is similar to that of the squares in the Jerkface images. In all three cases we’ve got two quasi-independent image “logics” that intersect one another in the image plane. One is figural – cartoon characters for Jerkface, a name for the graffiti piece – and the other is a relatively simple geometric pattern.

Saturday, November 22, 2014

Interdisciplinarity is the Utopian Dream of an academic culture whose time is rapidly running out

I was looking around over at danah boyd’s joint, apophenia, and came across an old post: identity crisis: the curse/joy of being interdisciplinary and the future of academia. Ah yes, by all means interdisciplinary, what’s not to like?

The Romance of Interdisciplinarity

Here’s the penultimate sentence of her first paragraph: “The last big explosion was really the French scholars circling around in 1968.” Well for me the year is 1966, that’s when much the same group of French scholars landed at Johns Hopkins in Baltimore at the (in)famous structuralism conference. While I didn’t attend the conference – wouldn’t have done me any good, as I don’t speak French – I was at Hopkins at the time and one of the conference organizers, Dick Macksey – though in those days it was “Dr. Macksey” – was my mentor.

And here’s the opening sentence of boyd’s last paragraph: “So, if i think about what the next revolution in academia will be, it will have to be interdisciplinary.” But, you see, that 1966 structuralism conference was sponsored by Ford Foundation money and that money was specifically interested in interdisciplinarity. That’s what that conference was about. And that’s what the newly-founded Humanities Center, which hosted those Frenchmen, was about.

How is it, then, that forty years after that conference danah boyd is looking to the ever-lovin’ future for interdisciplinarity? If interdisciplinarity really was the future, as seen from 1966, why is it still the future, both in 2005 (when boyd published that post) and even near the end of 2014 as I sit here at 6AM writing this post?

Question: What happened to the interdisciplinary future?

Answer: It’s floated down the river Styx into the 19th century past.

There has in fact been a great deal of interdisciplinary work since 1966, but it’s just not reflected in the names of academic departments. What you have are interdisciplinary centers of all kinds. Each draws on faculty from several departments and picks up funding wherever spare change drops off departmental tables here and there. But these “centers” don’t have the power to confer degrees. That power still remains with the academic departments, and they’re a legacy of 19th century academia.

What we have, then, is departments that are still committed to traditional avenues of investigation and still, for the most part, cranking out scholars most of whom see little choice but to color within those lines. But you also have folks like boyd, or Mark Changizi, or even a Steven Pinker who don’t play by those rules and consequently are frustrated. Boyd’s not in academia, or only marginally so; Changizi left a couple of years ago; and Pinker spends half his time writing for the general educated public.

Of course, boyd knows this.

Friday, November 21, 2014

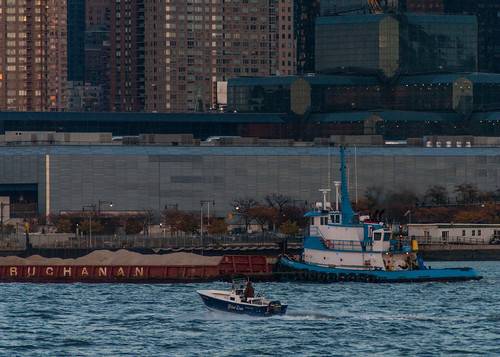

Friday Fotos: New York's Times Square from the Jersey Side

Obviously you can't see Times Square from across the Hudson River in Hoboken, New Jersey. But you can see the tops of buildings around it.

Why Cultural Evolution Needs a Distinction Between “Genes” and “Phenotypes”

I’m thinking I’m about to burn out on cultural evolution, so this will be relatively short and informal.

* * * * *

Ever since I began thinking about a Darwinian process for cultural evolution back in the mid-1990s I’ve insisted on making a distinction between phenotypic entities (which I’m now calling “phantasms”) and genotypic entities (which I’m now calling “coordinators”). Why? My basic reason was to preserve the analogy between the cultural evolutionary process and the biological one.

That’s understandable, and it was a reasonable thing to do – back then. But there’s been a great deal of discussion about whether or not such a distinction needs to be made for cultural evolution, and if so: how do we make it? Some thinkers, like Dennett and Blackmore, don’t make such a distinction at all, being content to theorize about memes, which are thus more like viruses than genes. To be sure, they’ve not gotten very far, nor for that matter has anyone else. But still, the issue must be faced, for there needs to be a better reason for such a distinction than the mere logic of analogy.

After all, what if the underlying logic of cultural evolution is different from that of biological evolution? What if there is no distinction comparable to the genotype-phenotype distinction?

My contention is that there is such a distinction and I’m now prepared to offer a reason for it:

Culture resides in people’s minds and the mind is in the head. We cannot read one another’s minds.

The environment to which cultural entities must adapt is the collective human mind. That’s been clear to me for a long time. And, of course, various conceptions of collective minds have been around for a long time as well. The problem is to formulate a conception in contemporary terms, terms which admit of no mystification.

I did that in the second and third chapters of Beethoven’s Anvil (2001) where I argued that when people make music together, and dance as well, their actions and perceptions are so closely coupled that we can think of a collective mind existing for the duration of that coupling. There are no mystical emanations engulfing the group. It’s all done through physical signals, electro-chemical signals inside brains, visual and auditory signals between individuals.

In this model the genetic elements of culture are the physical coordinators that support this interpersonal coupling. These coordinators are the properties of physical things – streams of sound, visual configurations, whatever – and as such are in the public sphere where everyone has access to them. Correspondingly, the phenotypic elements are the mental phantasms that arise within individual brains during the coupling. These phantasms are necessarily private though, in the case of music making, each person’s phantasm is coordinated with those of others.

If those phantasms are pleasurable – I defined pleasure in terms of neural flow in chapter four of Beethoven’s Anvil – then people will be motivated to repeat the activity and those phantasms will thus be repeated. One of the factors that lead to pleasure is precisely the capacity to share the experience with others. The function of coordinators is to support the sharing of activities and experiences. Just as genes survive only if the phenotypes carrying them are able to reproduce, so coordinators survive only if they give rise to sharable phantasms.

Culture is sharable. That’s the point. If it weren’t sharable it wouldn’t be able to function as a storehouse of knowledge and values.

* * * * *

That, briefly and informally, is it. Obviously more needs to be done, a lot more. I can do some of it, though not now. But much of the heavy lifting is going to have to be done by people with technical skills that I don’t have.

Thursday, November 20, 2014

Co-learning Models: Meta-Cognition, Democratic Schools, and Entrepreneurs

I have some brief reactions to three of the topics that came up in last evening’s Connected Courses webinar (with Cathy Davidson & 3 of her students, David Preston and a students, Mia Zamora, Howard Rheingold, & Alec Couros), Successful Co-learning Models: 1) metacognition, 2) before higher ed, and 3) start-ups.

Metacognition

Cathy Davidson talked about how she had students write their own letters of evaluation and how the things they wrote surprised and, I gather, delighted her. Starting about 15:50 in:

... self-knowledge, or learning how I thought, Howard would call it metacognition, those introspective words were the words that came up more in people’s self-evaluation or what they learned, even if they were talking about project learning, than I ever in any other way would have thought about, and I’ve never seen that in the literature. Where somehow learning how to coll[aborate]… putting together what everyone has said. Learning to work with others makes you think about who you are. I mean, that makes sense as social creatures that it would. But it was fascinating to read those letters.

Yes. If you are going to learn to learn, then you need to think about your learning process, you need to think about how you think.

Putting my Piagetian hat on I note that Piaget argued that later cognitive stages bootstrapped over earlier stages through a process he called reflective abstraction. The mechanisms of cognition at stage N become the objects of cognition at N+1. Is that process what those students were reporting in their self-evaluations?

There is, of course, a Vygotskian angle as well, which Davidson indicated when she noted: “Learning to work with others makes you think about who you are.” As students query one another and respond to those queries they come to internalize the query function, which serves as a bootstrap mechanism.

And if, as Davidson suggested, this isn’t in the literature, then here’s a whiz-bang dissertation project for someone ready to take it on.

Wednesday, November 19, 2014

Green Villain Plots Galactic Skywriting Program from Secret R&D Lab on the Outskirts of Jersey City

Green Villain has enlisted the services of Bradley Ehrsam to craft the technology that will take it out of this world. These hyper-flux transducers have two modes of operation:

In afferent mode they detect those subtle fluctuations in The Force indicating the existence of opportunities for aesthetic intervention by any means necessary. In efferent mode they provide power for Green Villain's fleet of interstellar transport vehicles:

And here's the GV nerve center...

Learning to Learn and Co-learning, NOW

I have a few remarks in response to the recent dialog with Howard Rheingold, Mia Zamora, Alec Couros, Lee Skallerup Bessette, Charlotte Pierce, and Joe Corneli on Co-learning and Authority. What interests me is the question that Mia Zamora brought up at the end (47:43): Why co-learning now?

But I don’t want to go there directly. Let’s meander just a bit. In the following remarks I’m thinking out loud. There’s a lot of guessing and surmising. But I bring in a bit of concrete evidence at the end.

Learning and Acquisition

The question of how young kids learn came up early in the conversation. Learn what? I ask because one of the things Noam Chomsky did was change how we think about language learning. He observed that children don’t “learn” language in the ordinary sense of the term, which implies deliberate focused activity. They just “hoover” it up; that is, there is little or no deliberate learning or, for that matter, instruction. “Acquisition” has become the term of art, “first-language acquisition.”

Second language learning in adulthood, in contrast, is learning in the ordinary sense. So is learning arithmetic, or, for that matter, learning to ride a bicycle. What’s the underlying difference between “natural” acquisition and effortful learning?

I suspect there is something of a continuum between the two modes and that acquisition tapers off after adolescence, when brain maturation is almost complete (there’s incremental growth into the early twenties). Further, we don’t really engage children in sustained effortful learning until formal schooling begins at six or so. In Piagetian terms, formal learning begins with the concrete operations period of cognitive growth and acquisition ends with the commencement of formal operations.

We might put the issue in a broader perspective: Is focused effortful learning uniquely human or is it widespread among animals? I’m going to guess that, with a major qualification, it’s uniquely human. The qualification is that humans can and do train animals to do all sorts of things, and that training is effortful learning.

Tuesday, November 18, 2014

Marc Andreessen on Online Education

New York Magazine interviews Marc Andreessen, co-founder of Netscape turned venture capitalist. Here's what he says about online education, with some skeptical remarks of my own at the end:

You could probably bring in the whole online-education movement. But for me, the question is, who does the best with online schooling? And it’s mostly autodidacts, people who are self-starters. They’ve found that people from low-income communities actually get the least out of it.

It’s way too early to judge, because we’re at the very beginning of the development of the technology. It’s like critiquing DOS 1.0 and saying that this will never turn into the Windows PC. We’re still in the prototype experimental phase. We can’t use the old approach to teach the world. We can’t build that many campuses. We don’t have the space. We don’t have money. We don’t have the professors. If you can go to Harvard, go to Harvard. But that’s not the question. The question is for the 14-year-old in Indonesia staring at a life of either, like, subsistence farming or being able to get a Stanford-quality education and being able to go into a profession.

The one other thing that people are really underestimating is the impact of entertainment-industry economics applied to education. Right now, with MOOCS, the production values are pretty low: You’ll film the professor in the classroom. But let’s just project forward. In ten years, what if we had Math 101 online, and what if it was well regarded and you got fully accredited and certified? What if we knew that we were going to have a million students per semester? And what if we knew that they were going to be paying $100 per student, right? What if we knew that we’d have $100 million of revenue from that course per semester? What production budget would we be willing to field in order to have that course?

You could hire James Cameron to do it.

You could literally hire James Cameron to make Math 101. Or how about, let’s study the wars of the Roman Empire by actually having a VR [virtual reality] experience walking around the battlefield, and then like flying above the battlefield. And actually the whole course is looking and saying, “Here’s all the maneuvering that took place.” Or how about re-creating original Shakespeare plays in the Globe Theatre?

Monday, November 17, 2014

Urban Geometry: Light and Shadows

This is a shot of Manhattan's West side:

What interests me is the play of light and shadows. I took the shot in the mid-afternoon with the sun to the South (left). It was a bright day and the sun cast dramatic shadows. Notice, however, the dappled effect on the north wall of the building. That's light being reflected from the windows of a building that we can't see somewhere to the left.

The shadows are so dramatic that they create a visual rhythm that cuts across the rhythm formed by the structures themselves. That effect is more dramatic when I use Photoshop to flatten the image:

In the next image I've converted the shot to a gray-scale image:

It’s Time for America to Reinvent itself Top to Bottom

But that’s not what I titled this month’s article at 3 Quarks Daily. I gave it a somewhat more provocative title, American Craziness: Where it Came from and Why It Won’t Work Anymore. The craziness is why America has to reinvent itself.

The core of my argument comes from an article I read in my freshman year at Johns Hopkins, Talcott Parsons’s “Certain Primary Sources and Patterns of Aggression in the Social Structure of the Western World” (full text online HERE). Parsons argues that life in Western nations generates a lot of aggressive impulses that cannot, however, be satisfied in any direct way. Why not? Because Western society is highly hierarchical and there is a great deal of aggression from superiors against inferiors, who cannot, however, respond in kind because to do so would be dangerous.

What, then, can those social inferiors do with their aggression? Well, they can let it rot their spirit and, eventually, their bodies as well. And that does happen. But they can also direct their aggression at external enemies. That happens as well.

This has certainly been the case in America. The Cold War was more of a psychic release mechanism for the nations involved – America included – than it was a collision of rational foreign policies East and West. But, as I point out in the 3QD piece, America had developed a sophisticated variation on the mechanism that was organized around slavery.

The institution of slavery in effect gave America an internal colony against which white Americans could direct their aggressive impulses. And when slavery was banished, institutionalized racism kept that colony in place. While the Civil Rights movement certainly changed the legal parameters of that social mechanism, and had real and beneficial effects in the world, the mechanism is still alive.

But, really, as I argue in the 3QD piece, this baroque contraption is ready to fall apart, hence the deadlock in America’s national politics.

I do something else in that piece, however, something of a more theoretical nature. I push Parsons’ argument a bit further than he did. As his title notes, he was arguing about Western nations, not nations in general. Yet anyone who finds his argument convincing can see that the mechanisms he describes are not confined to the West. They’re ubiquitous.

What if, I suggest, that mechanism is built into the fundamental cultural psychodynamics of the nation-state? For Parsons there is the nation, on the one hand, and, incidentally, there is this strange psycho-cultural dynamic that happens in Western nations. In his argument that dynamic is not intrinsic to the nation-state, it is merely incidental. I argue that, on the contrary, that projective dynamic is intrinsic to the nation-state; it is how the nation-state works.

Saturday, November 15, 2014

Intention and Reading: Red Chair and Masters of the Universe

What’s going on in that photograph? I know, it’s subjective; you see things differently than I. But I shot it, so I ought to know, but do I?

The thing is, photographs are most often complex objects – that one certainly is – so there’s lots to see. I may have taken that shot, and I certainly had something in mind when doing it – but that hardly implies that I was or even now am aware of all that’s there.

But does all that’s there matter?

What I mostly had in mind was the dramatic contrast between the red of the chair and, well, everything else.

I also thought of “The Red Wheelbarrow” of William Carlos Williams. There’s no wheelbarrow in the shot, but, red, yes indeed! And one might see a similarity of shape between the wheelbarrow and the chair, where the back of the chair corresponds to the handle and the seat, arm rests, and legs correspond to the body.

One might see that. Did you? Does it matter?

[Not so much, I think, not so much.]

But, really, that was only in passing, yet given the cultural importance of Williams and the fuss that's been made over that particular poem, that flicker of a thought is of interest.

There’s obviously a lot more going on in that picture. I am not unaware of the identity of those buildings in the background, and the fact that they are not in focus does little to hide their identity. Whether or not you recognize them depends, of course, on your general knowledge of major cities, one city in particular.

Whatever your knowledge, of course you recognize that there’s a city in the background and that it’s on the other side of a body of water. That’s right there in the photo and requires no special knowledge of anything in particular, except of (large) cities – knowledge not universally shared among humans, even in these days of internets and cell phones.

The city, of course, is New York City, and that’s lower Manhattan. But that’s not quite the most identifiable part of NYC’s skyline. That would be mid-town, with its iconic Empire State Building. Lower Manhattan used to have the Twin Towers of the World Trade Center – love ‘em or not – but they fell over a decade ago. That building you see in the center is what replaces them. That building’s not so well recognized, though the controversy surrounding it has certainly been in the news.

You may recognize that building, and its location, without quite recognizing that district as Wall Street, New York’s financial district. That’s where the self-styled Masters of the Universe run things, or so they think. That’s where fortunes are made and economies wrecked.

Friday, November 14, 2014

Pedagogical Styles 2: Lectures, and beyond…

It’s time to follow up my previous pedagogical post, on music and Socrates (that is, coaching and midwifery), with one on lecturing, a rather different technique. Lecturing became associated with university study in the days before printing and so is, to some extent, a substitute for private ownership of cheap books. Instead of buying a good book on some subject, or at least borrowing one from the local library, the student would attend a course of lectures at a university.

The Lecture

Here’s what the Wikipedia article on lectures has to say:

The practice in the medieval university was for the instructor to read from an original source to a class of students who took notes on the lecture. The reading from original sources evolved into the reading of glosses on an original and then more generally to lecture notes. Throughout much of history, the diffusion of knowledge via handwritten lecture notes was an essential element of academic life.

Even in the twentieth century the lecture notes taken by students, or prepared by a scholar for a lecture, have sometimes achieved wide circulation (see, for example, the genesis of Ferdinand de Saussure's Cours de linguistique générale).

That last example is worth a thought or two. I’m one of many who’ve read those Saussure notes; they’ve been standard in certain courses of study. These days, of course, the selling of lecture notes has become a business, as I’ve noted in an earlier post. We’ll get back to lecture notes shortly.

Before that, however, this passage from The Rise of Universities (by Charles Homer Haskins; Henry Holt and Company, New York; 1923; pp. 37-78) puts some flesh on the Wikipedia passage and is worth a quick skim:

The teachers of the thirteenth century who talk most about themselves are the professors of grammar and rhetoric like Buoncompagno at Bologna, John of Garlande at Paris, Ponce of Provence at Orleans, and Lorenzo of Aquileia at Naples and almost everywhere, but we shall make sufficient acquaintance with their inflated writings in other connections. More significant is the account which Odofredus gives of his lectures on the Old Digest at Bologna:

“Concerning the method of teaching the following order was kept by ancient and modern doctors and especially by my own master, which method I shall observe: First, I shall give you summaries of each title before I proceed to the text; second, I shall give you as clear and explicit a statement as I can of the purport of each law [included in the title]; third, I shall read the text with a view to correcting it; fourth, I shall briefly repeat the contents of the law; fifth, I shall solve apparent contradictions, adding any general principles of law [to be extracted from the passage], commonly called ‘Brocardica,’ and any distinctions or subtle and useful problems (quaestiones) arising out of the law with their solutions, as far as the Divine Providence shall enable me. And if any law shall seem deserving, by reason of its celebrity or difficulty, of a repetition, I shall reserve it for an evening repetition, for I shall dispute at least twice a year, once before Christmas and once before Easter, if you like.

“I shall always begin the Old Digest on or about the octave of Michaelmas [6 October] and finish it entirely, by God’s help, with everything ordinary and extraordinary, about the middle of August. The Code I shall always begin about a fortnight after Michaelmas and by God’s help complete it, with everything ordinary and extraordinary, about the first of August. Formerly the doctors did not lecture on the extraordinary portions; but with me all students can have profit, even the ignorant and new-comers, for they will hear the whole book, nor will anything be omitted as was once the common practice here. For the ignorant can profit by the statement of the case and the exposition of the text, the more advanced can become more adept in the subtleties of questions and opposing opinions. And I shall read all the glosses, which was not the practice before my time.” Then comes certain general advice as to the choice of teachers and the methods of study, followed by some general account of the Digest.

This course closed as follows: “Now gentlemen, we have begun and finished and gone through this book as you know who have been in the class, for which we thank God and His Virgin Mother and all His saints. It is an ancient custom in this city that when a book is finished mass should be sung to the Holy Ghost, and it is a good custom and hence should be observed. But since it is the practice that doctors, on finishing a book should say something of their plans, I will tell you something but not much. Next year I expect to give ordinary lectures well and lawfully as I always have, but no extraordinary lectures, for students are not good payers, wishing to learn but not to pay, as the saying is: All desire to know but none to pay the price. I have nothing more to say to you beyond dismissing you with God’s blessing and begging you to attend the mass.”

Important as was the formal lecture in those days of few books and no laboratories, it was by no means the sole vehicle of instruction. A comprehensive survey of university teaching would need also to take account of the less formal ‘cursory’ or ‘extraordinary’ lectures, many of them given by mere bachelors; the reviews and ‘repetitions,’ which were often given in hospices or colleges in the evenings; and the disputations which prepared for the final ordeal of maintaining publicly the graduation thesis.

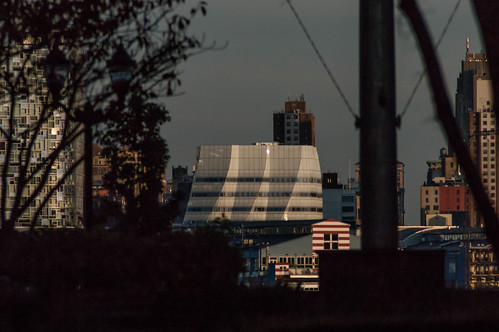

Friday Fotos: The intersection of dreams and reality in light

I took all of these photos on the same walk, yesterday evening along and near the shore in Hoboken, using more or less the same technique, a long exposure in which I zoomed the lens and moved the camera. The result is that here and there you can make out definite objects – in some cases more, some cases less – but they are distorted and obscured. This photo is perhaps the easiest to read:

That's One World Trade Center in the middle. From that the rest pretty much follows. The bright streaks in the foreground are street lights on the Hoboken side of the river.

This is the same kind of shot, but the target building is on the Hoboken side of the river:

Thursday, November 13, 2014

Dennett's Santa Fe Workshop on Cultural Evolution

In May of this year philosopher Dan Dennett convened a workshop on cultural evolution at the Santa Fe Institute. The following were in attendance: Susan Blackmore, Robert Boyd, Nicolas Claidière, Peter Godfrey-Smith, Joseph Henrich, Olivier Morin, Peter Richerson, Dan Sperber, Kim Sterelny. While the workshop has not issued any formal proceedings, an informal report has been posted at the International Cognition and Culture Institute (ICCI). Dennett has prepared a summary of the discussions and each individual discussant has also prepared summary remarks. Dennett notes that there are terminological issues:

Three frustrating terminological problems were exposed, but we didn’t resolve how to correct them: “cultural group selection,” “meme,” and “Darwinian” are all good terms, historically justifiable and useful in context, but by now all are so burdened with legacies of ideological conflict that any use of them invites misbegotten “refutation” or dismissal. Should we abandon the terms in favor of emotionally inert replacements, or should we persist with them, always accompanying their use with a wreath of explanation? These are questions of diplomacy or pedagogical policy, not serious theoretical issues, but still, alas, unignorable.

These are the points of consensus that Dennett has identified:

1. We should be Darwinian about Darwinism; there are few if any bright lines between phenomena of cultural change for which cultural natural selection is clearly at work and phenomena of cultural change that are not at all Darwinian. The intermediate and mixed cases need not be marginal or degenerate, a fact nicely portrayed in Godfrey-Smith’s Darwinian Spaces.

2. Models must always “over-”simplify, and the existence of complications and even “counterexamples” relative to any model does not automatically show that the model isn’t valid when used with discretion. For instance, the absence of explicit treatment of SCM’s [Sperber, Claidière, Morin] “hetero-impacts” in BRH’s models “does not amount to a denial of its importance” (Godfrey-Smith). Grain level of modeling and explaining can vary appropriately depending on the questions being addressed.

3. The traditional idea that human culture advances primarily by “improvisational intelligence,” the contributions of insightful, intentional, comprehending individual minds, is largely mistaken. Just as plants and animals can be the beneficiaries of brilliant design enhancements that they cannot, and need not, understand, so we human beings enjoy culturally evolved competences that far outstrip our individual comprehension. Not only do we not need to “re-invent the wheel,” we do not need to appreciate or understand the design of many human institutions, technologies, and customs that nevertheless contribute to our welfare in various ways. Moreover (a point of agreement between Sperber and Boyd, for instance), the opacity of some cultural memes (their inscrutability to human comprehension) is often an enhancement to their fitness: “This opacity—which is a matter of degree, of course—is what makes social transmission so important. It plays, I believe, a crucial role in the acceptability of cultural traits: it is, in important ways easier to trust what you don’t fully understand and hence cannot properly evaluate on its own merits.” (Sperber)

4. The persistence of cultural features that are not fitness-enhancing, and may even be fitness-reducing, is to be expected in cultural evolution, and can have a variety of explanations.

On the last I assume that by fitness-enhancing, Dennett means the biological fitness of human individuals.

I have no problems with any of these. Dennett listed six new questions, which you can investigate for yourself.

Wednesday, November 12, 2014

Spot the helicopter

Hint: It's near the center, on a border between gold and dark and flying to the right. I wasn't quite quick enough to get it entirely in the gold, which is what I was shooting for.

FWIW, when I was shooting this I had in mind the opening sequence from Ghost in the Shell 2: Innocence. It's a gorgeous film though, alas, somewhat misogynist. Yes, I know I know, the women who are slaughtered at the end aren't really women; they're robots. But they look like women and that's how the lizard brain reads them. This film reeks of sexual anger directed at women. And it's also gorgeous, just gorgeous.

Things are complicated.

Myth-Logic: St. Christopher with the Head of a Dog

It seems that in Eastern Orthodox Christianity St. Christopher is sometimes depicted as having a dog's head. Jonathan Pageau has an interesting article about this. I won't attempt a summary or analysis, but here's a passage well into the article:

The relation of the foreign and marginal with excessive corporality, animality and disordered passions like cannibalism must be seen within a general traditional understanding of periphery. In a traditional view of the world, there is an analogy between personal and social periphery, both pictured in patristic terms as the garments of skin, those garments given to Adam and Eve which embody corporal existence. What appears at the edge of Man is analogous to what appears at the edge of the world both in spatial and temporal terms, so the barbarians, dog-headed men or other monsters on the spatial boundaries of civilization and the temporal end of civilization are akin to the death and animality which is the corporal spatial limit of an individual and the final temporal end of earthly life. The monsters as part of the garments of skin dwell on the edge of the world, and though they are dangerous, like Cerberus at the door of Hades, they also act as a kind of buffer between Man and the outer darkness. Just as our corporal bodies and its cycles are the source of our passions, they are also our “mortal shell” protecting us from death. It will therefore be by a more profound vision of the garments of skin across different ontological levels of fallen creation that we can make sense of St-Christopher[4].

St-Christopher in the Bible.

The relation of the Dog to periphery appears several places in the Bible. Dogs are of course an impure animal. They are seen licking the sores on Job’s skin[5]. They are excluded from the New Jerusalem[6]. They eat the body of the foreign queen Jezebel after she is thrown off the wall of the city[7]. The giant Goliath himself creates the St-Christopher dog/giant/foreigner analogy when he asks David: Am I a dog that you come at me with sticks?[8] The dog is used by Christ as a substitution for a foreigner when he tells the Samaritan that one should not give to dogs what is meant for the children[9]. The answer of the woman is also telling as she speaks of crumbs falling off the edge of the table, clearly marking the dog as the foreigner who is on the edge. Just these examples might be enough to explain St-Christopher symbolically, but there is still more.

At this point we go to stories of river crossings, not only Christopher, but various crossings in the Bible, Old and New Testaments, and then:

The New as the Remit for Ethical Criticism

As a reviewer of books she would often pan, Virginia Woolf thought one of the pleasures of reading contemporary novels was that they forced you to exercise your judgement. There was no received opinion about a book. You had to decide for yourself whether it was good. The reflection immediately poses an intriguing semantic puzzle; if, on reading a book, you enjoy it, then presumably it is good, at least as far as you are concerned. This is not something you have to “decide.” If you have to decide whether a book is good, does that mean you don’t know whether you enjoyed it or not, an odd state of affairs, or you don’t know whether your enjoyment or lack of enjoyment is an appropriate response?

This sounds rather complicated, yet we know perfectly well what Woolf is talking about. A new kind of book might offer pleasures we haven’t yet learned to enjoy and deny us pleasures we were expecting. Rather than fitting in with something we are long familiar with, it is asking us to change. And how many people are genuinely open to changing their taste? Why should they be? One of the curiosities of Joyce’s Ulysses is how many reviewers and intellectuals changed their positions on the book in the ten years following its publication. Many swung from hating it to admiring it—Jung comes to mind, and the influential Parisian reviewer Louis Gillet, who went from describing it as “indigestible” and “meaningless” to congratulating Joyce on having written the great masterpiece of his time. But many others turned from adulation to suspicion. Samuel Beckett went from believing that Joyce had brought the English language back to life to wondering whether actually he wasn’t simply pursuing the old error, as Beckett had come to see it, of imagining that language could ever evoke lived experience. Faced with something new, we may take a while to arrive at a settled response.

Yes.

That, incidentally, is one of the reasons I spent a couple of years investigating manga and anime: I had to make up my own mind about the material.

Tuesday, November 11, 2014

Co-Learning and the Fluid Future, some quick remarks on the #etmooc dialog

I just watched the #etmooc conversation, The Case of #etmooc:

and have a few quick remarks.

Modeling, Vygotsky, learning to learn

At one point Rhonda remarked: "...you sort of role-modeled how to do connected learning for us..." Others picked up the modeling motif from then on. As a kind of conceptual anchor, I’d like to link that to Vygotsky’s account of language learning. To be sure, he doesn’t talk of adults as modeling language for the child. Rather, he writes of the child as learning to use his own language to direct his behavior in the way that adults direct the child’s behavior through language.

He writes, in effect, of internalization. And internalization is a general mechanism. The aim of the so-called Socratic method is for the student to internalize the questioning-function that is, in effect, modeled by the teacher. When you’ve gotten the point, you no longer need Socrates to interrogate you. You can do it yourself.

And that’s also going on in one-on-one music instruction. The student needs to internalize the instructor’s ability to spot problems and provide exercises to work on them. Long after you’ve left formal lessons you still need to work on your technique, whether mere maintenance, or extending it into new areas.

At some point in the dialog Jeff used the phrase “learn to learn.” That’s crucial, ever more so in a world where skills obsolesce rapidly. Surely the best way of learning to learn is to internalize the capability of an active learner.

The Fluid Community

There was a fair amount of talk about the fluid nature of the #etmooc community. There seemed to have been a lot of dropouts (though there were probably a lot of invisible lurkers as well) and the remaining community is self-selected. Such are things. The interesting thing, though, is that the community persisted beyond the formal end of a course of instruction. This, of course, could happen because #etmooc floats on the open internet rather than existing in a closed learning management system, where access to online facilities is typically shut down at the end of the course. Someone also remarked that there were “so many entry points” and Alec remarked that it would be interesting to have an “ongoing precourse” so that people could enter at any time.

In other words the boundary of the course/community is fuzzy, fluid and open. What does that imply about future possibilities?

The economics of the transition from arranged marriage to love marriage in Indonesia

Gabriela Rubio of UC Merced, How Love Conquered Marriage: Theory and Evidence on the Disappearance of Arranged Marriages (PDF), abstract:

Using a large number of sources, this paper documents the sharp and continuous decline of arranged marriages (AM) around the world during the past century, and describes the factors associated with this transition. To understand these patterns, I construct and empirically test a model of marital choices that assumes that AM serve as a form of informal insurance for parents and children, whereas other forms of marriage do not. In this model, children accepting the AM will have access to insurance but might give up higher family income by constraining their geographic and social mobility. Children in love marriages (LM) are not geographically/socially constrained, so they can look for the partner with higher labor market returns, and they can have access to better remunerated occupations. The model predicts that arranged marriages disappear when the net benefits of the insurance arrangement decrease relative to the (unconstrained) returns outside of the social network. Using consumption and income panel data from the Indonesia Family Life Survey (IFLS), I show that consumption of AM households does not vary with household income (while consumption of LM households does), consistent with the model’s assumption that AM provides insurance. I then empirically test the main predictions of the model. I use the introduction of the Green Revolution (GR) in Indonesia as a quasi-experiment. First, I show that the GR increased the returns to schooling and lowered the variance of agricultural income. Then, I use a difference-in-difference identification strategy to show that cohorts exposed to the GR experienced a faster decline in AM as predicted by the theoretical framework. Second, I show the existence of increasing divorce rates among couples with AM as their insurance gains vanish. 
Finally, using the exogenous variation of the GR, I find that couples having an AM and exposed to the program were more likely to divorce, consistent with the hypothesis of declining relative gains of AM.

Monday, November 10, 2014

Apple HAS Become Big Brother

DUBLIN — It took nearly 30 years for “The Joshua Tree,” the 1987 album that was U2’s breakout ticket to megastardom, to reach 30 million people. “Songs of Innocence,” which was released on Sept. 9 as part of the unveiling of the iPhone 6, pulled that off in three weeks. Bono, the band’s leader, explained as much to the packed audience at the Web Summit here, saying that 100 million people had listened to a song or two and that 30 million people had listened to the whole album.

It was not without costs, even though it was given away. The album was pushed onto the playlists of some 500 million iTunes users, and Apple and U2 ended up in stockades on the web for what many consumers saw as an unwanted intrusion into their most personal territory — their music collection.

As a mushroom cloud of discontent erupted, Bono engaged in what sounded like contrition in a video posted on the band’s Facebook page as part of a Q. and A. with fans. One of them asked why U2 thought they could embed themselves in people’s phones without so much as a how-do-you-do.

What WERE they thinking? That they OWN us?

Back in 1984, in one of the best-known and best TV advertisements ever, Apple introduced the Macintosh by pitching itself as a rebellious upstart against IBM, the dictatorial megacorp.

Now who's the hegemonic megacorp?

Of course, it's not simply Apple that's at fault here. U2 is hardly an innocent bystander in this fiasco. Just why do they think they've got the right to push themselves down our web-enabled throats?
