Wednesday, December 31, 2014

Lee Quiñones interview in Vulture

Matthew Giles interviews Lee Quiñones in Vulture:

Of your work that you’ve created, which did you feel was the most important?

The "Howard the Duck" handball wall mural, which I created in 1978 and was the first of its kind. Basically that mural broke open the conversation of what this art movement was capable of and the trajectory it was pointed in. I felt that I had arrived as an artist at that point. "The Lion’s Den" mural was behind it. I loved the "Lion" mural more than Howard, because it was when I felt I brought the technique of spray-painting to the next level. Instead of outlining things in a cartoonish way, I was working with stories of light and depth and feel. Both were a neighborhood prescription.

When you say "neighborhood prescription," what does that mean?

The Lower East Side is an unsung hero itself. A lot of talents came out of there, but it is always at the back of the bus compared to the rest of the city. I felt the neighborhood was hurting and wanted something refreshing to look forward to.

An influence I wouldn't have expected:

As you were developing your talent, what other NYC pieces were you looking to for inspiration?

Jenny Holzer. Her writings on the wall were instrumental. In the 1980s, in NYC, there was an abundance of abandoned buildings and walls. The presence of all those empty buildings became almost normal to some people, but when you saw something painted on a wall as crude as the phrase "Broken Promises" or "Sense of Power Is Domination," it gave a whole new purpose to the climate and the actual buildings. That was riveting to me. Jenny Holzer makes me feel so illiterate and stupid when I read her stuff. I am a visual person, and when I am down and out in my work, I always look forward to her work. Her work is like she is throwing a huge party on the street that no one is ever late to.

On Banksy, Street Art, and Greed:

For the past decade, there has been so much attention focused on street art. What did you think of Banksy's NYC residency?

I love a lot of Banksy’s work. I was really busy when it was going on and I wasn't trying to get on that herd drive, but I saw some pieces later on and thought they were genius. They were hilarious and brought up some real issues. Giving away drawings for $6, he is having a laugh at the hypocrisy of how people are in a crazy rush to buy and own something. Me, me, me. Art is a powerful tool, and right now street art is more than ever economic and a tool for planting a flag when talking about displacement and replacement. When you see street art coming up in a neighborhood, you know that will be the next neighborhood.

It’s interesting about the owning of street art. In that HBO Banksy documentary, people were taking the pieces almost immediately as they were being "discovered." It’s the start of a nasty fire. It’s all about personal greed.

Tuesday, December 30, 2014

The fortunes of Shakespeare in a post-Theory world

David Womersley in Standpoint Magazine:

Although the fortunes of theory as a practice waned, its impact was lasting. In particular, critics who were not in thrall to theory nevertheless showed little appetite to revive the ethical criticism upon the rubble of which the theoreticians had erected their own, brief, period of authority. Post-theory, criticism of Shakespeare moved in three main directions. First, there was a turn to history, in the form of the "New Historicism". Historically-grounded readings of the plays might revive the flavour of the old ethical criticism, without being so vulnerable to the powerful corrosives which theory had used so destructively on that earlier school. Second, there was a revival of interest in theatre history, and in positioning Shakespeare within the dramatic archive. Third, there was a resurgence of interest in authorship studies, particularly in the phenomenon of collaborative composition. These developments all marked at once an advance and a retreat. They showed an impressive gain in various forms of technical power and accomplishment (historical contextualisation, early modern theatrical institutions, textual analysis of authorship). At the same time, however, they revealed the academy turning in on itself and retreating further from the possibility of addressing a general educated readership.

The contrasting social backdrop to these ultimately mandarin movements in Shakespeare criticism is the extraordinary phenomenon of worldwide attendance at performances of Shakespeare's plays. For theory, reliant as it was on a constructivist account of human nature and hostile to any idea of essence, the fact of Shakespeare's popularity was easily explained away as a simple consequence of the massive "Shakespeare Establishment". No doubt the entrenched position of Shakespeare in the school curriculum and the existence of so culturally potent an entity as the Royal Shakespeare Company both exert an influence, at least in Great Britain. But enthusiasm for Shakespeare is not confined to the West, and indeed flourishes in cultures where no "Shakespeare Establishment" exists. The recent critical preoccupations of the academy — historical explication, theatrical antiquarianism, and authorship studies — may of course yield important findings. But they will always be "second-order" findings. These critical modes cannot in their own terms find a way of addressing — let alone of explaining — the vast, primary fact of the enduring human appetite for Shakespeare's drama.

When it comes to explaining Shakespeare's enduring appeal, the predominant answer survives http://t.co/UXM0EcU8v3

— Arts & Letters Daily (@aldaily) December 30, 2014

42 Quarks: Getting from here to there

I've written 14 essays for 3 Quarks Daily over the past year. Now I've bundled them into a PDF, which you can download here:

Here's the introduction:

0. Introduction: here & there

Fourteen essays in a bit over a year. The first one appeared on Monday 16 December 2013 and the most recent one on Monday 15 December 2014. My basic routine was simple. During the week before publication I’d get an email from the editor, S. Abbas Raza, reminding me that I had an essay due on the coming Monday. I’d start thinking about it and by Friday or so I would begin writing. I’d finish up mid-day on Sunday, upload the essay, and tell Abbas I was good to go.

That was the basic routine, a skeleton. One time Abbas forgot to email us, but I was working on a piece anyhow. Another time I started writing a week ahead of time and sent through six drafts. Just variations on the basic procedure, which went like clockwork, albeit the clock was not quite mechanically perfect.

Not so what went on inside my head. That was irregular. Despite the fact that I’ve been blogging for a while now, and am used to producing frequent long-form posts, writing for 3 Quarks Daily is different. The audience is different.

My blog, New Savanna, is just that, MY blog. I write whatever I want to & post whatever photos. To some extent it’s an extension of my notebooks – in fact my note keeping has all but disappeared into my blogging. While New Savanna is fully public in the sense that anyone on the web can read it, I don’t treat it as a public activity. It’s more like a private salon. I assume I am writing for people who have been following me for a while.

3 Quarks Daily IS public, though public in a distinctly intellectual way. Since I appear there only once every four weeks I do not assume I am writing for an audience that follows me. I am writing for an audience that follows 3QD.

Of the things I know, what would, or should – a different slant, no? – interest the 3QD audience? Pondering that question makes writing for 3QD a bit different, a bit more strenuous than writing for New Savanna.

One way trip to Mars has 200,000 takers

From the NYTimes of December 8, 2014:

Where NASA-style flight plans are designed on the Apollo moonshot model of round-trip tickets, the “one” in Mars One means, starkly, one way. To make the project feasible and affordable, the founders say, there can be no coming back to Earth. Would-be Mars pilgrims must count on living, and dying, some 140 million miles from the splendid blue marble that all humans before them called home.

Nevertheless, enthusiasm for the Mars One scheme has been of middle-school proportions. Last year, the outfit announced that it was seeking potential colonists and that anybody over age 18 could apply, advanced degrees or no. Among the few stipulations: Candidates must be between 5-foot-2 and 6-foot-2, have a ready sense of humor and be “Olympians of tolerance.” More than 200,000 people from dozens of countries applied. Mars One managers have since whittled the pool to some 660 semifinalists.

Why go?

H/t 3 Quarks Daily.

The various reasons offered for sending humans to Mars, at a cost of billions if not hundreds of billions of dollars — “but less expensive than the war in Iraq!” insisted Andrew Rader, a Mars One candidate and expert in human spaceflight with a doctorate from M.I.T. — include elements both practical and profound, optimistic and dystopian. Ellen Stofan, NASA’s chief scientist, said that for all the success of robots like Curiosity, sending humans to the surface “may be the only way to prove life evolved on Mars and what the nature of it is.” And demonstrating that some form of life arose at least twice in our solar system would lend ballast to the argument that the universe teems with life. Humans will soon need more space and more resources than Earth can offer, Dr. Shostak said, adding, “If you want to have Homo sapiens for the long run, you have to move out somewhere.” Whatever hardships the Mars homesteaders endure, Mr. Lansdorp argued, may well improve life for those back on Earth. “We’re a species that explores and pushes boundaries,” he said. “By exploring our own planet, we’ve developed technology to make our life more comfortable. Mars is the next logical step, the boundary to push, to make us more developed still.”

Monday, December 29, 2014

Tires in a wall with paint

I'm thinking of taking a short break, just until the new year. Let the brain chillax. No guarantees, mind you, and I may post some flix, 'cause I've got thousands of them, it doesn't require that much thought. Took this one over the weekend when I was in Philadelphia. It's some new construction at the FDR SK8 Park.

Sunday, December 28, 2014

Materials for a New Mythology: 2015 here I come!

So far I’ve got:

- The Anthropocene and the end of the world

- The prospect of a permanent human presence in space: the Moon, asteroids, and Mars

- Human cultural evolution

- Graffiti

Let’s start from the last one, graffiti. It’s a transnational cultural language that arose only quite recently in human history. And it arose at about the time humans first set foot on the Moon. I figure that if an advanced alien civilization is watching us, trying to figure out whether or not we should be admitted to The Club, they’re going to use the state of graffiti and street art as a prime index.

Human cultural evolution is what pulled us, or pushed us, from the animal world. It’s been going on for centuries, even millennia. But not much longer. It’s only recently that cultural evolution has been recognized as such and that recognition has been fitful at best. These days there’s been a resurgence of interest, but that resurgence hasn’t quite gotten on to the idea that human culture has progressed, that it’s directional – though there is Robert Wright’s Nonzero.

Of course, I see graffiti in the light of cultural evolution, as the fulcrum on which we’ll lever the next era. And the next era will find us in space? I’m still pondering that one. Seriously pondering.

But it would make a kind of sense to go there on the cusp of the Anthropocene, after the end of the world, as Tim Morton would have it. The Anthropocene, of course, is a geological era named after the human presence on earth. That presence has now been so significant as to have affected earth’s trajectory at the geophysical level. That being so, perhaps it IS time we take to the stars, or at least the Moon, asteroids, Mars, and then the solar system!

That’s going to require one hell of a mythology to make it real. Looks like I’ve got my work cut out for me in the coming year.

* * * * *

I almost forgot! There is this: Tight Like This: A Tale of High Adventure in Ancient Nubia. Yes indeedy do! It's going to take lots of jivometric mind jolts.

Saturday, December 27, 2014

Slavoj Žižek, a Note

I've read almost nothing by the man. Yes, I understand that he's a major thinker in some pretty large intellectual circles. Alas, I can't help but regard him as a last gasp in a Continental intellectual tradition that peaked in the middle of the previous century. Just what's going to become of that tradition in this, the 21st century CE, I do not know. I suppose something will keep going on as a kind of alchemy astrology theosophical New Age critique add-on. But we need a more vital interpretive tradition.

Anyhow, Žižek was interviewed at the U. of Chicago by Kerry Chance some time after 2006 [there's no date on the interview, but Žižek mentions The Parallax View (2006) as his previous book]. Concerning the cognitive sciences:

. . . they should be taken seriously. They should not be dismissed as just another naive, naturalizing, positivist approach. The question should be seriously asked, how do they compel us to redefine the most basic notions of human dignity, freedom? That is to say, what we experience as dignity and freedom is it all just an illusion, as they put it in computer user terms, a user's illusion. . . .

The thing to do - and I'm not saying I did it, I'm saying I am trying to do it - is to take these sciences very seriously, and find a point in them where there is a need for an intervention of concepts developed by psychoanalysis. I think - I hope - that I isolated one such point. I noticed how, when they tried to account for consciousness, they all have to resort to almost always the same metaphor of this autopoesis, self-reflexive move, some kind of self-relating, self-referring closed circuit. They are only able to describe it metaphorically. What I claim is that this is what Freud meant by death drive and so on.

But it's not that we psychoanalysts know it and can teach the idiots. I think this is also good for us - and by us I mean, my gang of psychoanalytically oriented people. It compels us also to formulate our terminology, to purify our technology as it were.

I can't say that I find the cognitive sciences particularly interesting on human dignity and freedom. It's interesting that Žižek chooses this as a point of contact while saying nothing about what the cognitive sciences have to say about perceptual and cognitive mechanisms and structures.

But who's going to develop a discourse of freedom and dignity in the context of the computational sophistication of the cognitive sciences? What would that look like?

Friday, December 26, 2014

Friday Fotos: The Beach, threshold to a different world?

We're at the threshold of a new year, riding the tipping point into a new era. Beaches are thresholds between land and sea, and much else. This is the beach at the lower end of Liberty State Park in the Upper New York Bay on the threshold of the Hudson river.

Is Mars too expensive? Is that the main point?

For more than a century now, the fourth planet from the sun has drawn intense interest from those of us on the third. We viewed it, first, as a place where life and intelligence might flourish. The mistaken identification of artificial water channels on its surface in the late 19th century seemed to prove that they did. More recently, terrestrials have gazed at the arid, cratered, wind-swept landscape and seen a world worth traveling to. With increasingly intense longing, we’ve now begun to think of it as a newfound land that men and women can settle and colonize. It’s the only planet in the solar system—rocky, almost temperate, and relatively close—where something like that can be conceived of as remotely plausible.

Now that I've decided to think about spaceflight, I need to consider such matters:

But human beings won’t be going to Mars anytime soon, if ever. In June, a congressionally commissioned report by the National Research Council [Pathways to Exploration: Rationales and Approaches to a U.S. Program of Human Space Exploration], an arm of the National Academy of Sciences and the National Academy of Engineering, punctured any hope that with its current and anticipated level of funding NASA will get human beings anywhere within the vicinity of the red planet. To continue on a course for Mars without a sustained increase in the budget, the report said, “is to invite failure, disillusionment, and the loss of the longstanding international perception that human spaceflight is something the United States does best.”

The new report warns against making dates with Mars we cannot keep. It endorses a human mission to the red planet, but only mildly and without setting a firm timetable. Its “pathways” approach comprises intermediate missions, such as a return to the moon or a visit to an asteroid. No intermediate mission would be embarked upon without a budgetary commitment to complete it; each step would lead to the next. Each could conclude the human exploration of space if future Congresses and presidential administrations decide the technical and budgetary challenges for a flight to Mars are too steep.

Yes, going to Mars will be fiercely expensive. And though the US is still the richest nation on earth – though I've heard (but have no citation available) that China now has the world's largest economy by some measure – I don't think the US should fund it alone. If humankind is going to Mars, humankind will fund it. If it's worth doing at all, it's too important to become another point of honor in a hunt for nationalist glory.

But will the US fund such a venture if it isn't construed as a matter of national honor? Does that matter, given the club of billionaires who are actively funding space projects? What about other nations, as the US isn't the only one with designs on space travel?

Thursday, December 25, 2014

How I’m Going to Spend Christmas Day

I’m going out for dinner with friends, and new friends at that, so that will be good. But between now – 8:30AM – and then, how would I know?

I’ve got a number of writing projects I’m working on, some that are near completion: the compilation of my 3 Quarks Daily essays, my series on the nature and direction of cultural evolution, and my essay on bell rhythms and spirits, based on this working paper (this one’s going into a special issue of an academic journal). And I really should get cracking on my (contemplated) series about space travel, perhaps with an essay on whether or not outer space is real. Oh, I know it’s real, I’ve seen the pictures. But I mean really real, reality that you can smell and touch. Can we make it THAT real? How? And I’m itching to do another piece on graffiti, one arguing from historical precedent, that it’s the cultural tipping point to the next phase in human cultural evolution. I’ve started blogging my way through danah boyd’s It’s complicated; will I finish that? I really want to do more on cultural ranks and on cultural identity as well. What about the working paper on Porky in Wackyland or the one on The Greatest Man in Siam? And then there’s Goethe’s Faust, which I’ve just barely begun to read.

Yikes!

There’s no end of things I could do between now and a lovely evening. But at the moment I’m thinking about not doing any of them. I think I just want to mull things over. By all means, look those projects over, maybe make some edits here and there. But no push to finish any of them.

Maybe I’ll take a walk along the river. That’s always nice. Perhaps I’ll even take some photos. Though that’s getting dangerously close to doing something. I mean, if I take the photos, then I’m going to want to develop them. That’s not quite like writing a project to the end. But, no, better not do that.

We’ll see.

Happy Holiday!

Wednesday, December 24, 2014

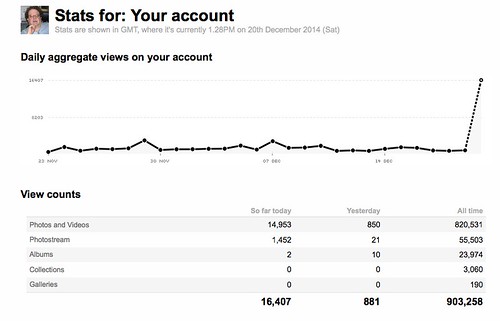

Flickr Spike, Why?

On Friday night, December 19, I had a big spike in traffic to my Flickr page:

I took that screen shot at 8:31 AM Saturday morning (the 20th). I’d had 16,407 views since 7 PM the night before. These days I’m running, on average, over 1000 views a day. So that’s way high, as you can see.

A Note on Time: Flickr time-stamps its viewing data with Greenwich Mean Time, which runs five hours ahead of Eastern Standard Time, which is what I’m on. So, when Flickr’s clock rolls over from 11:59 PM on December 19 to 12:00 AM on December 20, it’s going from 6:59 PM to 7:00 PM on December 19 for me.
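For anyone who wants to check that clock arithmetic, here is a minimal Python sketch. It assumes a fixed offset of five hours behind GMT for the East Coast in December; the names `EASTERN_STANDARD` and `gmt_to_eastern` are made up for illustration and have nothing to do with Flickr itself.

```python
from datetime import datetime, timedelta, timezone

# Assumption: a fixed UTC-5 offset stands in for East Coast time in December.
GMT = timezone.utc
EASTERN_STANDARD = timezone(timedelta(hours=-5), name="EST")


def gmt_to_eastern(gmt_moment: datetime) -> datetime:
    """Convert a GMT timestamp to the fixed-offset Eastern clock."""
    return gmt_moment.astimezone(EASTERN_STANDARD)


# The daily rollover: midnight GMT at the start of December 20, 2014.
rollover_gmt = datetime(2014, 12, 20, 0, 0, tzinfo=GMT)
rollover_local = gmt_to_eastern(rollover_gmt)

# The moment of the screen shot: 8:31 AM Eastern on Saturday the 20th.
screenshot_local = datetime(2014, 12, 20, 8, 31, tzinfo=EASTERN_STANDARD)
elapsed = screenshot_local - rollover_local

print(rollover_local.strftime("%Y-%m-%d %H:%M %Z"))  # 2014-12-19 19:00 EST
print(elapsed)  # 13:31:00
```

So the day's count on the 20th had been running for about thirteen and a half hours, local time, when the screen shot was taken.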

I’ve never had a run like that before. I believe the most single-day views I’ve had before this spike was somewhere between 7000 and 8000 the day I posted my photographs of the damage Hurricane Sandy left in my neighborhood of Jersey City.

For the Historical Record: Herbert Simon on Literary Criticism

Some time ago I was cruising through the blogosphere and came across a post at Mixing Memory entitled Cognitive Science and Literary Criticism (from 2004! almost the Jurassic era). The post summarized a decade-old article by Herbert Simon, AI pioneer and Nobel Laureate in economics. The article had appeared in The Stanford Humanities Review in a special issue on cognitive science and literary criticism. After Simon had his say, some 30+ folks from a number of disciplines, including Katherine Hayles, Norm Holland, Mark Turner, and Hubert Dreyfus, made comments.

Simon expresses his intentions thus:

. . . it is not my aspiration to create a new school of critical theory. Rather, I hope to cast some light on the relations among existing doctrines by reinterpreting them in a language that can lend to them a precision that they seldom seem to possess in contemporary literary discussion. Familiar terms like "meaning," "context," "evocation," "recognition," and "image" have gained a clarity from the researches of contemporary cognitive science that they did not have in earlier writing and still do not have in literary criticism and its theory. I will try to introduce some of that precision, divorced as far as possible from technicalities, into the discussion.

That will not be easy, for I will not be using the key terms in their ordinary senses, but in senses dependent upon a theoretical framework and formal language that I can set forth here only in broad outline. Focusing on the term "meaning" and how that term is interpreted in contemporary cognitive science will concentrate most of the technicalities and difficulties in one place. Much of the rest of the conversation can be carried on in ordinary language. If what I say sounds like common sense, so much the better.

I rather wish I could give Simon's article a strong endorsement. I like cognitive science and think literary scholars need to know about it. Simon is a brilliant man, and his Sciences of the Artificial deserves a place in the "general knowledge" portion of one's library. But the article has not had much influence among literary scholars pursuing "the cognitive turn" (BTW, who is responsible for that trope, the X turn?) and I am reluctant to lay the blame on the literary scholars. Chris, the proprietor of Mixing Memory, observes:

. . . Simon's conception of meaning isn't going to do anyone, much less literary critics, much good. If it were only that his description of the cognitive scientific view of meaning was an oversimplification, I think that would be OK. It's a short paper, and cognitive science has a lot to say about memory. However, I think oversimplicity is not the only problem. The paper's account of meaning is just wrong. It's wrong in how it describes memory (which is probably more case-based, more reconstructive, and much less encyclopedic than he would lead us to believe), and I think he pays far too little attention (none, in most cases) to things like inference, imagery, layers of meaning, and the role of creativity in extending meaning.

That may explain it.

Tuesday, December 23, 2014

Of drugs and medicine: When magic became science

Benjamin Breen writing in Aeon:

When I began my graduate studies in history, I decided to focus on the period when magic and alchemy morphed into modern science. I was especially fascinated by John Dee, the wizardly court astrologer to Queen Elizabeth I. Although Dee believed he could speak to angels, he was also one of the leading mathematicians and geographers of his era. Robert Boyle and Isaac Newton followed in Dee’s footsteps, conducting empirical investigations of nature alongside studies of Biblical prophecy and alchemical secrets. John Maynard Keynes had it right when he observed in 1946 that Newton was not the first scientist – he was the last of the magicians. Newton’s generation especially loved to search for ‘occult virtues’ – hidden phenomena latent in nature – and they found them in psychoactive drugs, along with a mystery that is still with us today.

These substances had far-reaching entanglements:

Psychoactive drugs thus stood at the centre of debates about imperialism, religion, and globalisation, as well as science. They still do. It’s not a coincidence that drug cartels are among the most successful multinational enterprises of the 21st century – or that a global crusade against drugs, both prescription and illicit, is one of the core tenets of our era’s most successful new religion, the Church of Scientology.

Though this might be stretching it a bit:

It would not be a stretch to say that the wave of stimulants, intoxicants and narcotics that followed in the wake of Christopher Columbus helped to create modernity as we know it. From coffee, tea and chocolate to Adderall, painkillers and cocaine, and alternative remedies such as homeopathy and ginseng, consuming drugs stands at the centre of what it is to be a modern consumer.

Note how old cookbooks have recipes all over the place, as though the preparation of mere food hadn't yet coalesced into a coherent category or procedures:

The cookbooks at Penn were a treasure trove. I found everything from ‘a Cake my Lady Oxford’s way’ that involved mixing cream with strong Spanish wine, to a salmon recipe featuring ‘water and salt and stale beer’. Most surprising, I found that recipes for medicinal drugs and foods were intermingled: a recipe for chicken pot pie circa 1700 appeared alongside ‘Snaill water, for a consumption’ that called for (you guessed it) a large amount of crushed snails, mixed with oddities such as ivory shavings and ‘red cows milk’, to be drunk every morning until the consumption, ie tuberculosis, subsided.

Gradisil, SF, and Sacred Hunger

Since I've begun re-thinking space travel I think it's time to dust off this old review of Adam Roberts' Gradisil. I published it in The Valve on July 11, 2006 and Adam replied on July 13. The interesting thing about Adam's reply is that he argued, contra a statement in my review, that "SF killed the space race, I fear."

There's some interesting discussion to both posts. I've appended one of my comments – the major one – to Adam's reply.

* * * * *

A few weeks ago I decided it was time to read a novel by our own Adam Roberts. So I went over to his website, looked around a bit, and decided to order his latest, Gradisil. It arrived in due course and, in due course, I read it and decided to engage Adam on it right here on The Valve.

But how to do that? It would be easy enough to write a review, said I to myself, but that would put Adam in a rhetorically awkward situation no matter how the review came out. So I opted for a different approach, and emailed him about it.

Here’s what I’m doing. First I’m going to say a little about the book, quoting from a description Adam’s posted at his web site. Then I’m going to write him a letter, here in public. I rather like the idea of addressing my remarks directly to him rather than to some generalized Other.

Others should feel free to join in the conversation.

Gradisil, the book

Adam described his basic intentions thus:

I wanted to write a ‘space opera’ in the Heinlein or Stephen Baxter mode, inflected through my particular slant on these things. I wanted to write a fairly expansive book, the background-narrative of which would be the birth of a nation, the first century or so of the colonisation of space. I wanted to model this on the birth of actual nations, rather than on some purely notional ‘shipload of colonists uploaded into a new place’. How have new countries actually been populated in Earth’s history? They’ve been populated bottom-up, not top-down: by ordinary people, usually poor people, shipping themselves into the country, displacing the aboriginal peoples (often violently), taking the land themselves. I don't know any SF novels that dramatise that process.

And so it is, a space opera, spanning four generations. The central figure, Gradisil, is in the third generation. She’s a political genius who forges the Uplands into (something rather like) a nation. She’s single-minded, manipulative, and neglects her family.

The Uplands? you ask? By the middle of this century it had become possible for private individuals not only to fly into earth orbit, but to set up residence there. [This due to a new electromagnetic mode of propulsion, which Adam describes in brief, without making a big deal of it. It’s not that kind of SF book.] While not cheap, it wasn’t so outrageously expensive that one had to be a Richard Branson or a Paul Allen to be able to pay the freight. With time, daring, and a bit of disposable cash, tens, then hundreds, and even thousands of people had established residence – some of them 24/7 365 – in earth orbit. This zone of habitation became known as the Uplands.

Nor does the space-opera intention preclude a touch of post-modern meta-tude. We learn that at least part of the narrative has been vetted by lawyers – so just how reliable can that narrative be? And there’s the slightly odd, but transparent spellings (e.g. “bak” for “back”), and the ligature binding “ng” into a single character. You can neither forget that this story is inscribed in language nor can you get lost in the intricacies of such inscription.

Extended Cognition and the Collective Mind

Consider this to be an addendum to yesterday's post What is Culture that it can Evolve? The Mesh, from Individuals to the Group.

I was cruising the web this morning (23 December 2014) and came across a post about extended cognition (Carl Pierer, Extended Cognition (Part 1) in 3 Quarks Daily).

Extended cognition is the notion that, to quote the post, “the tools and instruments used in cognitive processes are part of the cognitive process.” Hence cognition isn’t confined to the brain within one’s skull.

I’ve been aware of this notion for some time. I take it as self-evident that, for example, written language is, in some useful sense, a tool of cognition and that that is therefore an example of a cognitive process that is in some sense extended outside the skull. There are a lot of things we use in a way similar to written language. Obviously, we have numbers and the system of calculations and we have diagrams and drawings of all sorts. Just how far we can extend this process, I don’t know. To computers? Sure, why not?

But it’s never been immediately obvious to me that anything deep depends on getting the matter right, whatever it is. The issue seems to me to be one of boundary drawing, but it seems to me that the important question about cognition has always been: What happens inside the skull? When we use computers to simulate cognitive processes, that’s what we’re doing, trying to figure out what happens inside a person’s skull. I see no reason to change this view.

What happens to this conversation, however, in the context of cultural evolution as I have been discussing it? In that context I’ve been talking about a “collective mind” that is implemented in “the mesh” of individual communicating humans. How does that conception intersect with the thesis of extended cognition? Consider this passage from Pierer’s 3QD post:

On Clark and Chalmers' view, Wolfram and the computer create a “coupled system”:

All the components in the system play an active causal role, and they jointly govern behaviour in the same sort of way that cognition usually does. If we remove the external component the system's behavioural competence will drop, just as it would if we removed part of its brain. Our thesis is that this sort of coupled process counts equally well as a cognitive process, whether or not it is wholly in the head. (Clark & Chalmers 1998, p. 8)

Coupled systems are thus ubiquitous: the person using their smartphone to find the nearest bus stop, the pianist playing the piano to test their new piece of music as well as the writer jotting down ideas and modifying them in the process all constitute coupled systems.

The argument for extended cognition relies heavily on an assumption known as the parity principle:

If, as we confront some task, a part of the world functions as a process which, were it done in the head, we would have no hesitation in recognizing as part of the cognitive process, then that part of the world is (so we claim) part of the cognitive process.

This principle derives from the idea that it should not matter how exactly a cognitive or mental process is instantiated for it to count as cognitive or mental.

Monday, December 22, 2014

What is Culture that it can Evolve? The Mesh, from Individuals to the Group

Things are complicated, and there’s a sense in which I’ve jumped the gun in some of my earlier posts in this current series, which I’ve provisionally titled “Cultural Evolution: Literary History, Popular Music, Ontology, and Temporality.” So I want to do a little catch-up in this post before a final – I hope – post in which I return to the idea that cultural evolution is driven by the need to assuage anxiety, an idea I have from David Hays and which I introduced into this series in the post, Culture as a Force in History: the United States of the Blues.

What I would like to do in this post is to be more explicit about how we get from a collection of individuals to a culturally coherent group, from individual minds to a “collective” mind in the meshwork of individuals held together by a common culture. From there I will give a more detailed account of the coordinators in the cultural evolutionary process, for it is the coordinators that make it possible. Then we’ll be ready for an explicit account of pleasure and anxiety. I’ll conclude with some remarks on the need for cultural stability before we can have cultural evolution.

Note: Most of the rest of this post is edited from materials I’ve published previously, either in Beethoven’s Anvil (chapters 3 and 4) or my working paper, Cultural Evolution, Memes, and the Trouble with Dan Dennett.

Coupling, Music and the Mesh

How then do we go about constructing a physically coherent account of a collective mind? What I did in the second and third chapters of Beethoven’s Anvil was to argue that when a group of people is engaged in making music together, and/or dancing together, they are functioning as a collective mind. In this collective mind most of the signal pathways are inside brains and bodies and transmit signals electro-chemically. But some of the signal pathways exist between individuals, where the signals are transmitted as mechanical waves through the air. The nature of these two sets of signals – their speed, their content – must be such that the overall ensemble functions smoothly.

Note that when people are doing this, each gives up most of his or her individual freedom for the duration of the activity. If they are playing completely notated music, such as musicians in a symphony orchestra, then they agree to play what is written in the score. They also agree to follow the indications of the conductor and to coordinate their actions with their fellow musicians. If they are improvising jazz musicians, they aren’t committed to a specific score, though a given arrangement is likely to have some specified melodic lines and back up “riffs”, but they agree to the general conventions to be followed in each piece and they agree to be responsive to one another in the group.

This may seem obvious and self-evident, but it is this self-evident cooperation that allows us to treat the group as embodying a single coherent “mind.” There is only one source of “free” agency, and that is the group. Music is such a subtle business that, despite all this cooperation, the individuals still have quite a lot to keep them busy.

That’s the easy part of the argument. But I did something else, something more abstract and more subtle. I called on the neurobiology of Walter Freeman. Freeman uses the mathematics of complexity theory to study neural activity. That mathematics was originally developed by nineteenth century physicists to study thermodynamics.

How the Victorians Invented the Future

Before the beginning of the 19th century, the future was only rarely portrayed as a very different place from the present. The social order, like the natural order, was supposed to be static, with everything in its proper place: as it had been, so it would be. When Sir Isaac Newton thought about the future, he worried about the exact date of Armageddon, not about how his science might change the world. Even Enlightenment revolutionaries usually argued that what they were doing was restoring the proper order of things, not creating a new world order.

It was only around the beginning of the 1800s, as new attitudes towards progress, shaped by the relationship between technology and society, started coming together, that people started thinking about the future as a different place, or an undiscovered country – an idea that seems so familiar to us now that we often forget how peculiar it actually is.

The new technology of electricity seemed to be made for futuristic speculation. At exhibition halls in London, such as the Adelaide Gallery or the Royal Polytechnic Institution, early Victorians could marvel at electrical engines that promised to transform travel. Inventors boasted that ‘half a barrel of blue vitriol [copper sulphate] and a hogshead or two of water, would send a ship from New York to Liverpool’.

And they invented science fiction:

Just as they invented the future, the Victorians also invented the way we continue to talk about the future. Their prophets created stories about the world to come that blended technoscientific fact with fiction. When we listen to Elon Musk describing his hyperloop high-speed transportation system, or his plans to colonise Mars, we’re listening to a view of the future put together according to a Victorian rulebook. Built into this ‘futurism’ is the Victorian discovery that societies and their technologies evolve together: from this perspective, technology just is social progress.

H. G. Wells saw connections between technology and society:

Sunday, December 21, 2014

One tourist in three is a pilgrim, 330M annually

"Pilgrimage is not merely ancillary to the modern spiritual existence," writes Bruce Feiler in The New York Times:

In an age of doubt and shifting beliefs, people are no longer willing to blindly accept the beliefs of their ancestors. They are insisting instead on choosing their own beliefs. A pilgrimage can be a central part of this effort.

He goes on to assert that "religious identity is more fluid these days" and that taking a pilgrimage is one facet of choosing a religious identity rather than simply continuing in your parents' religious tradition. "Half of Americans have changed their religion at least once; one in four is in an interfaith marriage."

Suffering and deprivation seem to be important components of many pilgrimages. A pilgrimage is not a vacation. When you take a vacation, even to a foreign land, you intend to return to your station in life with a sense of renewal. When you go on a pilgrimage you intend to change your station in life. Changing your station in life is neither easy nor pleasant:

Often the food is bad, the accommodations uncomfortable, the weather unpleasant. Traveling in congested places, with little sleep and upset stomachs, is taxing...To go on a pilgrimage is to enter a heightened place where emotions soar, but sometimes dip.

To change your station in life you have to break things. Once things are broken, you can put them together. And if the things being broken are your ties and attachments, then the YOU that puts them together is a new self, or the core around which one can be built:

It’s that feeling of taking control over one’s life that most affected the pilgrims I met. So much of religion as it’s been practiced for centuries has been largely passive. People receive a faith from their parents; they are herded into institutions they have no role in choosing; they spend much of their spiritual lives sitting inactively in buildings being lectured at from on high.

A pilgrimage reverses all of that. At its core, it’s a gesture of action. In a world in which more and more things are artificial and ephemeral, a sacred journey gives the pilgrim the chance to experience something both physical and real. And it provides seekers with an opportunity they may never have had: to confront their doubts and decide for themselves what they really believe.

Saturday, December 20, 2014

Sampling the Space

This is a more or less neutral version of what came out of the camera. Since it's one of my shaky-cam photos there's no point in trying to develop the photo so it looks like what I saw when I took the picture. What I saw was lights at night. What I did was shake the camera on a long exposure. So this is what the camera saw, more or less:

These other photos sample the space of Photoshop possibilities. Actually, it's a somewhat smaller space than what Photoshop affords, but I don't know how to specify that smaller space except by presenting a sample of the images in it.

Christmas Music

There is the bag of music that exists in my mind as Christmas Music. All the tunes run together, some more frequently than others, in a big lump, like a ball of yarn:

Oh Little Town of Three Kings Roasting Frosty the Red-Nosed White Christmas Wonderland Deck the Halls Silent Night Feliz Merry Gentlemen Hark the Messiah Favorite Things Joy to the World.

What’s interesting is that it’s a fairly closed group of tunes and, for the most part, it only floats through my mind during the Christmas season. If for whatever reason one of these tunes should occur to me at some other time of year, then the rest will come trailing along. You pull on one thread from the ball, and you get all the yarn.

The seasonal nature of the music, of course, is not something I’ve invented. It’s a fact of life in America, and has been for some time. Not so long after Thanksgiving those songs start showing up on the radio, on television, and on the streets. And they attract near everything into the same ball of seasonal yarn.

There’s nothing else quite so, so pervasive. There are other holidays, ones important enough to get a day or two off from work: Thanksgiving, Memorial Day, Labor Day, 4th of July, Easter, and so forth. But Christmas is the only one that’s so pervasive.

I suppose that’s because it is somehow – there’s a history here and I’m sure you can find it on the web – tied up with gift giving. If you’re going to give gifts, then you have to have gifts to give. Most likely you’ll purchase those gifts. So Christmas is good for business.

It’s the only holiday like that. And so it gets a bad rap for being commercialized. It’s not a real holiday. It’s a fake holiday captive to capitalist greed and graft. The complaints too are now traditional. They’re part of the same ball of yarn. And why not?

It’s that time of year. In a few days we’ll all pull on the yarn and the ball will unravel. Relax. Because on January 1st you’re going to start gathering it up again.

Round and round and round.

Friday, December 19, 2014

Evolution in the Universe: It's Physics

And this:

From the standpoint of physics, there is one essential difference between living things and inanimate clumps of carbon atoms: The former tend to be much better at capturing energy from their environment and dissipating that energy as heat. Jeremy England, a 31-year-old assistant professor at the Massachusetts Institute of Technology, has derived a mathematical formula that he believes explains this capacity. The formula, based on established physics, indicates that when a group of atoms is driven by an external source of energy (like the sun or chemical fuel) and surrounded by a heat bath (like the ocean or atmosphere), it will often gradually restructure itself in order to dissipate increasingly more energy. This could mean that under certain conditions, matter inexorably acquires the key physical attribute associated with life.

“You start with a random clump of atoms, and if you shine light on it for long enough, it should not be so surprising that you get a plant,” England said.

England’s theory is meant to underlie, rather than replace, Darwin’s theory of evolution by natural selection, which provides a powerful description of life at the level of genes and populations.

“He is making me think that the distinction between living and nonliving matter is not sharp,” said Carl Franck, a biological physicist at Cornell University, in an email. “I’m particularly impressed by this notion when one considers systems as small as chemical circuits involving a few biomolecules.”

Thursday, December 18, 2014

Pedagogical Affordance and Entrepreneurial Action: A Framework for Thinking about Connected Learning

This working paper consists of a series of posts written for an online workshop in connected learning: Connected Courses. It was conducted in the Fall of 2014 and was sponsored by the DML Hub, as part of the MacArthur Foundation, and the University of California Humanities Research Institute. Course URL:

You can download the PDF at this link: https://www.academia.edu/9727654/Pedagogical_Affordance_and_Entrepreneurial_Action_A_Framework_for_Thinking_about_Connected_Learning

Contents

- Introduction: Pedagogical Affordance, Entrepreneurial Action?

- Vygotsky Tutorial

- Pedagogical Styles 1: Coaching and Midwifery

- Pedagogical Styles 2: Lectures, and beyond…

- Learning to Learn and Co-learning, NOW

- Co-learning Models: Meta-Cognition, Democratic Schools, and Entrepreneurs

- Pedagogical Styles 3: Courses I have taught (or taken)

- “VICTORY IS MINE!” – Active engagement with computing in connected learning

- Connected from Birth: You, Your Double, and Cyberspace

- Education, not just for the young

- Pedagogical Styles 4: Cognitive Demands and Interpersonal Dynamics

- Appendix: Interdisciplinarity is the Utopian Dream of an academic culture whose time is rapidly running out

Introduction: Pedagogical Affordance, Entrepreneurial Action?

With roots extending into the final decades of the previous millennium, online learning has begun blossoming at the beginning of this one. These efforts are fueled by the romance of invention and discovery, the idealism of making knowledge widely available at low or even no cost to students, and the practical desire to achieve dramatic reduction in the per unit cost of instruction. Online learning is here to stay.

This working paper began as a series of posts to an online workshop: Connected Courses: Active Co-Learning in Higher Ed. The workshop is interested in a particular kind of online learning, co-learning, where much of the learning takes place in interactions among the students rather than in the interaction between the instructor and students, one by one. It quickly became obvious, however, that co-learning has been most extensively explored in certain kinds of courses, courses involving media and writing where the emphasis is on students building presentations of one sort or another rather than on describing and analyzing things.

The question arises, then, of how far co-learning techniques can be extended to other kinds of courses. Could classical mechanics be taught in this way? What about the economics of the ante-bellum South? Ultimately, the only way we’ll know is to try and see what happens.

But such exploration is best undertaken in the context of a framework for thinking about how instruction and learning are organized. That’s what these posts are about.

Jeff Bezos – Man in Space

Henry Blodget interviews Jeff Bezos for Business Insider:

HB: In addition to everything else we’ve talked about, you make rockets. You want to go into space. This is a proclivity that you share with fellow billionaires such as Elon Musk and Richard Branson. First of all, what is it about space that captivates you? Second, what are you doing that’s different? Third, just talk about how hard it is when you saw Richard have an accident that has set everybody back a long time. Talk about space. What’s the vision there?

JB: First of all, and most fundamentally, you don’t get to choose your passions. Your passions choose you. For whatever reason, when I was 5 years old, Neil Armstrong stepped onto the moon. I was imprinted with this passion for space and for exploration. I think it’s important. I could come up with lots of rational reasons why it’s important, and I really do believe them.

I think it’s probably a survival skill that we’re curious and like to explore. Our ancestors, who were incurious and failed to explore, probably didn’t live as long as the ones who were looking over the next mountain range to see if there were more sources of food and better climates and so on and so on.

We are really evolved to be pioneers. For good reason. New worlds have a way of — you can’t predict how or why or when — but new worlds have a way of saving old worlds. That’s how it should be. We need the frontier. We need the people moving out into space.

My vision is, I want to see millions of people living and working in space. I think it’s important. I also just love it. I love change. I love technology. I love the engineers we have. They’re brilliant. We have about 350 people there. We’re building a vertical takeoff, vertical landing vehicle. It takes off like a regular rocket, and it lands on its tail like a Buck Rogers rocket.

The initial mission is space tourism. We’re also designing an orbital vehicle. We just won a contract to provide the new engines for the new version of the Atlas 5, which is the most successful launch vehicle in history. That’s a Boeing-Lockheed joint venture. That vehicle uses Russian engines, and because of all the things that are happening in Ukraine and so on, that supply of engines has become less certain, so they want to switch away from a Russian-made engine and they chose [us] to provide that engine. It’s a very exciting endeavor. Great team. They’re just doing a wonderful job, and it’s fun.

Wednesday, December 17, 2014

Rereading Goethe’s Faust 4: Going Through My Notes

As the subtitle suggests, this doesn’t have much of anything to do with Faust, but I’m throwing it here anyhow. On the one hand, it has to do with thinking about where I am in life as I embark on my 68th year – my 67th birthday was a couple of weeks ago (December 7). And that’s why I’m rereading the text. Faust changed direction late in (his fictional) life, and that’s what I’m doing, with graffiti as a vehicle for that.

Or at least trying to.

* * * * *

What I hadn’t realized – or at any rate, I have no memory of having once known it – is that Goethe worked on this text his whole adult life. He started it as a young man and finished it in his old age. Given that, I’d think a look at whatever manuscripts we’ve got would be fascinating. And one thing I’m thinking is that Faust’s change of life-direction surely must reflect Goethe’s own change in direction as he matured. Part II, after all, is his addition to the basic legend, which otherwise ends badly for Faust.

Why would I think that? Well, I’ve argued that Shakespeare’s late romances reflect a change in his outlook as he matured (in At the Edge of the Modern, or Why is Prospero Shakespeare's Greatest Creation?). If Shakespeare can do it, why then so could Goethe. And I’ve just read that Oedipus at Colonus is the product of Sophocles’ old age. Makes sense. This is a play, after all, in which Oedipus is valued for what he’s gone through in life, not tossed out in the wild as he was in Oedipus the King.

Sophocles: 497/496 to 406/405 BCE

Antigone: 441 BCE

Oedipus the King: 429 BCE

Oedipus at Colonus: 405-6? BCE

* * * * *

But what has this to do with me going through my notes this morning? I’ve been going through my files on cultural evolution and thinking about bringing my current set of posts – on the direction of cultural evolution – to a close. That will bring a certain closure to the work I’ve been doing throughout my career. Just what kind of closure, that’s tricky, and it’s a secondary matter at this point. I can get around to that in a later post.

For now, suffice it to say, it will bring closure. And a bit of redefinition, too. I’ve always thought of myself as a literary critic with a bunch of other interests. My degree, after all, is in English Lit, though I wrote this strange dissertation full of this other stuff from the cognitive sciences and comparative psychology – a lot of what has come to be called evolutionary psychology in the last two or three decades. It was a literary text, “Kubla Khan”, that set me on my initial lines of inquiry and, though my undergraduate degree is in Philosophy, for all practical purposes, literature became the focus of my undergraduate studies.

Literature could do that because, in those days (the 1960s), it was evolving toward a general study of human life and everything. Literary scholars had come to annex a pile of other disciplines and put them to work in service of literature – philosophy, history, psychology (mainly psychoanalysis), sociology/political science/economics (mostly Marxism of a humanistic sort, loosely conceived). If you wanted to make sense of it all – a Faustian quest which I certainly had as an undergraduate and even perhaps as a graduate student – then literature is what you ended up studying.

The Four-Fold Way through an Undergraduate Education

From my notes about a decade ago:

Though I haven’t really thought it through, it seems to me that a proper undergraduate education ought to give equal emphasis to four components:

1. Expression – Where we have the arts, music and dance, poetry, etc., and physical education.

2. General Education – Where students get a general view of how the world works, from physics through natural and human history.

3. Something Practical – Specialized education in something that would allow the student to earn a living.

4. Some Intellectual Specialization – Where the student can dig-in to something that particularly interests them.

On 1 and 4, some students would naturally gravitate toward an expressive discipline, e.g. fine arts painting. But they really ought to develop some specialized knowledge in an intellectual discipline, whether it’s one relevant to painting (like perceptual psychology) or not (such as microeconomics). And a student who naturally gravitates to a specialization in Medieval European history needs to develop expressive abilities as well.

And so on through all four.

I have the vague sense that this is descended from a conversation I had with David Hays some years before that.

Tuesday, December 16, 2014

Scapegoating the American Way

I’ve got a new post up at 3 Quarks Daily, Free-Floating Anxiety, Teens, and Security Theatre. It continues with the same theme I explored in my previous 3QD post, American Craziness: Where it Came from and Why It Won’t Work Anymore. That isn’t what I was planning when I began thinking about the post in the middle of last week, but that’s what popped up Sunday morning when I started working on it.

Over the previous week I’d been blogging about danah boyd’s study of teens and the internet, It’s Complicated: The Social Lives of Networked Teens (Yale UP 2014). It seemed to me that she was developing an argument that intersected with the argument about displaced aggression and anxiety that I have derived from Talcott Parsons (“Certain Primary Sources and Patterns of Aggression in the Social Structure of the Western World,” 1947). On the one hand, teens have more or less been “forced” online by irrational restrictions placed on their movements in the physical world (and by over-scheduling, a different phenomenon); on the other, adult fears about sexual predation online were exaggerated at the same time people weren’t sufficiently attentive to the real sources of sexual predation.

So, I decided to write a post that links boyd’s observations to mine on nationalist aggression and racism. In the current post I refine my statement of Parsons’ argument and use the American response to 9/11 as an example. On the one hand the nation has undertaken two destructive and expensive wars that have failed to achieve their announced object, the elimination of terrorism, and at the same time we’ve created the Transportation Security Administration to conduct largely pointless searches of passengers boarding aircraft.

Vacuum Activity and Scapegoating

These actions strike me as being akin to what ethologists call vacuum activity: “innate, fixed action patterns of animal behaviour that are performed in the absence of the external stimuli (releaser) that normally elicit them. This type of abnormal behaviour shows that a key stimulus is not always needed to produce an activity.” In this case the issue isn’t so much the lack of an appropriate stimulus but the inability, for some reason, to identify the source of one’s aggressive impulses while at the same time feeling the need to act on them. So one chooses a convenient or culturally targeted object whether or not it is causally appropriate.

My point of course is that the possibility of such irrational action has a basis in our biology. In a sense that’s just a specific version of the truism that all behavior has some kind of biological basis; we are, after all, biological beings. But the behavioral patterns I’m examining aren’t widely appreciated, perhaps because we would prefer to continue our irrational behavior rather than dealing with real issues. In the context of such denial it is useful to point out that, really, this is how animals (can) behave. It’s not at all farfetched.

Monday, December 15, 2014

Pedagogical Styles 4: Cognitive Demands and Interpersonal Dynamics

I figure this will be my last post before wrapping the series, writing an introduction, and putting them all together in a PDF.

The important point is that I’ve changed my sense of what pedagogical parameters are. I started out thinking that they would be affordances or demands of subject matter that constrain how that subject matter can be packaged into courses. That’s what I had my attention on in the three posts on pedagogical styles:

- Pedagogical Styles 1: Coaching and Midwifery; URL: http://new-savanna.blogspot.com/2014/11/pedagogical-styles-1-coaching-and.html

- Pedagogical Styles 2: Lectures, and beyond…; URL: http://new-savanna.blogspot.com/2014/11/pedagogical-styles-2-lectures-and-beyond.html

- Pedagogical Styles 3: Courses I have taught (or taken); URL: http://new-savanna.blogspot.com/2014/11/parameters-of-pedagogy-3-courses-i-have.html

Thus, whether or not co-teaching is a practical pedagogical regime depends on the nature of the subject matter. And to some extent that’s certainly true. But in thinking these things through I’ve realized that we have to treat the interpersonal dynamics of students and teacher as an independent source of variation.

So the situation is more complex than I had imagined it to be. Surprise, surprise!

Dependent and Independent Variables

Let’s put this in terms of social science research design. Courses are being taught and you have some theory about how that goes. To test that theory you need to identify independent variables and dependent variables. Your theory then tells you that, given a certain configuration of one or more independent variables you’ll find a certain configuration of one or more dependent variables.

What that means is that, if the subject matter has certain affordances X1 and Y3, then it will be taught, say, as a lecture course. Correspondingly, if the subject matter has affordances X2 and Y1 then it will be taught, say, as a one-on-one tutorial. You then examine an appropriate selection of actual courses and see if that’s how things fall out.
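For readers who like to see such a design spelled out, here is a minimal sketch of that check in Python. Only the labels X1, Y3, X2, and Y1 and the two formats come from the paragraph above; the lookup table, the function name `check_theory`, and the sample of courses are all invented for illustration, so treat this as a toy under those assumptions rather than as an actual study design.

```python
# Hypothetical theory: each configuration of subject-matter affordances
# (the independent variables) predicts a teaching format (the dependent variable).
PREDICTED_FORMAT = {
    frozenset({"X1", "Y3"}): "lecture",
    frozenset({"X2", "Y1"}): "tutorial",
}


def check_theory(courses):
    """Count (hits, misses, uncovered) over (affordances, observed_format) pairs."""
    hits = misses = uncovered = 0
    for affordances, observed in courses:
        predicted = PREDICTED_FORMAT.get(frozenset(affordances))
        if predicted is None:
            uncovered += 1  # the theory makes no prediction for this configuration
        elif predicted == observed:
            hits += 1
        else:
            misses += 1
    return hits, misses, uncovered


# Invented sample of courses, purely for illustration.
SAMPLE_COURSES = [
    ({"X1", "Y3"}, "lecture"),
    ({"X2", "Y1"}, "tutorial"),
    ({"X1", "Y3"}, "tutorial"),  # same affordances, different format
    ({"X3", "Y2"}, "seminar"),   # a configuration the theory says nothing about
]

print(check_theory(SAMPLE_COURSES))
```

A miss like the third course, where the same affordances turn up in a different format, is the kind of case that a theory built on subject-matter affordances alone cannot account for.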

What I’ve realized, though, is that cognitive affordances and interpersonal dynamics are, to some extent, independent of one another. This means that whatever the cognitive demands of a particular subject matter may be, one can independently have different conceptions of the proper relationship between teacher and student and student and student.

This independence was there in that initial post on coaching and midwifery. Learning how to play a musical instrument is very different from learning philosophy. Both can be taught one-on-one through coaching. But both can be taught one-to-many through lecture.

It should be obvious that philosophy can be taught one-to-many through lecture, because, after all, that’s how many philosophy courses are in fact taught. Very few philosophy students have their own personal Socrates, though advanced graduate training at its best may be a bit like that. Similarly, instrumental learning can be taught one-to-many through lecture, though this may not be so obvious. I’ve never actually attended a lecture of that sort. But I’ve watched master classes, where a handful of students and one teacher went through their paces on stage. And the mass of music lessons online at, for example, YouTube operates like this.

So, it can be done. Still, the one-on-one philosophy tutorial is very different from a one-to-many philosophy lecture, and the same with learning a musical instrument. I note further that lecture courses in philosophy are generally accompanied by smaller discussion sections and also have the option of individual tutoring during office hours. Thus the actual instruction given is mixed-mode.

What this means is that I’m not going to be able to write a nice neat “wrap-up” post. Nor, for that matter, had I intended to do so. But I had intended to argue something like, “well, we’ve got these three or four distinct affordances and florg and wrap seem amenable to co-learning while krink and slam resist it.”

I’m not going to be able to do that. But, having stated the issue, I will play around just a bit more.

Cultural Beings, the Ontology of Culture, and a Return to Books and Blues

I haven't forgotten my on-going series of posts on the direction of cultural evolution; you know, the one that started with Matt Jockers' Macroanalysis? But I've been busy with other things. Here's another post to add to that pile. I’m not yet burned out on culture, but lordy lordy I’m gettin’ there. But there’s a few more ideas I’ve got to get out there before I can hang up these particular shoes. If only for a while.

* * * * *

What do I mean by cultural beings? To be honest, I’m not quite sure. Let’s start by being conservative about it – though just what “conservative” means amid this kind of intellectual craziness is a curious question – let’s say that novels, like those Jockers considered, are cultural beings. So are musical performances, like those driving American culture; they’re also cultural beings. Cultural beings are things like THAT, but note that THAT ranges over culture in general and not merely so-called high culture. After all, most of Jockers’ novels and most of those musical performances are not high cultural phenomena. Many are distinctly low and vulgar, while others are merely middlebrow.

I am using “cultural being” as a term of art. It designates not merely the cultural artifact, whether it is a long narrative imprinted in a codex, a musical composition inscribed on score paper, or even a performance merely floating in the air and then gone forever, except for memories of it. Those physical things are just packages or envelopes, other terms of art I’m hereby proposing. And those packages or envelopes “contain” coordinators, the cultural analog to biological genes.

When we read texts or listen to (even participate in) performances, the coordinator packages elicit phantasms in the mind/brain. It is the phantasm that gives pleasure, and so leads to a desire for repetition, or not, in which case the package that elicited it is forgotten. Those phantasms belong to cultural beings as well. If you will, the package of coordinators is the body of a cultural being while the phantasm is its soul.

The Ontology of Culture

When I talk about the ontology of culture, then, I mean these entities and the relations between them: cultural beings, packages or envelopes, coordinators, and phantasms. The relations between them are complex and subtle and I don’t pretend to grasp them, though I’ve been writing and thinking about them at least since my book on music, Beethoven’s Anvil, if not longer.

The overall relationship among them, however, is given by the evolutionary dynamic of blind variation and selective retention:

The evolution of cultural beings proceeds by blind variation among coordinators and selective retention of phantasms.

But what does it mean to retain a phantasm? Phantasms are (collective) mental events. They come and they go. How can they be retained?

They can’t. But they can be remembered and if the memory is compelling, one can re-create the phantasm. How do you do that? You re-experience the package of coordinators that gave rise to the phantasm in the first place. And so we have this modified formulation:

The evolution of cultural beings proceeds by blind variation among coordinators and selective retention of packages or envelopes.

Will that work? Will it do the job? I don’t know. I just thought it up.

Let us remember, however, that phantasms are the cultural analog to the biological phenotype. And what is retained in biological evolution is not the individual phenotypes. They all die and the matter of which they were composed rots. What’s retained is the phenotypic scheme, the Bauplan that emerges from a developmental process regulated by the genotype.

With that in mind, let’s move on.

Sunday, December 14, 2014

Saturday, December 13, 2014

Rereading Goethe’s Faust 3: Echoes of Fantasia and Mysteries of the Cosmos

What is this knowledge that Faust seeks?

Still on reset. The quick read-through that I decided upon last post has yet to happen, though I’ve gotten a bit further into the text, and there are hints that something like a quick read might just change my relationship to the text.

We’ll see.

Still, I’ve got some things to say.

Continuing from last time, I raised the question of just what is going on that Goethe needs to frame his story with a conversation between the Lord and Mephistopheles, a device he took from the Book of Job – something his audience surely would recognize. That connection alone tells us this is to be no ordinary story. Let me quote a passage from an old essay I wrote about Disney’s Fantasia:

In 1976 Edward Mendelson published an article on “Encyclopedic Narrative: From Dante to Pynchon” (MLN 91, 1267-1275). It was an attempt to define a genre whose members include Dante’s Divine Comedy, Rabelais’ Gargantua and Pantagruel, Cervantes’ Don Quixote, Goethe’s Faust, Melville’s Moby Dick, Joyce’s Ulysses, and Pynchon’s Gravity’s Rainbow. Somewhat later Franco Moretti was thinking about “monuments,” “sacred texts,” “world texts,” texts he wrote about in Modern Epic (Verso 1996). He’d come upon Mendelson’s article, saw a kinship with his project, and so added Wagner’s Ring of the Nibelung (note, a musical as well as a narrative work), Marquez’s One Hundred Years of Solitude, and a few others to the list. It is in this company that I propose to place Fantasia.

There we have it, in the middle of that paragraph; Faust is an “encyclopedic narrative” in Mendelson’s terms, a “world text” in Moretti’s. It seeks to tell of, to embrace, the whole of the cosmos, and that is a very strange thing to attempt on any terms.

And that ambition is why, I would imagine, Goethe did not attempt to tell this story in novel form. As a formal device, novels are grounded in history, in the flow of events in real time; and furthermore, in the flow of events as seen by a particular narrator. Notice that the oldest novel on Mendelson’s list is Moby Dick, written a few decades after Faust. It’s as though the requisite novelistic technique didn’t exist in Goethe’s time. And, considering all that Melville had to invent or at least harness to novelistic use (e.g. the tedium of naturalistic cataloguing and exposition), it just barely existed in his time – which wasn’t so far from Goethe’s, though the two texts seem – at the moment at least – a universe apart in sensibility.

So, given his subject, his ambition – he was to work on this text for most of his life – Goethe couldn’t write a novel. He had to choose another form. Had film been available to him, that’s probably what he would have chosen. It would certainly have appealed to his polymath’s sensibilities. Instead, he chose the closest thing he could get to film, the theatre. And he opens his story – once the preliminary throat clearing [Jester, Manager, Poet] is over – with a conversation between the Lord and Mephistopheles from a place long ago far away around the corner in the future. They, or at least the Lord, are above it all, which is where you need to be to present the cosmos.

* * * * *

Which brings us to Fantasia, another of these epics that aims to present IT ALL. No doubt this juxtaposition of a great literary work, one central to The Western Canon! with a feature-length cartoon for kids of all ages, no doubt that’s a bit jarring. I can’t help that. Uncle Walt would have taken it in stride, and so, perhaps, would Cousin Johann.
