Stephen Wolfram has written a very interesting account of how ChatGPT works, “What Is ChatGPT Doing … and Why Does It Work?” Toward the end he discusses “Meaning Space and Semantic Laws of Motion,” which is close to what I’ve been thinking about in my current work on ChatGPT’s ability to tell stories. Here he talks of trajectories “in linguistic feature space”:

> We discussed above that inside ChatGPT any piece of text is effectively represented by an array of numbers that we can think of as coordinates of a point in some kind of “linguistic feature space”. So when ChatGPT continues a piece of text this corresponds to tracing out a trajectory in linguistic feature space. But now we can ask what makes this trajectory correspond to text we consider meaningful. And might there perhaps be some kind of “semantic laws of motion” that define—or at least constrain—how points in linguistic feature space can move around while preserving “meaningfulness”?

As ChatGPT tells them, stories consist of a sequence of sentences. Those sentences are ordered by a story trajectory. The particular stories I’ve been working with follow a trajectory that seems to have five segments: Donné, Disturb, Plan, Enact, and Celebrate (Benzon 2023). But that’s an aside. Let’s return to Wolfram. Later, after presenting visualizations of words arrayed in “semantic space,” Wolfram observes, “OK, so it’s at least plausible that we can think of this feature space as placing ‘words nearby in meaning’ close in this space.”

Yes, it is. In particular, he asks what the “fundamental ‘ontology’ suitable for a general symbolic discourse language” might be. That’s what this post is about.

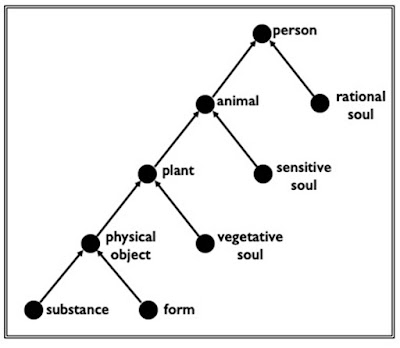

That fundamental ontology has a name, the Great Chain of Being (cf. Lovejoy 1936), though it has rarely been discussed under that rubric in linguistics and related disciplines. This is what a sketch of it looks like:

I have used the term “assignment” for the relationship being specified on the arcs (Benzon 1985, 2018).

Read the diagram from the bottom. A physical object consists of an assignment between a substance and a form. For a rock the substance is mineral and the form can be almost anything. For a cube of sugar the substance is sugar granules and the form is, obviously, a cube. A plant consists of an assignment between a physical object and a vegetative soul (to use Aristotle’s terminology from De Anima). That is to say, plants can have any of the attributes characteristic of physical objects (visible form, characteristic textures, taste, odor, and so forth), but they also have attributes applicable only to living things: they grow, change form, and die. Animals can have the attributes characteristic of plants, plus new ones: they can move, hunt, sleep, see, hear, smell, etc. With humans you add still more attributes and capabilities.

Think about it for a minute or two. What kinds of verbs require humans as agents? Verbs for a whole range of mental and linguistic processes, no? Animals are not appropriate agents for such verbs, at least not in the commonest usages: animals don’t think, dream, tell stories, ask questions, etc. But animals can walk, sleep, smell, see, feel pain, etc., and so can humans. The diagram is about capabilities and affordances, which are inherited up the diagram. Objects have weight, and so do plants, animals, and humans. But only humans can speak. Once you tease out these consequences, it becomes apparent that assignment structure has wide-ranging semantic implications.
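To make the inheritance concrete, here is a minimal Python sketch, assuming a toy vocabulary of my own choosing (the level names and capability lists are illustrative, not a worked-out lexicon). Each level is assigned onto the one below it and adds new capabilities; a level has everything its base has, so a verb suits an agent only if the agent's level, or some level beneath it, supplies that capability.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Level:
    """One node in the chain: an assignment of new capabilities onto a base level."""
    name: str
    base: Optional[Level]   # the level this one is assigned onto (None at the bottom)
    adds: frozenset[str]    # capabilities contributed at this level

    def capabilities(self) -> frozenset[str]:
        # Inherited up the chain: everything the base has, plus what this level adds.
        inherited = self.base.capabilities() if self.base is not None else frozenset()
        return inherited | self.adds


# Illustrative capability lists (assumed for this sketch, not exhaustive).
OBJECT = Level("physical object", None, frozenset({"have weight", "have color", "have texture"}))
PLANT = Level("plant", OBJECT, frozenset({"grow", "change form", "die"}))
ANIMAL = Level("animal", PLANT, frozenset({"walk", "sleep", "see", "smell", "feel pain"}))
HUMAN = Level("human", ANIMAL, frozenset({"think", "dream", "speak", "tell stories", "ask questions"}))


def can(agent: Level, verb: str) -> bool:
    """True if `verb` names a capability available at the agent's level of the chain."""
    return verb in agent.capabilities()


if __name__ == "__main__":
    for agent in (OBJECT, PLANT, ANIMAL, HUMAN):
        print(agent.name, "| weight:", can(agent, "have weight"),
              "| sleep:", can(agent, "sleep"), "| speak:", can(agent, "speak"))
```

Running it shows weight available at every level, sleep only from animals up, and speech only for humans, the pattern described above.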

When someone makes a statement that violates assignment structure, philosophers speak of category mistakes (Sommers 1963). Noam Chomsky’s most famous sentence, “Colorless green ideas sleep furiously,” contains two category mistakes and a contradiction. Ideas are not the kind of thing that can sensibly be said to sleep or to have color, and “colorless green” is a contradiction. Wolfram offered his own collection of category mistakes packaged as a sentence: “Inquisitive electrons eat blue theories for fish.” Electrons can neither be inquisitive nor eat anything, and theories, like ideas (of which they are a type), cannot have color. Nonetheless, ChatGPT is able to tell a story about them, which I have appended to this post.
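The same inheritance makes category mistakes mechanically checkable, at least in principle. The sketch below is a hypothetical illustration built on a tiny hand-made lexicon: each word maps to the capability it presupposes, each capability to the lowest level of the chain where it appears, and abstractions such as ideas and theories are placed outside the physical chain altogether. Counting by capability rather than by word, it finds two category mistakes and one contradiction in Chomsky’s sentence (“furiously” is left out of the toy lexicon).

```python
from __future__ import annotations

from typing import Optional

# Rank of each level in the chain, bottom to top; higher levels inherit all lower capabilities.
RANK = {"physical object": 0, "plant": 1, "animal": 2, "human": 3}

# Hypothetical lexicon: the level each noun denotes. None marks abstractions,
# which sit outside the physical chain in this sketch.
NOUN_LEVEL: dict[str, Optional[str]] = {"idea": None, "theory": None, "electron": "physical object"}

# Hypothetical lexicon: the capability each predicate word presupposes of its subject.
WORD_CAPABILITY = {
    "colorless": "have color", "green": "have color", "blue": "have color",
    "sleep": "sleep", "eat": "eat", "inquisitive": "think",
}

# Lowest level of the chain at which each capability appears.
CAPABILITY_LEVEL = {"have color": "physical object", "sleep": "animal", "eat": "animal", "think": "human"}

# Word pairs that contradict each other no matter what they are predicated of.
CONTRADICTIONS = {frozenset({"colorless", "green"})}


def category_mistakes(noun: str, words: list[str]) -> set[str]:
    """Capabilities presupposed by `words` that the thing denoted by `noun` lacks."""
    level = NOUN_LEVEL[noun]
    mistakes = set()
    for word in words:
        capability = WORD_CAPABILITY[word]
        if level is None or RANK[level] < RANK[CAPABILITY_LEVEL[capability]]:
            mistakes.add(capability)
    return mistakes


def contradictions(words: list[str]) -> set[frozenset[str]]:
    """Pairs of words in `words` that are listed as mutually contradictory."""
    pairs = {frozenset({a, b}) for a in words for b in words if a != b}
    return pairs & CONTRADICTIONS


if __name__ == "__main__":
    # "Colorless green ideas sleep furiously"
    print(category_mistakes("idea", ["colorless", "green", "sleep"]))
    print(contradictions(["colorless", "green", "sleep"]))
    # "Inquisitive electrons eat blue theories for fish."
    print(category_mistakes("electron", ["inquisitive", "eat"]))
    print(category_mistakes("theory", ["blue"]))
```

A real lexicon would need far more than one capability per word, but the check itself is just a comparison of positions in the chain.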

Now consider this diagram:

It has a similar form, but is something I sketched out for the purely mechanical world of manufacturing. As in the previous diagram, the relationship between the nodes is that of assignment.

Again reading from the bottom, an object consists of an assignment between a material (comparable to substance in the previous diagram), a shape (comparable to form), and a surface (which would probably be appropriate to the previous diagram as well). Shape is the most complex of these aspects. The shape will have components, such as edges and vertices or even component shapes. The substance of the part is the stuff of which the object is made; it has properties of mass, density, ductility, heat conductivity, etc. Finally, the surface must be considered separately from substance and shape because different types of process apply to it. The surface may be painted or plated, and/or ground to set specifications. None of this affects the shape or the nature of the substance.
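One way to see why the three aspects are kept apart is to model a part as a triple and let each kind of process touch only its own slot. This is a minimal Python sketch under my own assumptions (the class names, the single density property, and the `finish_surface` operation are all hypothetical): a surface process returns a new part whose shape and material are unchanged.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Material:
    name: str
    density_g_per_cm3: float   # stands in for mass, ductility, heat conductivity, etc.


@dataclass(frozen=True)
class Shape:
    name: str
    components: tuple[str, ...] = ()   # edges, vertices, or component shapes


@dataclass(frozen=True)
class Surface:
    finish: str = "as machined"


@dataclass(frozen=True)
class Part:
    """A primitive part: an assignment of a material, a shape, and a surface."""
    material: Material
    shape: Shape
    surface: Surface


def finish_surface(part: Part, process: str) -> Part:
    # A surface process (painting, plating, grinding): only the surface slot changes.
    return replace(part, surface=Surface(finish=process))


if __name__ == "__main__":
    bracket = Part(Material("aluminum", 2.70),
                   Shape("L-bracket", ("edge", "edge", "vertex")),
                   Surface())
    painted = finish_surface(bracket, "painted red")
    print(painted.surface.finish, painted.shape == bracket.shape, painted.material == bracket.material)
```

Because the parts are immutable, the original bracket is untouched and the painted one differs from it only in its surface.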

We can continue on up. An assembly has a different ontological structure than a primitive part, which is to say that an assembly is not merely a complex part. There is more to it. An assembly is an assignment between a part and a connectivity structure. To think of an assembly as a part, first imagine shrinking an envelope around the assembly. The resulting shape/surface/substance triple is the assembly as part. Its shape and surface might be quite complex (think of an automobile engine as an assembly which is part of the automobile), and its substance heterogeneous (e.g. rubber, plastic, three kinds of metal, etc.). This part is, of course, a complex part. As such it has components, the simpler (perhaps even primitive) parts that make it up. Simple objects do not have components, though their shapes do.
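The envelope construction can also be sketched in code. In this hypothetical Python model (names and fields are my own assumptions), an assembly pairs a set of parts with a connectivity structure, and `as_part` shrink-wraps it into a complex part whose substance is the union of its parts’ materials and whose components are those parts; primitive parts have no components.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    name: str
    materials: frozenset[str]           # one material for a primitive part, possibly many for a complex one
    shape: str
    surface: str
    components: tuple[Part, ...] = ()   # empty for primitive parts


@dataclass(frozen=True)
class Assembly:
    """An assignment of parts to a connectivity structure."""
    name: str
    parts: tuple[Part, ...]
    connections: frozenset[tuple[str, str]]   # pairs of part names that are joined

    def __post_init__(self) -> None:
        # The connectivity structure may only mention parts that belong to the assembly.
        names = {p.name for p in self.parts}
        for a, b in self.connections:
            if a not in names or b not in names:
                raise ValueError(f"connection {a!r}-{b!r} refers to an unknown part")

    def as_part(self) -> Part:
        # Shrink an envelope around the assembly: the result is a complex part with
        # heterogeneous substance whose components are the assembly's parts.
        materials = frozenset().union(*(p.materials for p in self.parts))
        return Part(self.name, materials, shape=f"envelope of {self.name}",
                    surface="composite", components=self.parts)


if __name__ == "__main__":
    block = Part("block", frozenset({"cast iron"}), "block", "machined")
    hose = Part("hose", frozenset({"rubber"}), "tube", "smooth")
    cover = Part("cover", frozenset({"plastic"}), "shell", "molded")
    engine = Assembly("engine", (block, hose, cover),
                      frozenset({("block", "hose"), ("block", "cover")}))
    engine_as_part = engine.as_part()
    print(sorted(engine_as_part.materials), len(engine_as_part.components))
```

Note that the connectivity structure is dropped when the envelope is drawn, which is exactly why an assembly is more than a complex part.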

We can keep moving up. A mechanism is an assembly with articulated parts, thus allowing them to move. Add a source of power to the mechanism and you have an engine. Over there to the left we have a computer conceived of as an assembly with a program. That is no doubt way too simplified, but at this level of conceptual resolution it may be satisfactory.
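The levels above the assembly can likewise be read as further assignments. Here is a hypothetical Python classifier, assuming that an assembly records whether each joint is articulated and may optionally carry a power source or a program; it is every bit as simplified as the diagram (a programmed mechanism, for instance, is simply called a computer).

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Joint:
    a: str
    b: str
    articulated: bool   # True if the joint lets the two parts move relative to each other


@dataclass(frozen=True)
class Assembly:
    parts: tuple[str, ...]
    joints: tuple[Joint, ...]
    power_source: Optional[str] = None   # assigned to a mechanism, yields an engine
    program: Optional[str] = None        # assigned to an assembly, yields a computer


def classify(x: Assembly) -> str:
    """Name the highest level this assembly reaches in the (simplified) chain."""
    if x.program is not None:
        return "computer"          # assembly + program
    is_mechanism = any(j.articulated for j in x.joints)
    if is_mechanism and x.power_source is not None:
        return "engine"            # mechanism + power source
    if is_mechanism:
        return "mechanism"         # assembly with articulated parts
    return "assembly"


if __name__ == "__main__":
    examples = [
        Assembly(("plate", "bracket"), (Joint("plate", "bracket", False),)),
        Assembly(("door", "frame"), (Joint("door", "frame", True),)),
        Assembly(("crank", "piston"), (Joint("crank", "piston", True),), power_source="fuel"),
        Assembly(("board", "case"), (Joint("board", "case", False),), program="firmware"),
    ]
    for ex in examples:
        print(ex.parts, "->", classify(ex))
```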

What’s important about these diagrams is the relationship they establish between the objects in them: assignment. The ontological structure Wolfram is looking for consists of assignment structure. It warrants further exploration.

References

Benzon, William L. (1985) Ontology in Knowledge Representation for CIM. Computer Integrated Manufacturing Program, Center for Manufacturing and Technology Transfer, Rensselaer Polytechnic Institute, Doc. # CIMMMW85TR034. https://www.academia.edu/28723042/Ontology_of_Common_Sense.

Benzon, William L. (2018) Ontology in Cognition: The Assignment Relation and the Great Chain of Being. Working Paper, 5 pp. https://www.academia.edu/37754574/Ontology_in_Cognition_The_Assignment_Relation_and_the_Great_Chain_of_Being.

Benzon, William L. (2023) ChatGPT intimates a tantalizing future; its core LLM is organized on multiple levels; and it has broken the idea of thinking. Version 3. Working Paper, Feb. 6, 2023, 25 pp. https://www.academia.edu/95608526/ChatGPT_intimates_a_tantalizing_future_its_core_LLM_is_organized_on_multiple_levels_and_it_has_broken_the_idea_of_thinking_Version_3

Lovejoy, Arthur O. (1936) The Great Chain of Being: A Study of the History of an Idea. Harvard University Press. Available online, https://archive.org/details/ArthurO.LovejoyTheGreatChainOfBeing.

Sommers, Fred (1963) “Types and Ontology.” Philosophical Review 72: 327–363.

Appendix: ChatGPT tells a strange story

What do you make of this sentence: Inquisitive electrons eat blue theories for fish.

As an AI language model, I can analyze the grammar and syntax of the sentence, but I am unable to determine its meaning or context as it appears to be a nonsensical sentence.

The sentence is grammatically correct, with a subject "inquisitive electrons" and a predicate "eat blue theories for fish." However, the sentence lacks coherence and meaning, as electrons do not eat and theories cannot be consumed like food. It appears to be a string of words without any logical connection or intended message.

It's possible that this sentence is an example of a nonsensical or absurd statement meant to be humorous or artistic. However, without more context, it is impossible to know for sure.

Could you spin a yarn that uses that sentence?

Certainly, here is a short story that uses the sentence "Inquisitive electrons eat blue theories for fish":

Once upon a time, in a distant corner of the universe, there was a planet where everything was made up of pure energy. The energy beings on this planet were fascinated by the mysteries of the universe and spent their days pondering the nature of existence.

One day, a group of inquisitive electrons set out on a journey to find the truth about the universe. They traveled through space and time, exploring distant galaxies and discovering new forms of energy. But despite their adventures, they could not find the answer they were seeking.

One night, as they were resting on the edge of a nebula, they noticed a strange blue glow in the distance. Curious, they made their way toward the light, hoping to discover something new. As they approached, they realized that the glow was coming from a group of energy beings who were discussing their theories about the universe.

The inquisitive electrons were fascinated by the blue theories, but they could not understand them. Suddenly, one of the energy beings offered the electrons a trade: they would teach the electrons their theories, and in return, the electrons would provide them with fish, a delicacy on the planet.

The electrons were intrigued by the offer and agreed to the trade. But as they began to devour the blue theories, they realized that they could not actually taste them. They felt silly and embarrassed for falling for such a silly trade, but they had learned an important lesson about the dangers of being too inquisitive.

From that day forward, the inquisitive electrons continued their journey, searching for answers but always wary of falling for foolish trades. And on occasion, when they encountered new energy beings, they would recount the tale of how they once tried to eat blue theories for fish, and they would all share a laugh.

Some 45 years ago, when I was first thinking about sound symbolism and its possible application to natural language AI, one of the hypotheses I came up with was that the primitive notions defined by phonosemantic mappings to the phonological segments might also be operators in the system that manipulated them. That is, if a phoneme made reference to what could be construed as parallel processing, then it could also be used as an instruction to process information in parallel. Etc. One of my undergrad professors said I was implying a 'physical symbol system' (though in my case these are more iconic than symbolic). I don't know enough about such physical symbol systems to know whether they could apply to computational linguistic analysis.

As for the story trajectory, some years back while analyzing the big Thompson River Salish Dictionary I noticed something very interesting. Roots and affixes in this language (and in other Interior Salish languages, probably reconstructable to Proto-Salish) are labeled as weak, strong, etc. Strong morphemes attract stress, while the others donate it to the strong ones. It turned out that the different stages of storytelling correspond to stages of tasks, such as planning, gathering of materials, shaping them for construction, finishing, utilization, repair/storage/discarding, and so on. And these were all marked by different strengths of the morphemes. I've never seen anything like this in any other language family.
